4M-21: An Any-to-Any Vision Model for Tens of Tasks and Modalities

Today's paper presents 4M-21, an any-to-any vision model that handles tens of diverse tasks and modalities. It substantially expands on the capabilities of existing multimodal models such as 4M.

Method Overview

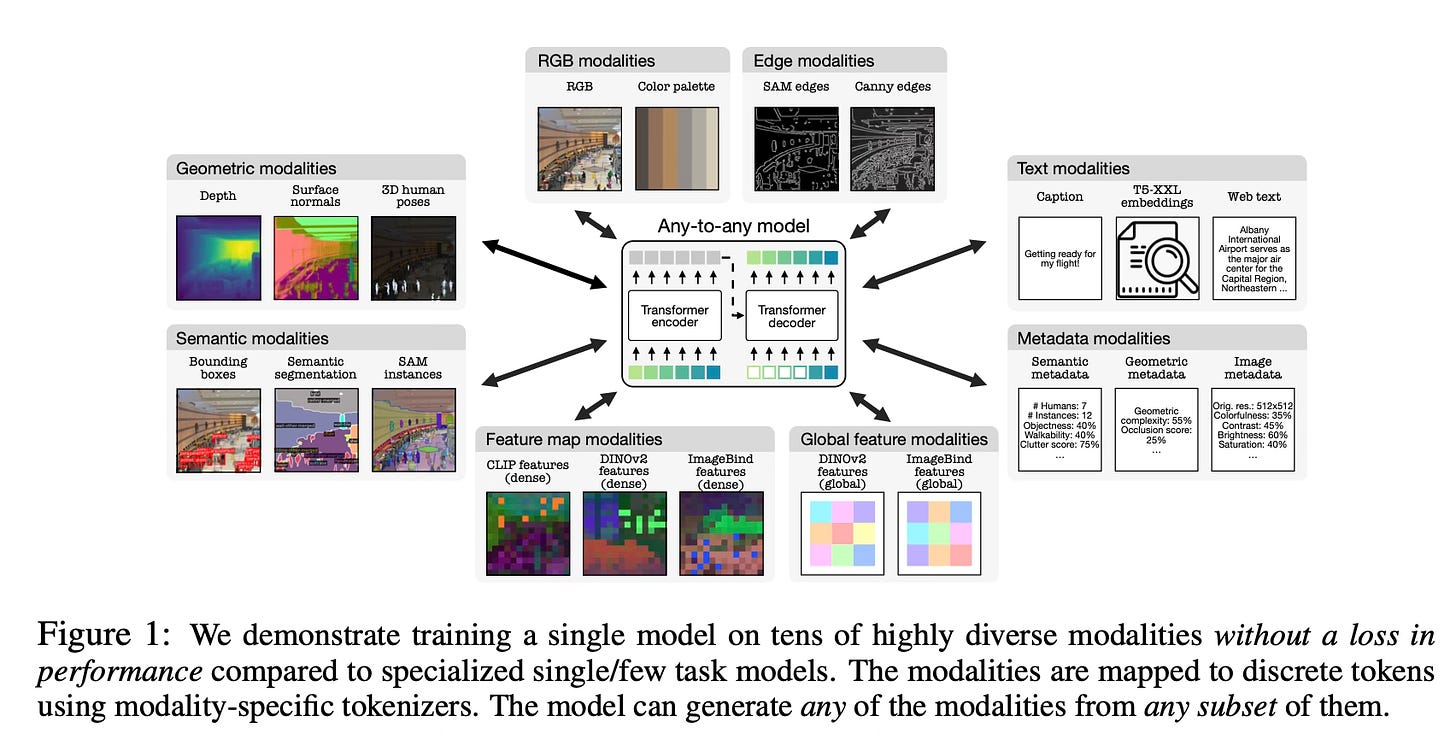

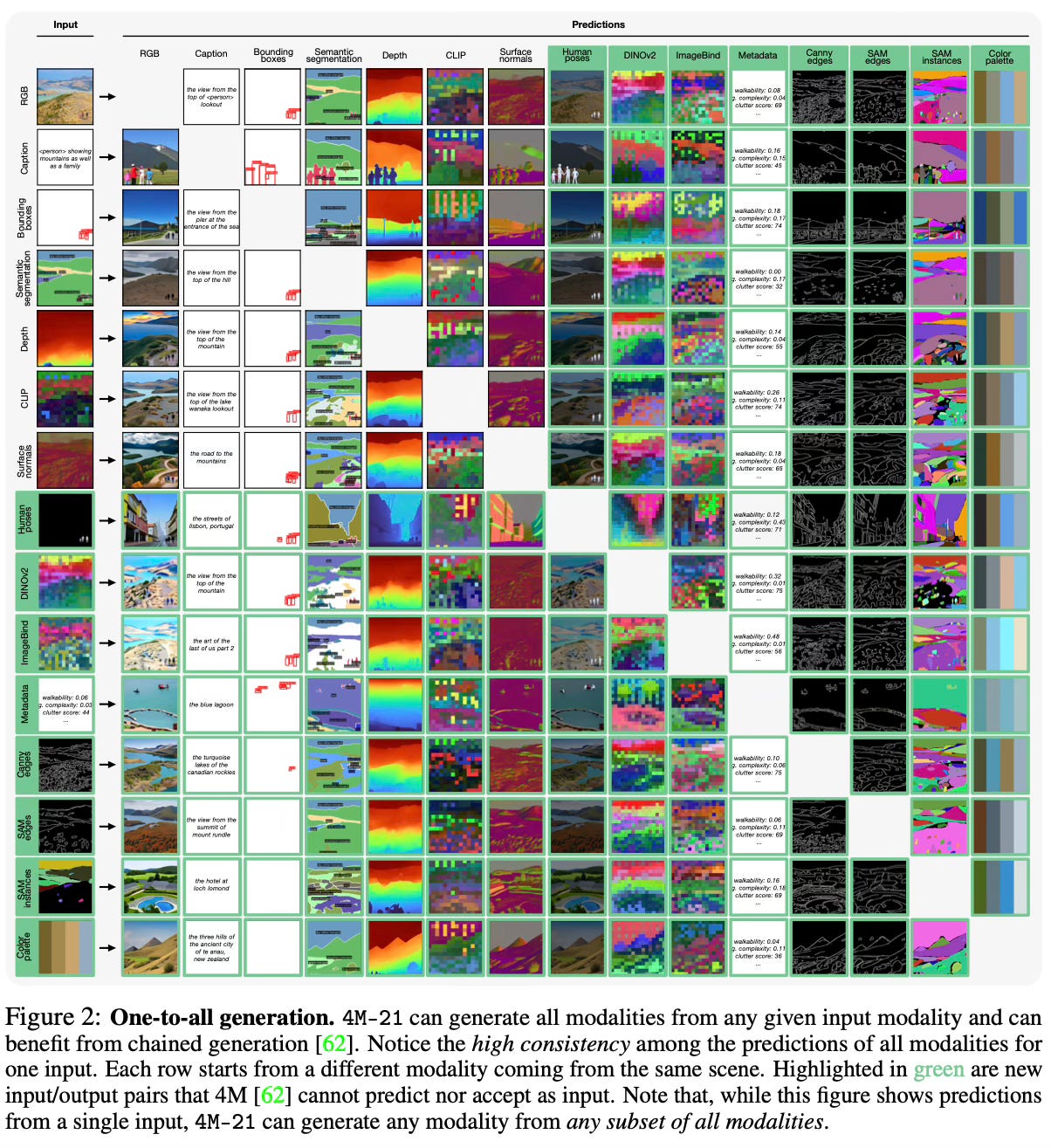

The method builds on the multimodal masked modeling approach of 4M, in which each modality is first converted into a sequence of discrete tokens by a modality-specific tokenizer. Because every modality then shares the same token-sequence format, the model can accept any modality as input and predict any other modality as output, as shown in the figure below.

During training, random subsets of tokens from all modalities are masked, and the objective is to predict the masked tokens from the remaining ones.

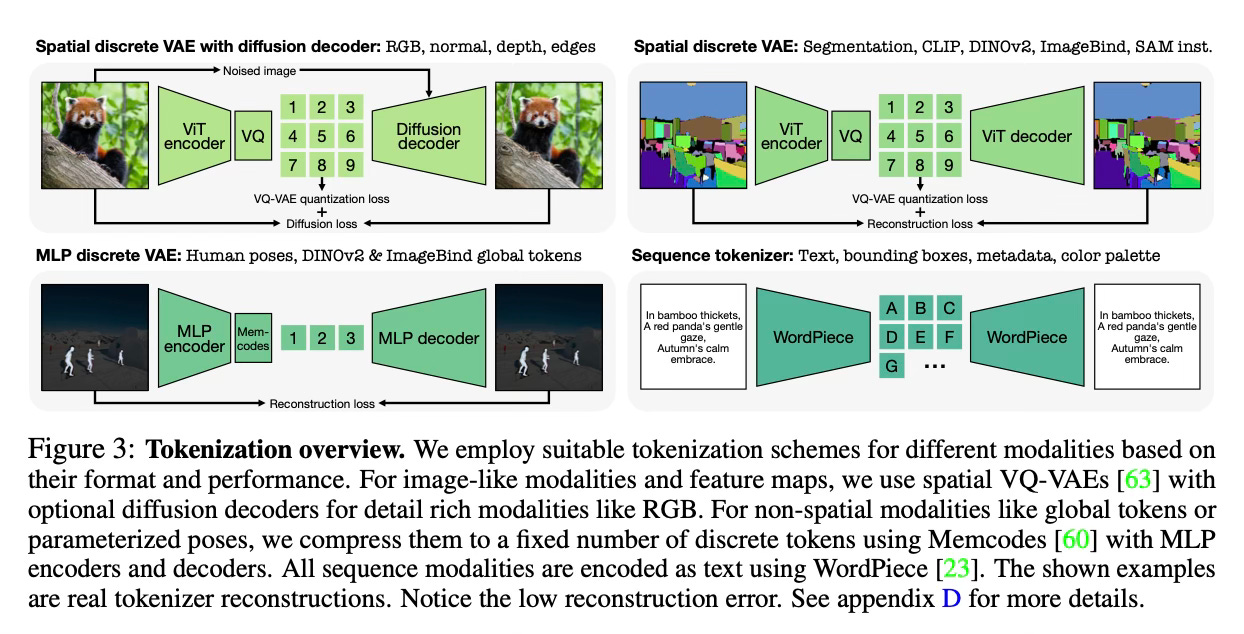
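
The masking step described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function name `sample_masked_split`, the fixed input/target budgets, the uniform sampling over all tokens, and the toy token ids are all assumptions made for the example, and the paper's actual sampling scheme may differ.

```python
import random

def sample_masked_split(tokens_by_modality, num_inputs, num_targets, seed=None):
    """Randomly split tokens from all modalities into visible inputs and masked targets.

    tokens_by_modality: dict mapping a modality name to a list of discrete token ids.
    Returns (inputs, targets), each a list of (modality, position, token_id) triples.
    """
    rng = random.Random(seed)
    # Flatten all modalities into one pool, remembering where each token came from.
    pool = [
        (modality, pos, tok)
        for modality, toks in tokens_by_modality.items()
        for pos, tok in enumerate(toks)
    ]
    if num_inputs + num_targets > len(pool):
        raise ValueError("budgets exceed the number of available tokens")
    # Hypothetical choice: sample uniformly over all tokens of all modalities.
    rng.shuffle(pool)
    inputs = pool[:num_inputs]                            # tokens the model sees
    targets = pool[num_inputs:num_inputs + num_targets]   # tokens the model must predict
    return inputs, targets

# Toy example with made-up token ids for three modalities.
example = {
    "rgb": [12, 7, 99, 3],
    "depth": [5, 5, 41, 8],
    "caption": [101, 2054, 102],
}
inputs, targets = sample_masked_split(example, num_inputs=4, num_targets=3, seed=0)
```

Each training step would then feed `inputs` to the model and compute a prediction loss on `targets` only; tokens that fall in neither set are simply dropped for that step.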

For image-like modalities such as RGB, edges, and feature maps, the authors train ViT-based VQ-VAE tokenizers that map each input to a small grid of tokens. For non-spatial data such as 3D human poses or global image embeddings, they use MLP-based discrete VAEs to compress the input into a short token sequence. Text data such as captions and metadata is tokenized with a WordPiece tokenizer.
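
To make the "discrete token" idea concrete, the sketch below shows the generic quantization step of a VQ-VAE: each continuous feature vector is replaced by the index of its nearest codebook entry. It is a simplified illustration under stated assumptions, not the paper's tokenizer. The encoder (a ViT in the paper) is omitted, the names `quantize` and `dequantize` are hypothetical, and the codebook and feature values are made up.

```python
def quantize(vectors, codebook):
    """Map each vector to the index of its nearest codebook entry (squared L2 distance)."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return [
        min(range(len(codebook)), key=lambda k: sq_dist(v, codebook[k]))
        for v in vectors
    ]

def dequantize(token_ids, codebook):
    """Look up the codebook vector for each token id (the decoder's input)."""
    return [codebook[k] for k in token_ids]

# Toy 2-D codebook with four entries; a real tokenizer learns a much larger one.
codebook = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
# Made-up encoder outputs for three image patches.
patch_features = [[0.1, -0.2], [0.9, 0.8], [0.2, 0.7]]

token_ids = quantize(patch_features, codebook)
print(token_ids)  # [0, 3, 2]
```

The resulting integer ids are what the masked-modeling transformer operates on; a decoder maps `dequantize(token_ids, codebook)` back to the original modality.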

The model is trained on data covering 21 diverse modalities, including RGB images, geometric data (surface normals, depth, 3D poses), semantic data (segmentation, bounding boxes), edges, feature maps from CLIP, DINOv2, and ImageBind, and various types of metadata. It is pre-trained on the COYO700M dataset and then fine-tuned on the smaller CC12M dataset with the additional modalities. The authors also incorporate web text from C4 for language modeling.

Results

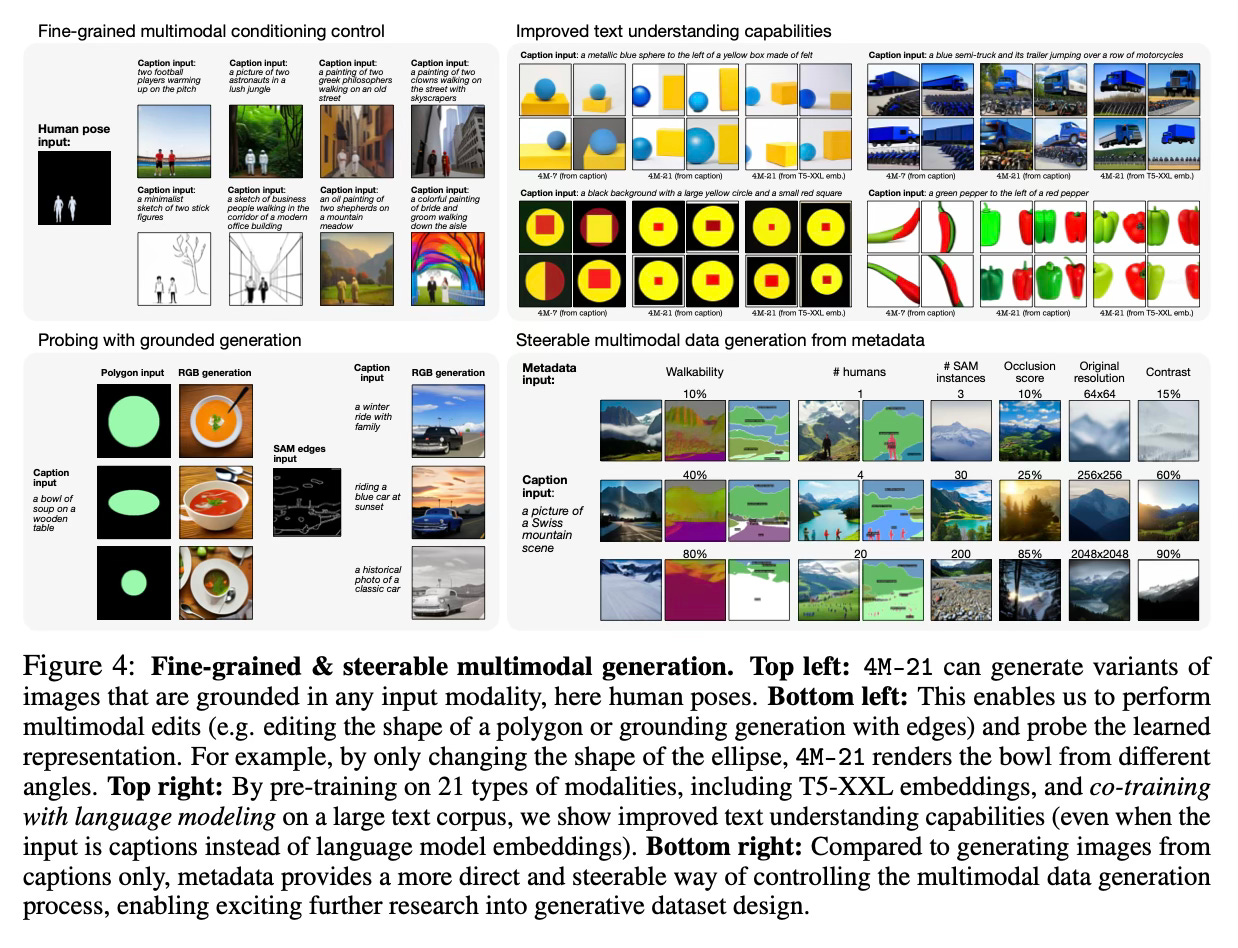

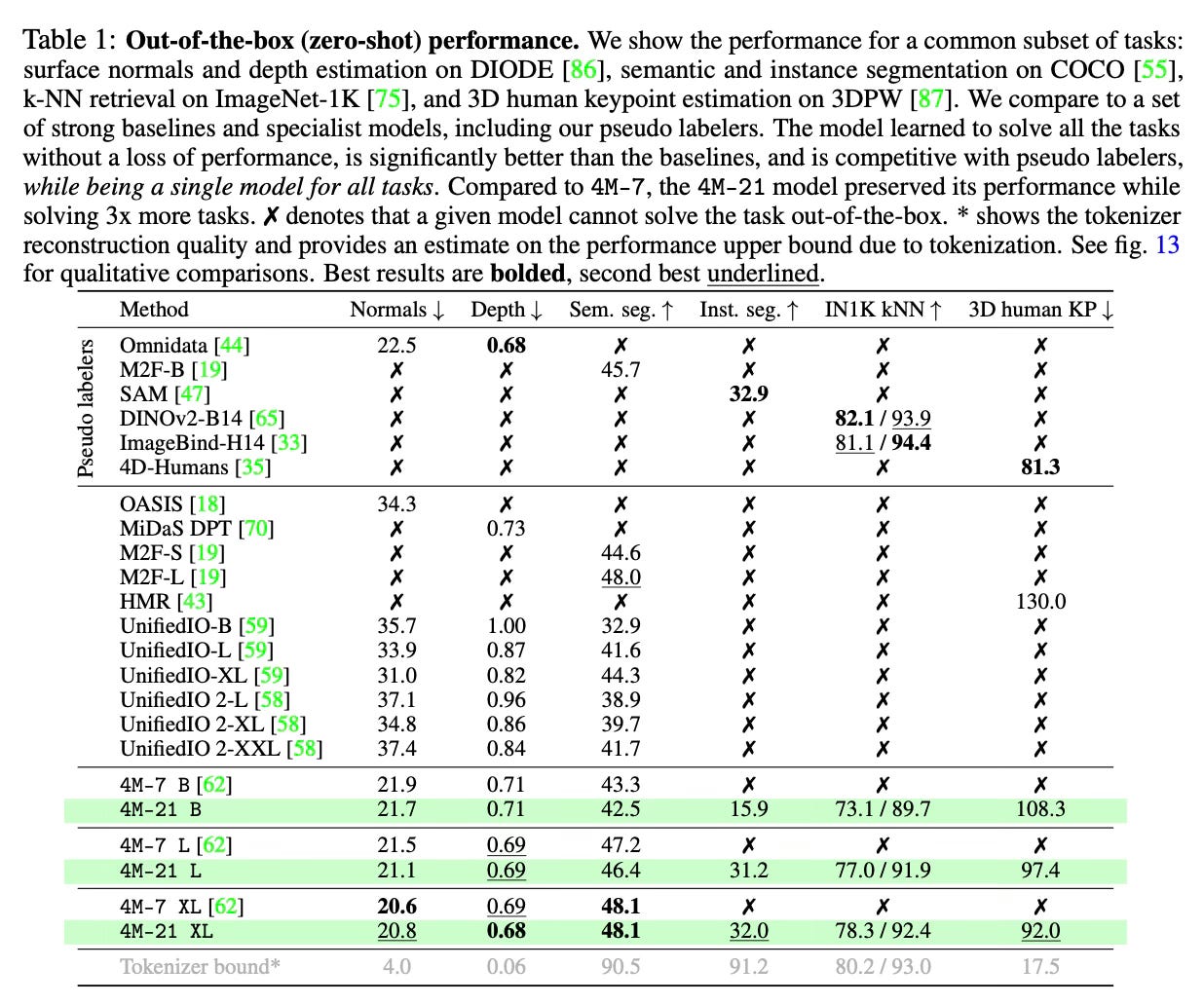

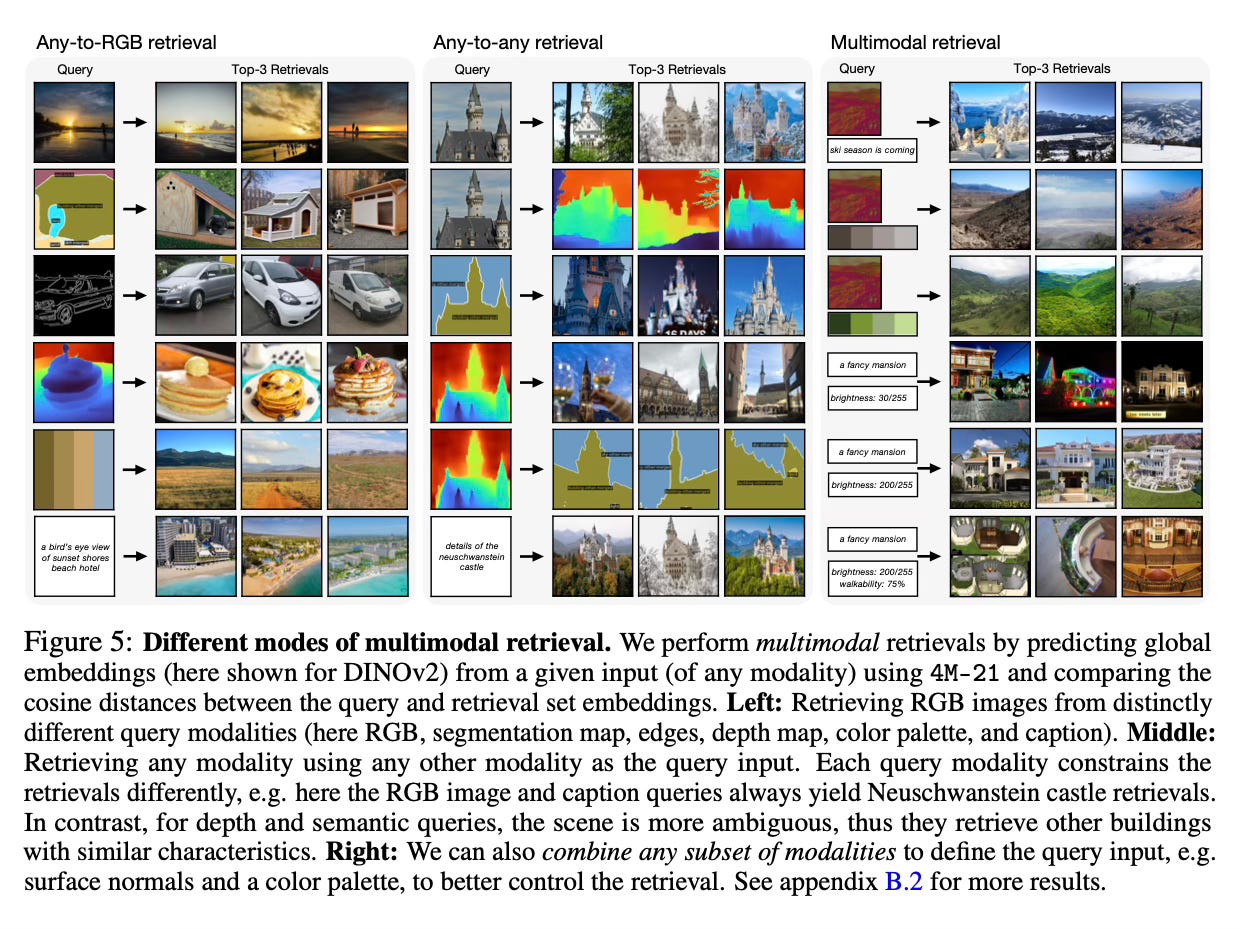

4M-21 performs strongly on a wide range of vision tasks out of the box, often matching or outperforming specialized single-task models even though it is a single unified model. It also enables new capabilities such as multimodal retrieval, controllable multimodal generation, and improved text understanding for image generation.

Conclusion

The paper presents a scalable approach to training a single any-to-any model on 21 diverse modalities without sacrificing performance. This significantly expands the out-of-the-box capabilities compared to existing models and unlocks new potential for multimodal interaction and generation. For more information, please consult the full paper or the project page.

Congrats to the authors for their work!

Bachmann, Roman, et al. "4M-21: An Any-to-Any Vision Model for Tens of Tasks and Modalities." ArXiv, 14 June 2024, https://arxiv.org/abs/2406.09406v2.