Autoregressive Model Beats Diffusion: Llama for Scalable Image Generation

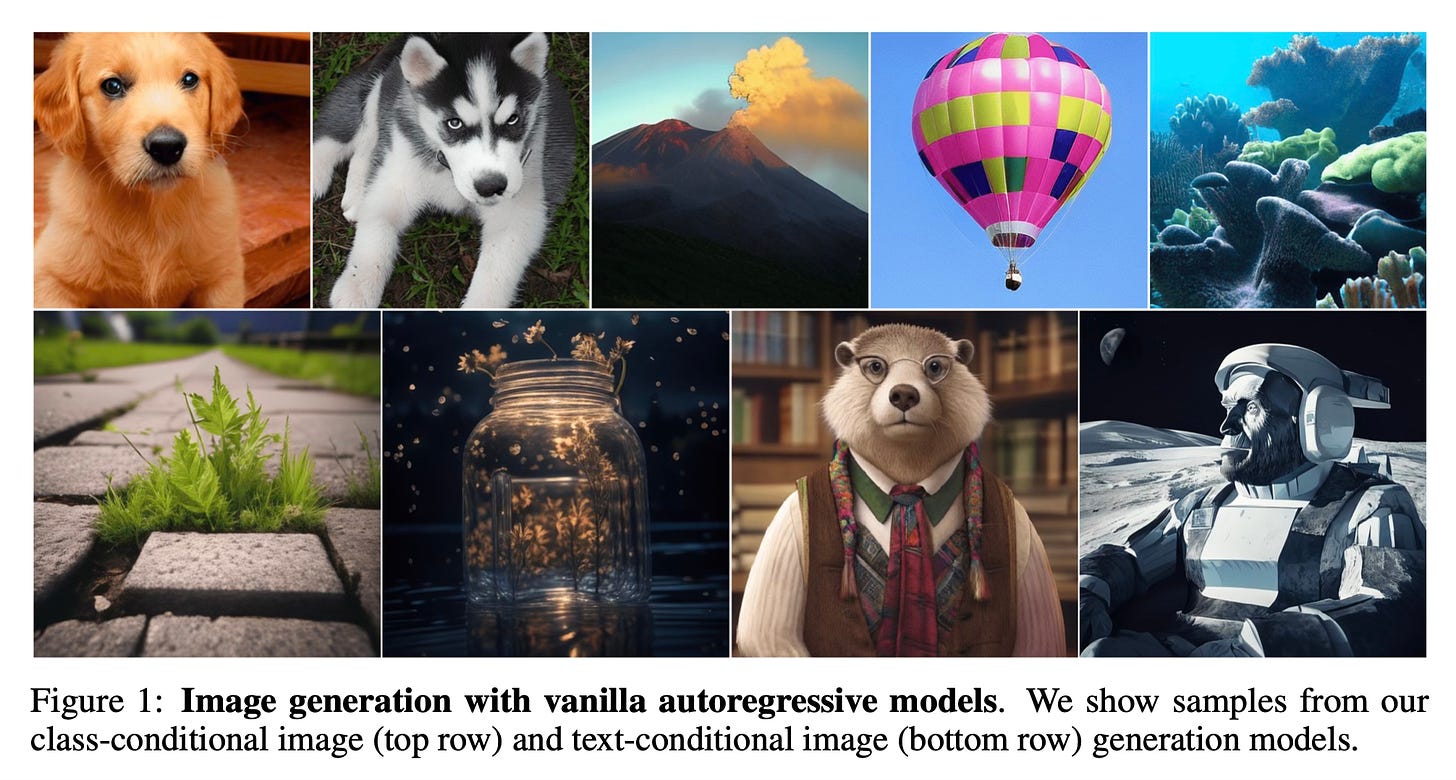

Today's paper introduces LlamaGen, a new family of image generation models that apply the "next-token prediction" paradigm of large language models to visual generation.

Method Overview

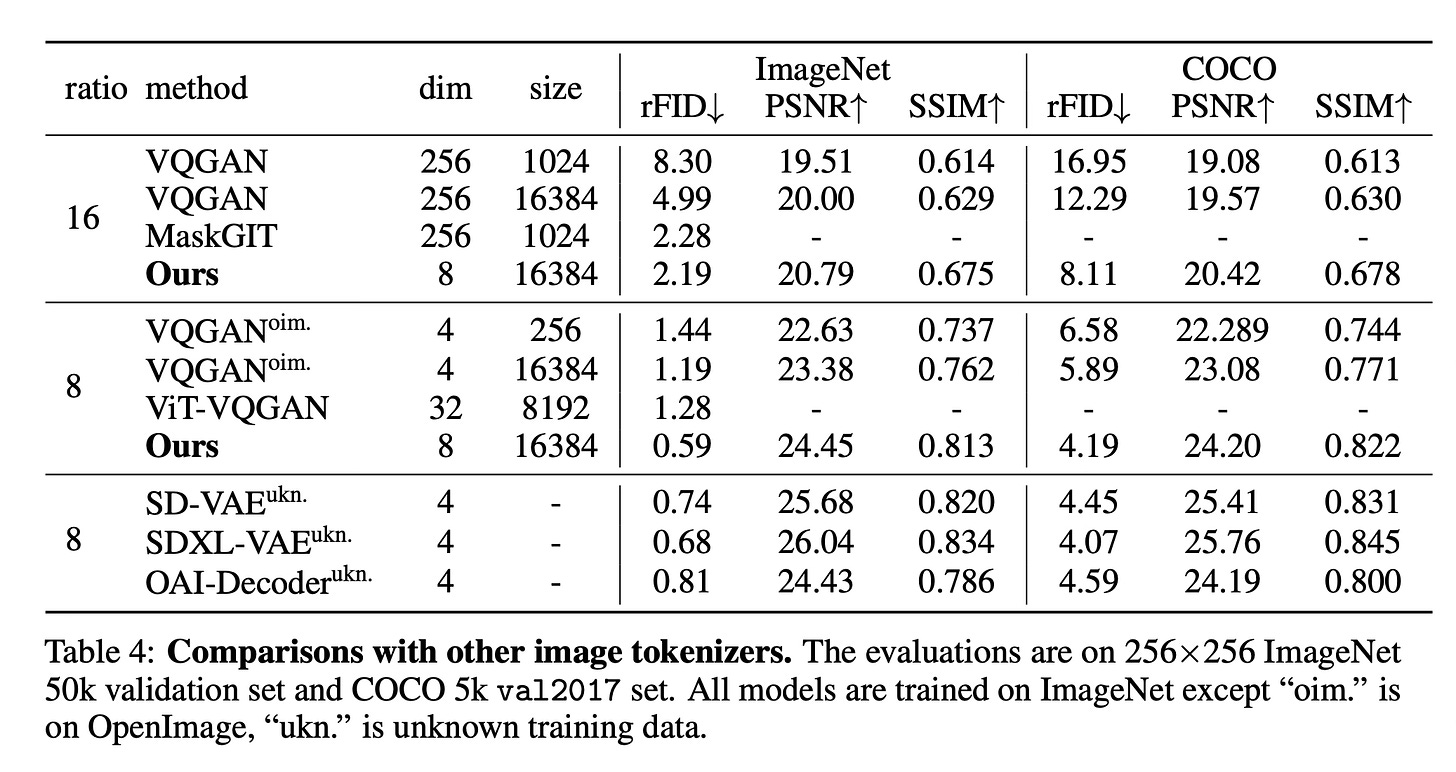

LlamaGen consists of an image tokenizer and an autoregressive model based on the Llama architecture. The image tokenizer converts images to discrete tokens using a quantized autoencoder with a learnable codebook. It combines a low-dimensional codebook vector, a large codebook, and adversarial training, and reaches high reconstruction quality (0.94 rFID, i.e. reconstruction FID) and high codebook usage (97%) on the ImageNet benchmark.
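The quantization step can be pictured as a nearest-neighbor lookup: each feature vector produced by the encoder is replaced by the index of the closest codebook entry. The sketch below is a toy illustration under stated assumptions, not the authors' implementation. The function names `quantize` and `codebook_usage`, the 2-dimensional vectors, and the 4-entry codebook are all hypothetical, and the encoder, decoder, and training losses are left out.

```python
def quantize(features, codebook):
    """Return, for each feature vector, the index of its nearest codebook entry
    (squared Euclidean distance). Hypothetical helper, not the paper's code."""
    indices = []
    for f in features:
        nearest = min(
            range(len(codebook)),
            key=lambda k: sum((a - b) ** 2 for a, b in zip(f, codebook[k])),
        )
        indices.append(nearest)
    return indices


def codebook_usage(indices, codebook_size):
    """Fraction of codebook entries selected at least once."""
    return len(set(indices)) / codebook_size


# Toy data (assumption): a 4-entry codebook of 2-D vectors and 4 encoder outputs.
codebook = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
features = [[0.1, -0.1], [0.9, 0.2], [0.8, 0.9], [0.2, 0.1]]

indices = quantize(features, codebook)
usage = codebook_usage(indices, len(codebook))
print(indices)  # [0, 1, 3, 0]
print(usage)    # 0.75
```

In this toy run, entry 2 is never selected, so usage is 75%. The 97% figure reported above is the same kind of ratio measured on ImageNet with the real codebook.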

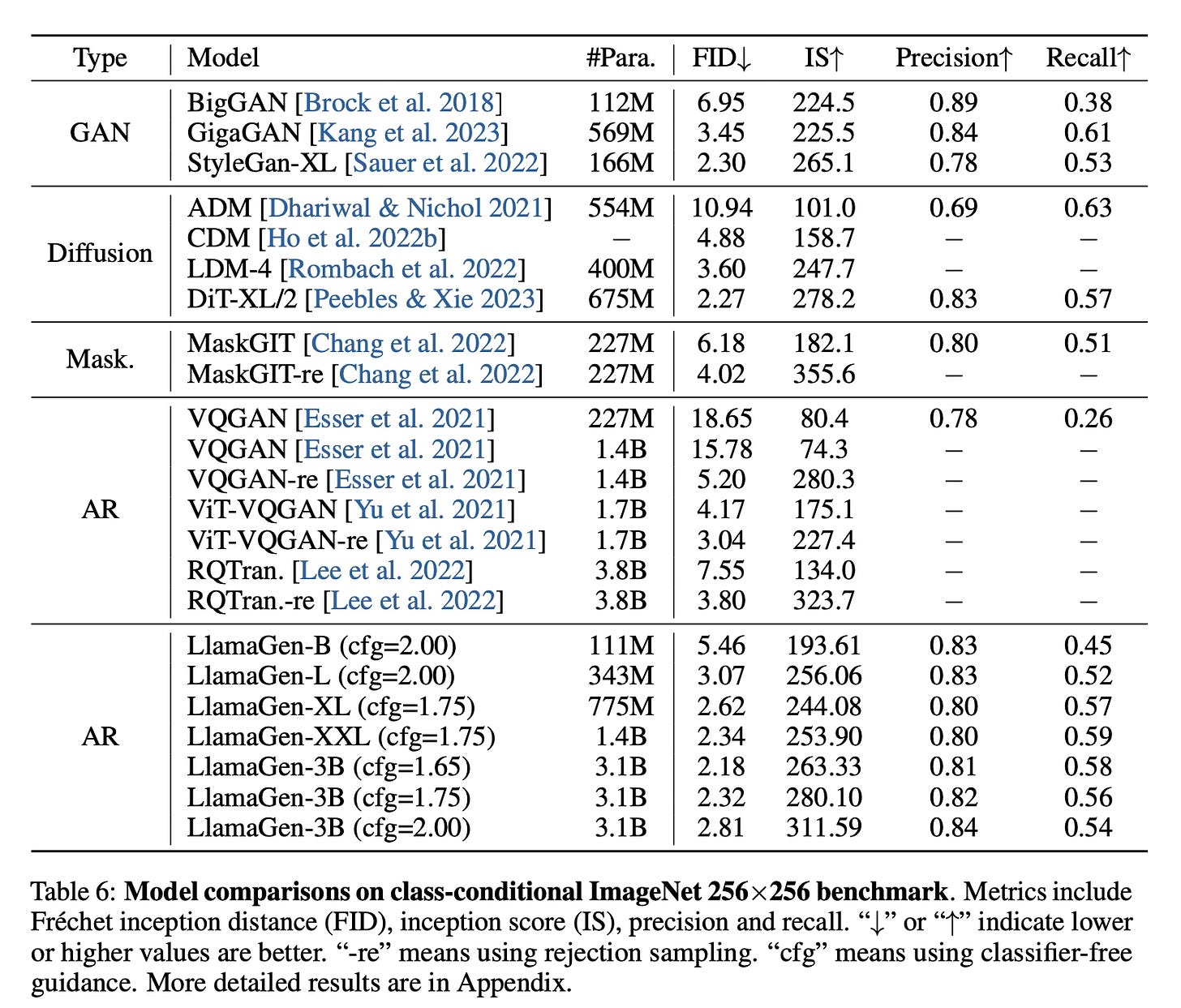

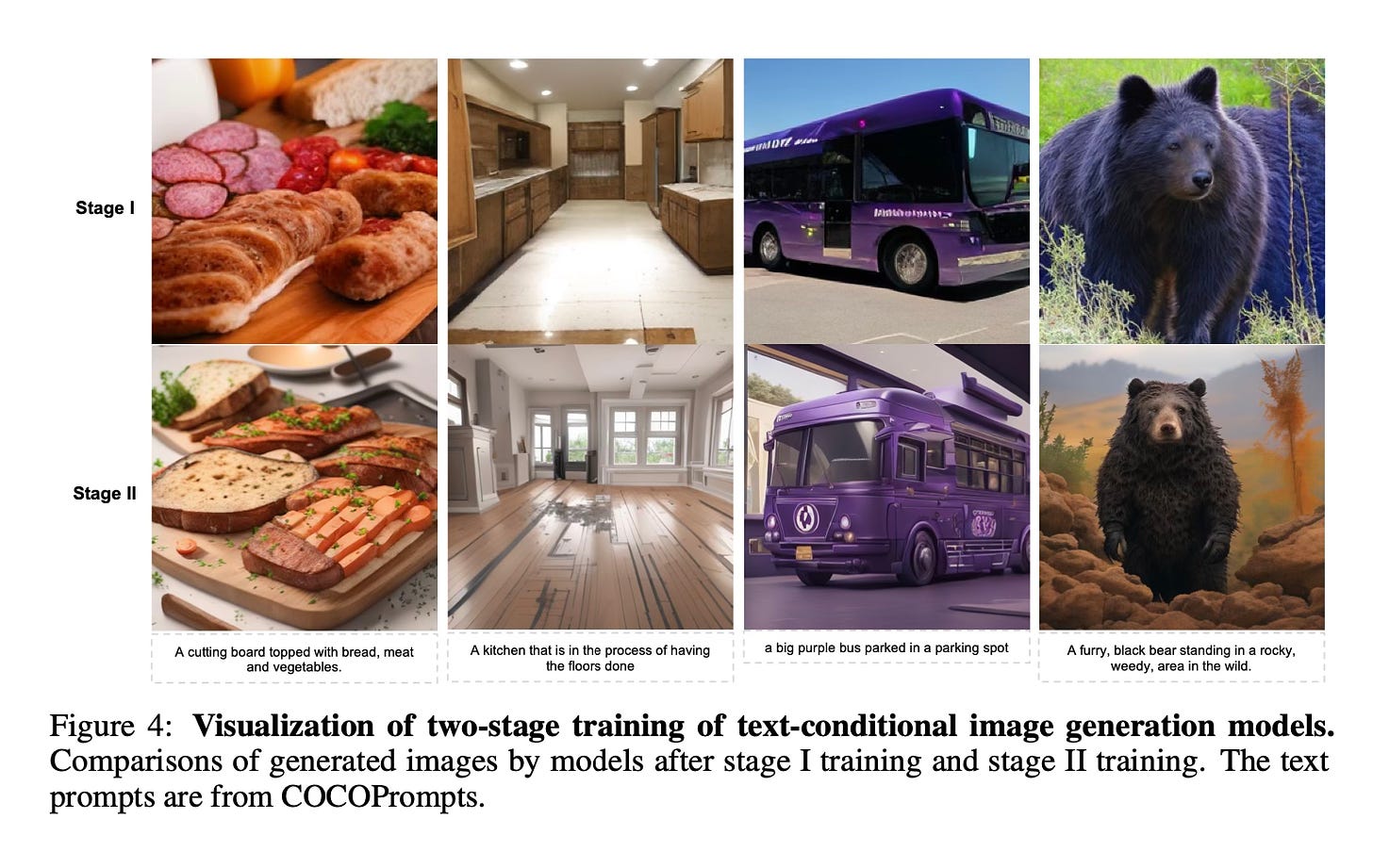

The autoregressive model takes the tokenized image as input and generates the tokens sequentially by predicting the next token conditioned on previous tokens. It uses the same architecture as Llama with pre-normalization, SwiGLU activation, and rotary positional embeddings. For class-conditional generation, a class embedding is used as the initial token. For text-conditional generation, a text encoder provides the initial text embedding.
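The generation loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the released code: `toy_logits` is a hypothetical stand-in for the transformer, the vocabulary size and sequence length are toy values, and the class condition is represented as a plain integer in the first position rather than a learned embedding.

```python
import math
import random

VOCAB_SIZE = 8    # toy codebook size (assumption)
NUM_TOKENS = 16   # toy 4x4 grid of image tokens (assumption)


def toy_logits(prefix):
    """Hypothetical stand-in for the transformer: one logit per codebook entry,
    computed from the prefix (here, only from its last element)."""
    favored = (prefix[-1] + 1) % VOCAB_SIZE
    return [1.0 if k == favored else 0.0 for k in range(VOCAB_SIZE)]


def sample(logits, rng, temperature=1.0):
    """Draw one token index from softmax(logits / temperature)."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scaled]
    return rng.choices(range(len(weights)), weights=weights, k=1)[0]


def generate(class_token, rng, num_tokens=NUM_TOKENS):
    """Next-token prediction: start from the condition, append one token at a time."""
    sequence = [class_token]  # the condition occupies the first position
    for _ in range(num_tokens):
        sequence.append(sample(toy_logits(sequence), rng))
    return sequence[1:]  # drop the condition, keep only image tokens


tokens = generate(class_token=3, rng=random.Random(0))
print(len(tokens))  # 16
```

In the full system, the resulting token indices would be mapped back to codebook vectors and passed through the tokenizer's decoder to produce pixels.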

Results

On the class-conditional ImageNet 256x256 benchmark, the LlamaGen models achieve state-of-the-art results among autoregressive approaches: the 3.1B-parameter model reaches an FID of 2.18 (lower is better), outperforming popular diffusion models such as LDM and DiT. The 775M-parameter text-conditional model, trained on LAION-COCO and high-quality images, demonstrates competitive visual quality and text alignment.

Conclusion

This work shows that vanilla autoregressive models without inductive biases on visual signals can serve as the basis for advanced image generation systems when scaled properly. The authors release all models and code to facilitate open-source research in this area. For more information, please consult the full paper or the code.

Congrats to the authors for their work!

Sun, Peize, et al. "Autoregressive Model Beats Diffusion: Llama for Scalable Image Generation." arXiv preprint arXiv:2406.06525, 2024.