Depth Anything V2

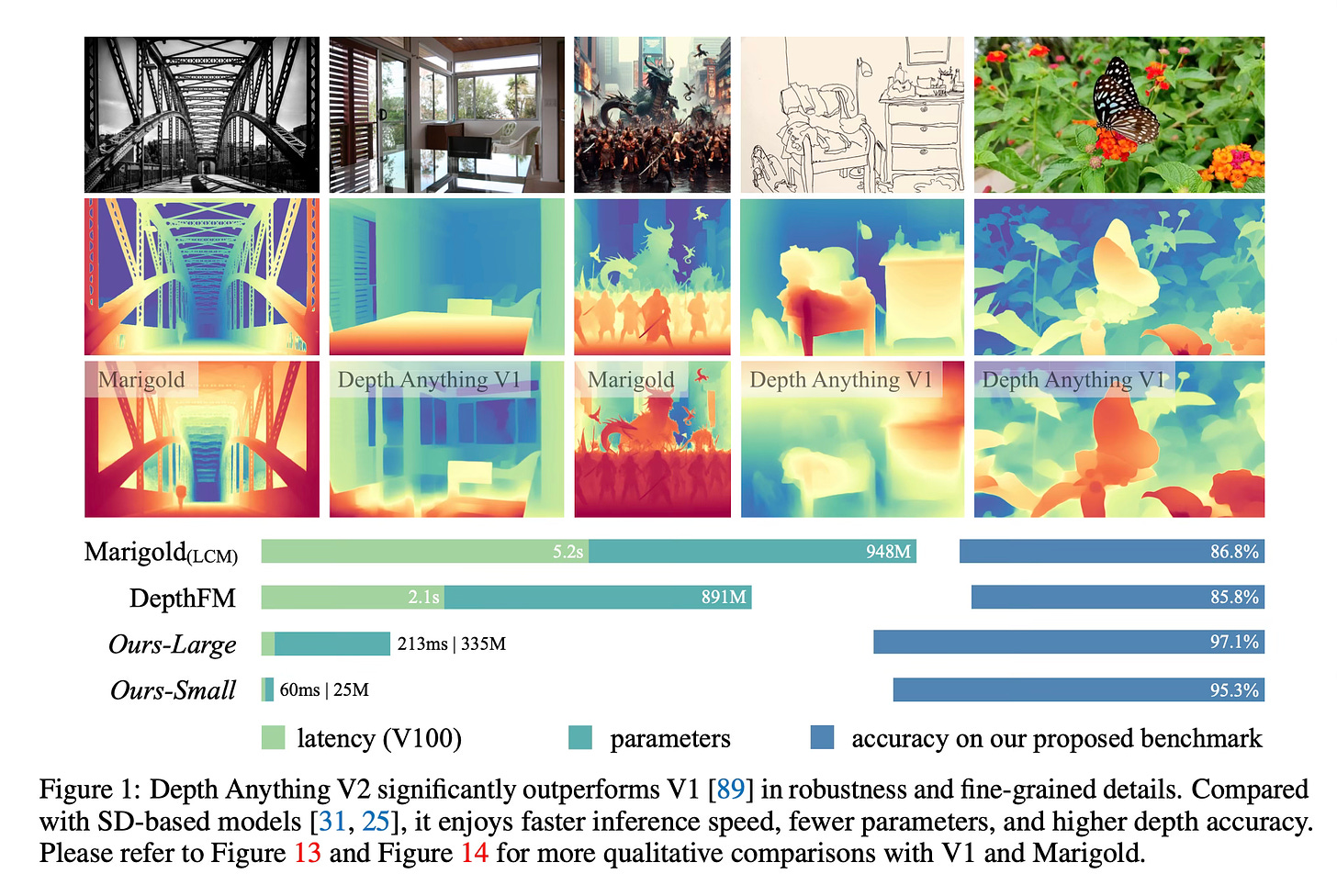

Today's paper presents Depth Anything V2, a powerful monocular depth estimation model that significantly improves upon the previous version. It aims to produce robust and fine-grained depth predictions while supporting various model scales for different applications.

Method Overview

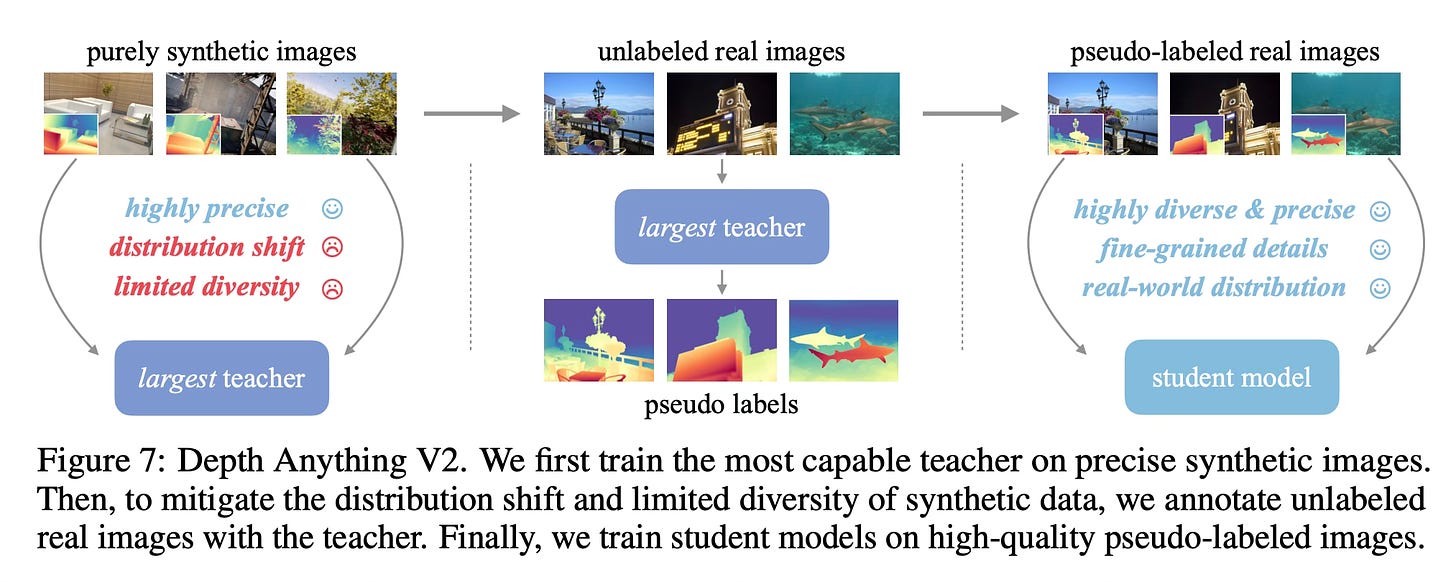

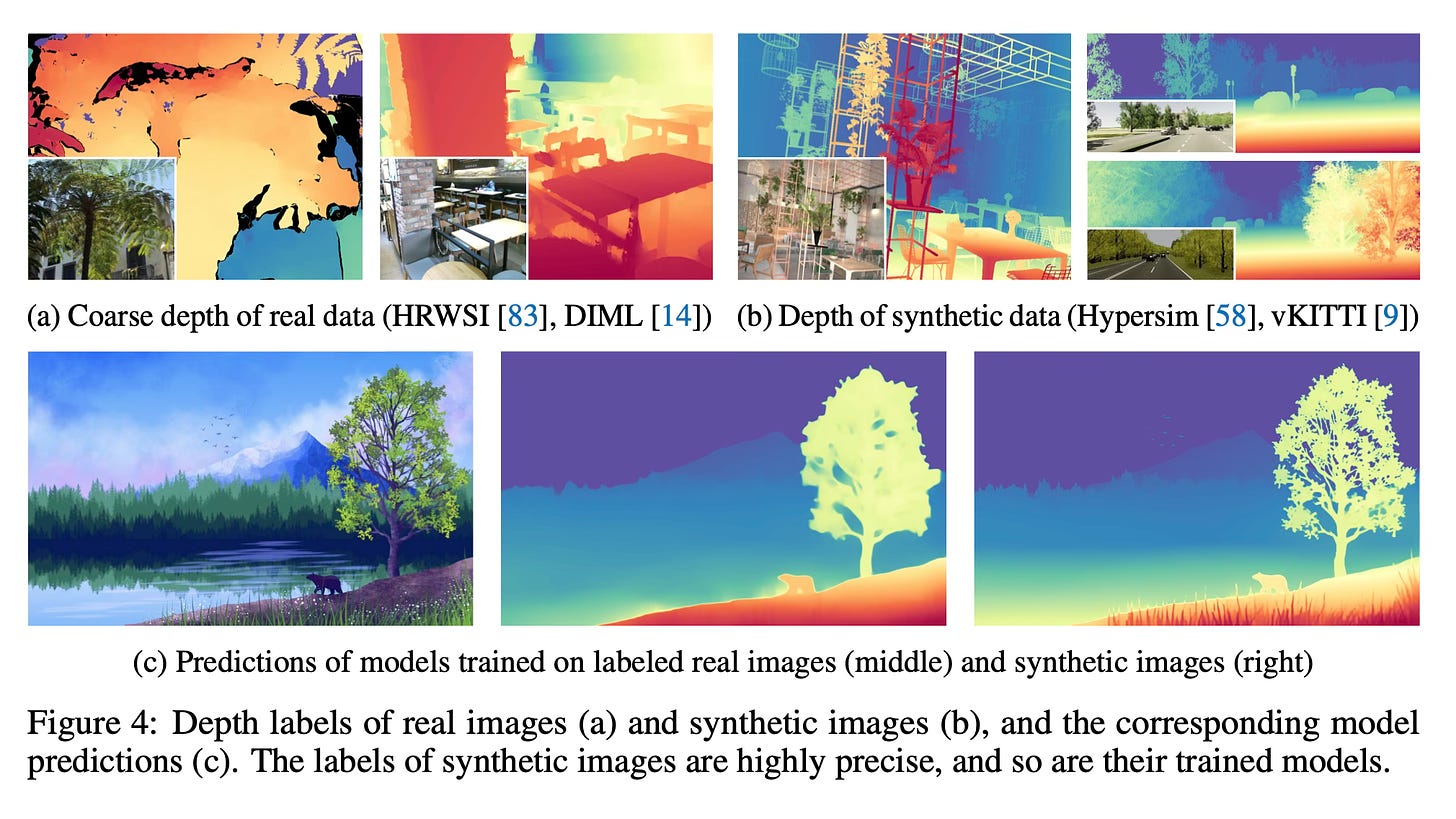

The key idea behind Depth Anything V2 is to combine the precise labels of synthetic data with the diversity and realism of real images.

The method consists of three main steps:

First, a highly capable teacher model based on the DINOv2-Giant encoder is trained solely on precise synthetic images. This ensures that the model learns to predict fine-grained depth details accurately.

Next, this teacher model is used to generate pseudo depth labels for a large-scale dataset of 62 million unlabeled real images. These pseudo-labeled images capture the real-world distribution and diversity that synthetic data lacks.

Finally, smaller student models of different scales (DINOv2-Small, -Base, -Large) are trained on the pseudo-labeled real data. This allows the students to learn robust depth estimation while benefiting from the teacher's high-quality predictions and the real data distribution.
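The three steps above can be sketched as a toy teacher-student pipeline. This is only an illustration under strong assumptions, not the authors' implementation: scalar features stand in for images, a least-squares line stands in for each DINOv2-based model, and the names `fit_linear` and `run_pipeline` as well as the synthetic "ground truth" are hypothetical.

```python
import random


def fit_linear(xs, ys):
    """Least-squares fit of y = a*x + b; a stand-in for training a depth model."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return lambda x: a * x + b


def run_pipeline(seed=0):
    rng = random.Random(seed)

    # Step 1: train the teacher on synthetic data, where labels are exact.
    synthetic_x = [rng.uniform(0.0, 1.0) for _ in range(200)]
    synthetic_depth = [2.0 * x + 1.0 for x in synthetic_x]  # hypothetical ground truth
    teacher = fit_linear(synthetic_x, synthetic_depth)

    # Step 2: use the teacher to pseudo-label unlabeled "real" inputs.
    real_x = [rng.uniform(0.0, 1.0) for _ in range(1000)]
    pseudo_depth = [teacher(x) for x in real_x]

    # Step 3: train the student only on the pseudo-labeled real data.
    student = fit_linear(real_x, pseudo_depth)
    return teacher, student


teacher, student = run_pipeline()
print(abs(teacher(0.5) - student(0.5)) < 1e-6)  # student reproduces the teacher
```

The point of the sketch is the data flow: the student never sees a synthetic sample, only real inputs paired with the teacher's predictions.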

By combining the advantages of synthetic data (precise labels) and real data (diversity and realism), Depth Anything V2 can produce highly accurate depth maps with fine details, handle complex scenes with transparent objects and reflections, and generalize well to various domains.

Results

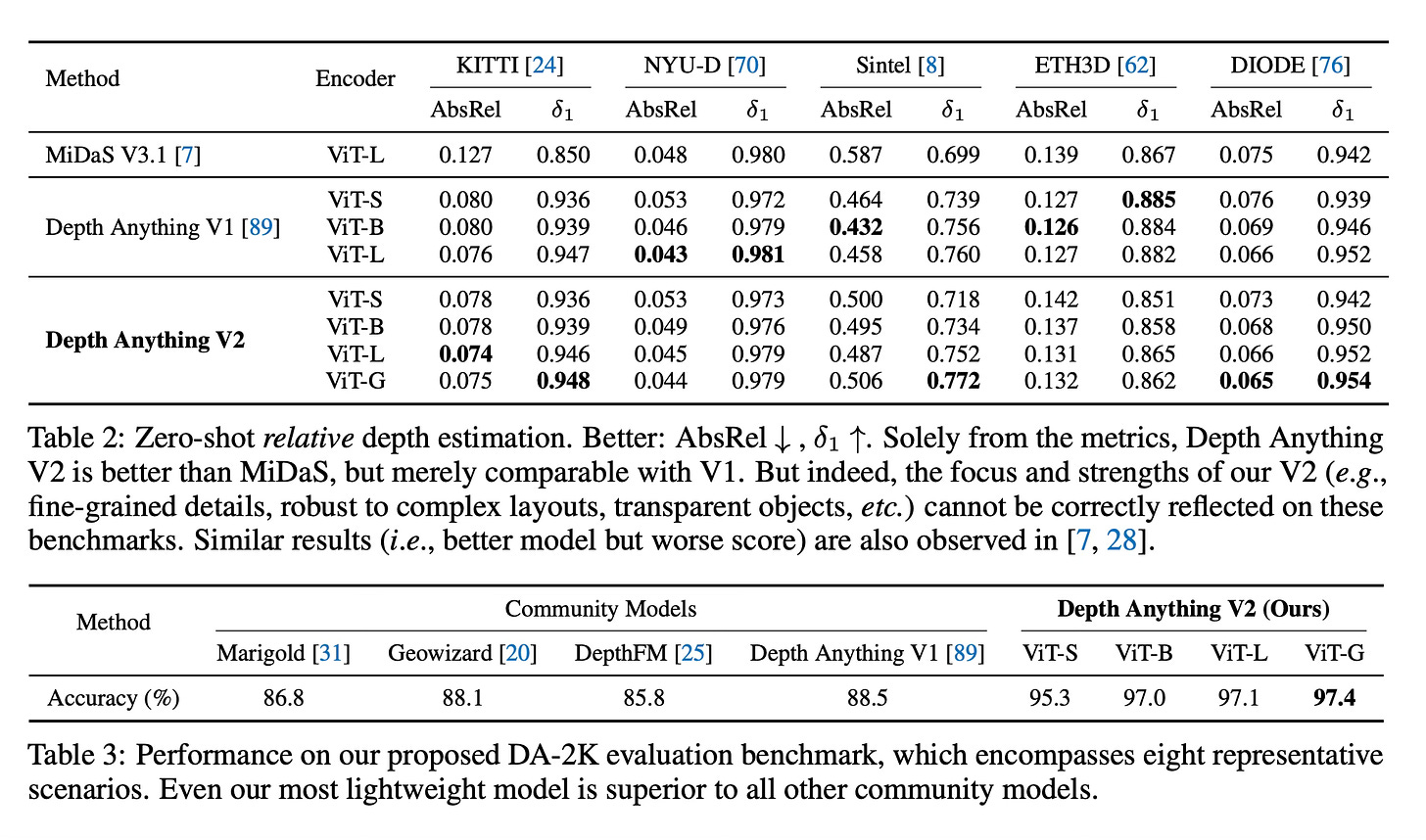

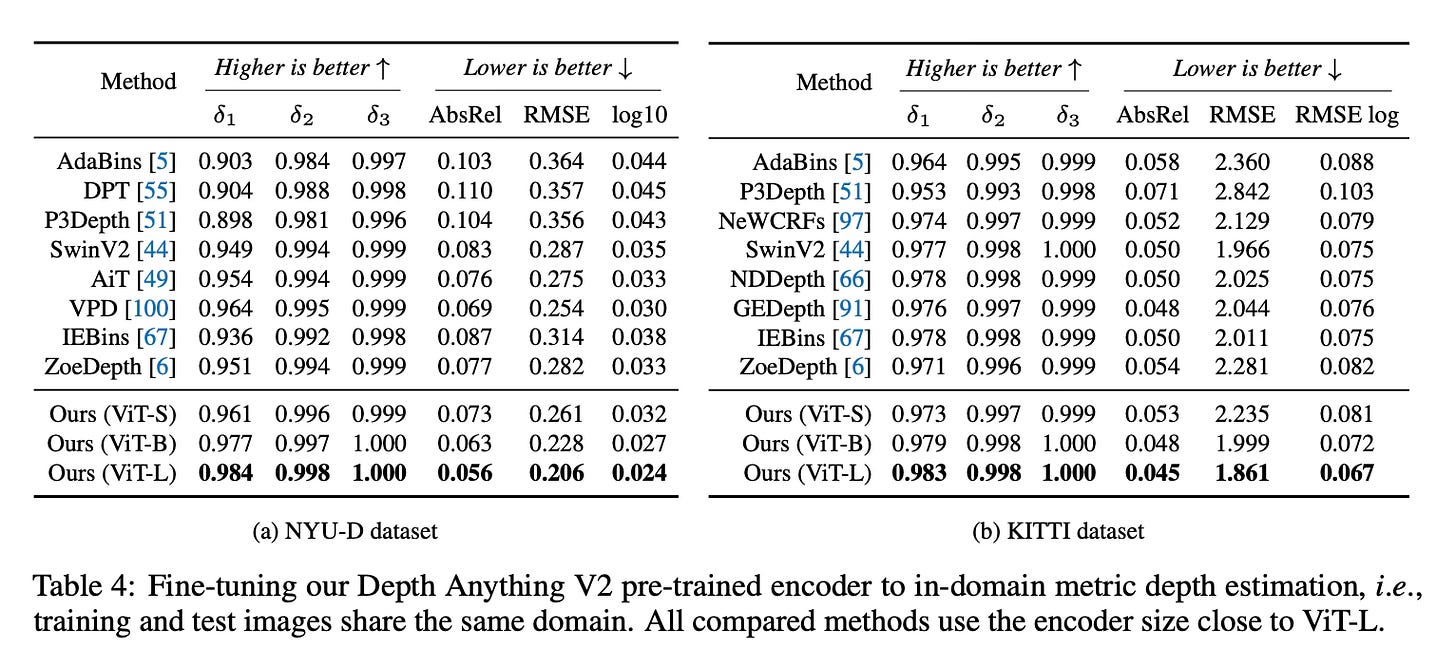

Depth Anything V2 significantly outperforms previous methods, including the original Depth Anything V1 and recent Stable Diffusion-based models such as Marigold and GeoWizard. Even the lightweight ViT-Small model achieves remarkable performance, surpassing much larger models from the community.

The paper also introduces a new evaluation benchmark, DA-2K, covering diverse scenarios. Depth Anything V2 demonstrates superior accuracy, with the ViT-Giant model achieving 97.4% accuracy in relative depth discrimination.
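To make "relative depth discrimination" concrete, here is a minimal sketch of how such an accuracy could be computed. It assumes each annotation is a pair of pixel coordinates plus a label saying which of the two points is closer, and that a smaller predicted value means closer. The annotation format, the value convention, and the function name `pairwise_accuracy` are assumptions for illustration, not the benchmark's actual tooling.

```python
def pairwise_accuracy(depth_map, annotations):
    """Fraction of point pairs whose predicted depth ordering matches the label.

    depth_map: 2D list of predicted depths (assumption: smaller means closer).
    annotations: list of ((row1, col1), (row2, col2), closer), where closer is
                 0 if the first point is closer and 1 if the second one is.
    """
    correct = 0
    for (r1, c1), (r2, c2), closer in annotations:
        d1 = depth_map[r1][c1]
        d2 = depth_map[r2][c2]
        predicted = 0 if d1 < d2 else 1
        correct += int(predicted == closer)
    return correct / len(annotations)


# Hypothetical 2x2 prediction and three annotated pairs.
depth_map = [[1.0, 2.0],
             [3.0, 4.0]]
annotations = [
    ((0, 0), (1, 1), 0),  # predicted ordering agrees with the label
    ((0, 1), (1, 0), 1),  # predicted ordering disagrees with the label
    ((1, 1), (0, 0), 1),  # predicted ordering agrees with the label
]
accuracy = pairwise_accuracy(depth_map, annotations)
print(round(accuracy, 3))  # 0.667
```

A metric of this kind depends only on the ordering of the two predicted values, so it can be applied to relative depth predictions that have no metric scale.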

Conclusion

Depth Anything V2 presents a powerful foundation model for monocular depth estimation by combining the strengths of synthetic and real data. It produces robust and fine-grained depth predictions, supports various model scales, and can be easily fine-tuned for downstream tasks. For more information, please consult the full paper.

Congrats to the authors for their work!

Yang, Lihe, et al. "Depth Anything V2." arXiv preprint arXiv:2406.09414, 13 June 2024.