FLAME: Factuality-Aware Alignment for Large Language Models

Today’s paper studies how to improve the factual accuracy of large language models (LLMs) during alignment, the process that teaches LLMs to follow natural-language instructions. The authors observe that conventional alignment does not improve factual accuracy and often leads models to generate more false claims.

The standard alignment process consists of two steps: supervised fine-tuning (SFT) and reinforcement learning (RL). The authors find that both steps can inadvertently encourage hallucination in LLMs.

In the SFT step, fine-tuning on human-written responses can expose the model to information it did not learn during pre-training, which encourages it to state facts it does not actually know. In the RL step, reward functions that favor more detailed responses can likewise increase hallucination. To address these issues, the authors propose factuality-aware alignment (FLAME).

Method Overview

FLAME (Factuality-Aware Alignment) aims to improve factual accuracy in both alignment stages, SFT and RL (implemented with DPO), while keeping the model able to follow diverse instructions. The authors start from a pre-trained LLM and use a self-rewarding scheme, in which the model itself supplies the reward signal for instruction following.

Factuality-Aware Supervised Fine-Tuning (SFT):

Classify instructions as fact-based or not using the LLM itself

For non-fact-based instructions, use human-written responses for fine-tuning

For fact-based instructions, sample responses from the pre-trained LLM via few-shot prompting to avoid introducing unknown knowledge

Use the above obtained data for fine-tuning
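
The steps above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code: `is_fact_based` and `sample_from_pretrained` are hypothetical callables standing in for the LLM-based instruction classifier and the few-shot sampler, and the toy versions below only exist so the example runs.

```python
from typing import Callable, Dict, List


def build_sft_data(
    examples: List[Dict[str, str]],
    is_fact_based: Callable[[str], bool],
    sample_from_pretrained: Callable[[str], str],
) -> List[Dict[str, str]]:
    """Choose the fine-tuning target for each instruction.

    Fact-based instructions get a response sampled from the pre-trained
    model; all other instructions keep the human-written response.
    """
    sft_data = []
    for ex in examples:
        instruction = ex["instruction"]
        if is_fact_based(instruction):
            response = sample_from_pretrained(instruction)
            source = "pretrained_llm"
        else:
            response = ex["human_response"]
            source = "human"
        sft_data.append(
            {"instruction": instruction, "response": response, "source": source}
        )
    return sft_data


# Toy stand-ins (assumptions for illustration only).
def toy_is_fact_based(instruction: str) -> bool:
    # A real system would ask the LLM; here a keyword check is enough.
    return instruction.lower().startswith(("who", "when", "tell me about"))


def toy_sample(instruction: str) -> str:
    # A real system would few-shot prompt the pre-trained LLM.
    return f"[model-generated answer to: {instruction}]"


examples = [
    {
        "instruction": "Tell me about the Eiffel Tower.",
        "human_response": "A human-written biography-style answer.",
    },
    {
        "instruction": "Write a haiku about autumn.",
        "human_response": "A human-written haiku.",
    },
]

sft_data = build_sft_data(examples, toy_is_fact_based, toy_sample)
```

The design point is that the human-written answer to a fact-based instruction is never used as a training target, so fine-tuning does not push the model toward facts it has not seen.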

Factuality-Aware Direct Preference Optimization (DPO):

Further fine-tune the SFT model using DPO

Use the SFT model as the reward model for instruction following

Introduce a separate factuality reward model to evaluate factual accuracy

Create preference pairs (prompt, factual response, non-factual response) for fact-based instructions

Fine-tune on these preference pairs to improve both instruction following and factuality
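
A rough sketch of how the two reward signals could be turned into preference pairs is shown below. The field names (`if_reward`, `fact_reward`) and the best-versus-worst selection rule are assumptions made for illustration; the summary above does not specify how pairs are selected.

```python
from typing import Dict, List


def build_preference_pairs(
    prompt: str,
    candidates: List[Dict],
    fact_based: bool,
) -> List[Dict[str, str]]:
    """Build (prompt, chosen, rejected) pairs from scored candidates.

    Each candidate is a dict with keys 'text', 'if_reward' (instruction
    following score) and 'fact_reward' (factuality score). Both scores are
    hypothetical outputs of the two reward models.
    """
    pairs = []

    # Instruction-following pair: best vs. worst by instruction-following reward.
    by_if = sorted(candidates, key=lambda c: c["if_reward"])
    if by_if[-1]["if_reward"] > by_if[0]["if_reward"]:
        pairs.append(
            {
                "prompt": prompt,
                "chosen": by_if[-1]["text"],
                "rejected": by_if[0]["text"],
                "signal": "instruction_following",
            }
        )

    # Factuality pair: only created for fact-based instructions.
    if fact_based:
        by_fact = sorted(candidates, key=lambda c: c["fact_reward"])
        if by_fact[-1]["fact_reward"] > by_fact[0]["fact_reward"]:
            pairs.append(
                {
                    "prompt": prompt,
                    "chosen": by_fact[-1]["text"],
                    "rejected": by_fact[0]["text"],
                    "signal": "factuality",
                }
            )
    return pairs


candidates = [
    {"text": "response A", "if_reward": 4.0, "fact_reward": 0.9},
    {"text": "response B", "if_reward": 5.0, "fact_reward": 0.4},
    {"text": "response C", "if_reward": 2.0, "fact_reward": 0.7},
]

pairs = build_preference_pairs("Tell me about Marie Curie.", candidates, fact_based=True)
```

In this toy data, response B scores highest on instruction following but lowest on factuality, so it is chosen in one pair and rejected in the other. That tension is why a separate factuality signal is useful.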

FLAME separates factuality from general instruction following, allowing the LLM to rely on its own knowledge for factual responses, and optimizes both aspects jointly during the final DPO stage.
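
For reference, the standard DPO objective (as generally defined, not reproduced from this paper) is given below. Here $x$ is the prompt, $y_w$ the preferred response, $y_l$ the rejected response, $\pi_\theta$ the model being trained, $\pi_{\mathrm{ref}}$ a frozen reference model, $\sigma$ the logistic function, and $\beta$ a temperature-like hyperparameter. Under one reading of the summary above, $\pi_{\mathrm{ref}}$ would be the SFT model and $\mathcal{D}$ would contain both the instruction-following and the factuality pairs; both are assumptions.

```latex
\mathcal{L}_{\mathrm{DPO}}(\pi_\theta; \pi_{\mathrm{ref}})
  = -\,\mathbb{E}_{(x,\, y_w,\, y_l) \sim \mathcal{D}}
    \left[
      \log \sigma\!\left(
        \beta \log \frac{\pi_\theta(y_w \mid x)}{\pi_{\mathrm{ref}}(y_w \mid x)}
        - \beta \log \frac{\pi_\theta(y_l \mid x)}{\pi_{\mathrm{ref}}(y_l \mid x)}
      \right)
    \right]
```

Minimizing this loss raises the likelihood of $y_w$ relative to $y_l$, measured against the reference model, so adding factuality pairs to $\mathcal{D}$ steers the model toward more factual responses in the same training run.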

Results

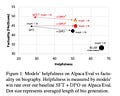

Experiments show that FLAME guides LLMs to output more factual responses while maintaining instruction-following capability, outperforming the standard alignment process.

Conclusion

The proposed factuality-aware alignment method enhances the factual accuracy of LLMs during the alignment process by carefully controlling the information presented during fine-tuning and incorporating explicit factuality rewards. For more information please consult the full paper.

Congrats to the authors for their work!

Lin, Sheng-Chieh, et al. "FLAME: Factuality-Aware Alignment for Large Language Models." arXiv preprint arXiv:2405.01525 (2024).