HQ-Edit: A High-Quality Dataset for Instruction-based Image Editing

Today’s paper introduces HQ-Edit, a new high-quality dataset for instruction-based image editing. HQ-Edit contains around 200,000 edits and leverages advanced foundation models like GPT-4V and DALL-E 3 to generate high-quality image editing pairs at scale. The dataset features high-resolution images rich in detail along with comprehensive editing instructions.

Method Overview

HQ-Edit is created using a three-step pipeline:

1. Expansion - A small set of 293 diverse seed examples, each consisting of an input image description, an output image description, and an edit instruction, is collected from various sources. GPT-4 then expands these into around 100,000 instances to ensure a broad diversity of edit instructions. The prompt used is shown below:

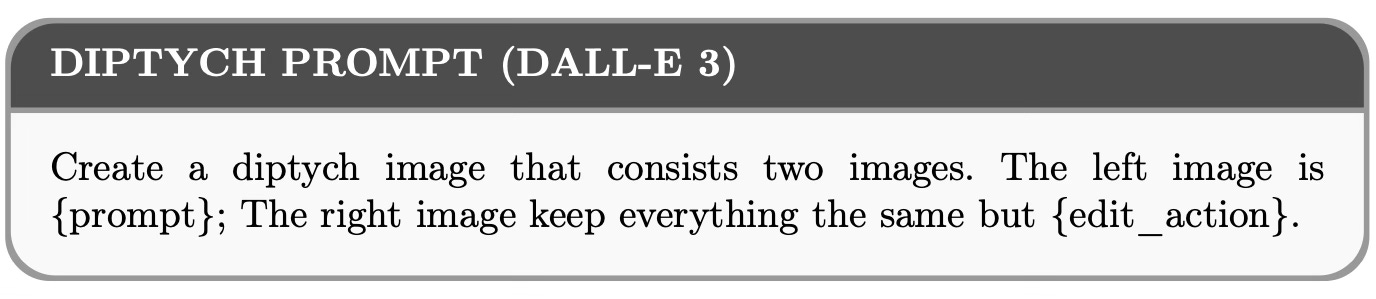

2. Generation - The expanded examples are refined into detailed prompts and fed into DALL-E 3 to generate diptychs, single images that contain the input and output side by side. Generating each pair together as a diptych rather than as two separate images improves alignment and consistency.

3. Post-processing - The diptychs undergo decomposition into image pairs, warping and filtering to precisely align them, and instruction refinement using GPT-4V to rewrite the instructions in more detail. Inverse edits transforming the output back to the input are also generated to double the dataset size.
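The decomposition and inverse-edit steps above can be illustrated with a minimal sketch. It is not the authors' code: it assumes a diptych is simply the input and output placed side by side at equal width, represents images as nested lists of pixel values to stay dependency-free, and takes the inverse instruction as given (in the paper it is produced during instruction refinement). The names `EditSample`, `split_diptych`, and `with_inverse` are hypothetical, and the warping and filtering steps are omitted.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Toy image type (assumption): rows of grayscale pixel values.
Image = List[List[int]]


@dataclass
class EditSample:
    """One editing example: source image, target image, and instruction."""
    source: Image
    target: Image
    instruction: str


def split_diptych(diptych: Image) -> Tuple[Image, Image]:
    """Cut a side-by-side diptych into its left (input) and right (output) halves."""
    if not diptych or not diptych[0]:
        raise ValueError("diptych must contain at least one pixel")
    width = len(diptych[0])
    if any(len(row) != width for row in diptych):
        raise ValueError("all rows must have the same width")
    if width % 2 != 0:
        raise ValueError("diptych width must be even to split cleanly")
    half = width // 2
    left = [row[:half] for row in diptych]
    right = [row[half:] for row in diptych]
    return left, right


def with_inverse(sample: EditSample, inverse_instruction: str) -> List[EditSample]:
    """Return the forward edit plus its inverse (output back to input)."""
    inverse = EditSample(sample.target, sample.source, inverse_instruction)
    return [sample, inverse]


# Toy 2x4 diptych: the left 2x2 block is the input, the right 2x2 block the output.
diptych = [
    [1, 2, 9, 8],
    [3, 4, 7, 6],
]
left, right = split_diptych(diptych)
forward = EditSample(left, right, "add snow to the scene")
pairs = with_inverse(forward, "remove the snow from the scene")
```

Each diptych yields two training samples here, which is how adding inverse edits doubles the number of examples.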

Two new metrics, Alignment and Coherence, are introduced to quantitatively assess the quality of the image edit pairs using GPT-4V. Alignment measures the semantic consistency of the edits with the instructions, while Coherence evaluates the overall aesthetic quality of the edited image.

Results

The resulting HQ-Edit dataset is quite diverse.

Moreover, extensive experiments demonstrate that models trained on HQ-Edit substantially outperform existing methods, even surpassing models fine-tuned on human-annotated data. For example, an InstructPix2Pix model fine-tuned on HQ-Edit achieves state-of-the-art editing performance, increasing Alignment by 12.3 points and Coherence by 5.64 points compared to the base model.

The authors also conduct a human evaluation study showing that the proposed Alignment metric correlates well with human judgment.
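To make the idea of a metric "correlating with" human ratings concrete, here is a small sketch that computes a Pearson correlation coefficient between automatic scores and human ratings. This summary does not say which correlation statistic the authors report, so Pearson is an assumption, and the numbers below are made-up toy values, not results from the paper.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equally long sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Toy values for illustration only (not from the paper).
metric_scores = [20.0, 40.0, 60.0, 80.0]  # hypothetical automatic Alignment scores
human_ratings = [1.0, 2.0, 3.0, 4.0]      # hypothetical human ratings of the same edits
r = pearson(metric_scores, human_ratings)
```

A value of `r` close to 1 would mean the automatic metric ranks edits much as human raters do; a value near 0 would mean the two are essentially unrelated.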

Conclusion

HQ-Edit introduces a high-quality, large-scale dataset for instruction-based image editing. By leveraging powerful foundation models and a novel synthesis pipeline, it obtains image edit pairs with good resolution, detail, and instruction alignment. For more information please consult the full paper or the project page.

Code: https://github.com/UCSC-VLAA/HQ-Edit

Demo: https://huggingface.co/spaces/LAOS-Y/HQEdit

Data: https://huggingface.co/datasets/UCSC-VLAA/HQ-Edit

Congrats to the authors for their work!

Hui, Mude, et al. "HQ-Edit: A High-Quality Dataset for Instruction-based Image Editing." arXiv preprint arXiv:2404.09990 (2024).