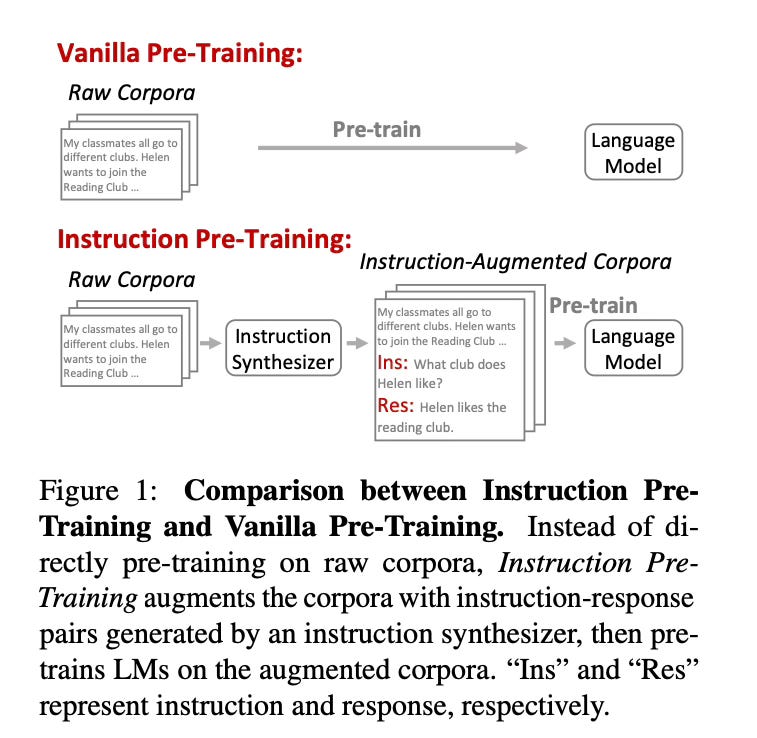

Today's paper introduces Instruction Pre-Training, a new approach to pre-training language models using supervised multitask learning. Instead of training only on raw text corpora, this method augments the pre-training data with instruction-response pairs generated by an instruction synthesizer. This allows the model to learn from diverse tasks during pre-training, enhancing its generalization abilities.

Method Overview

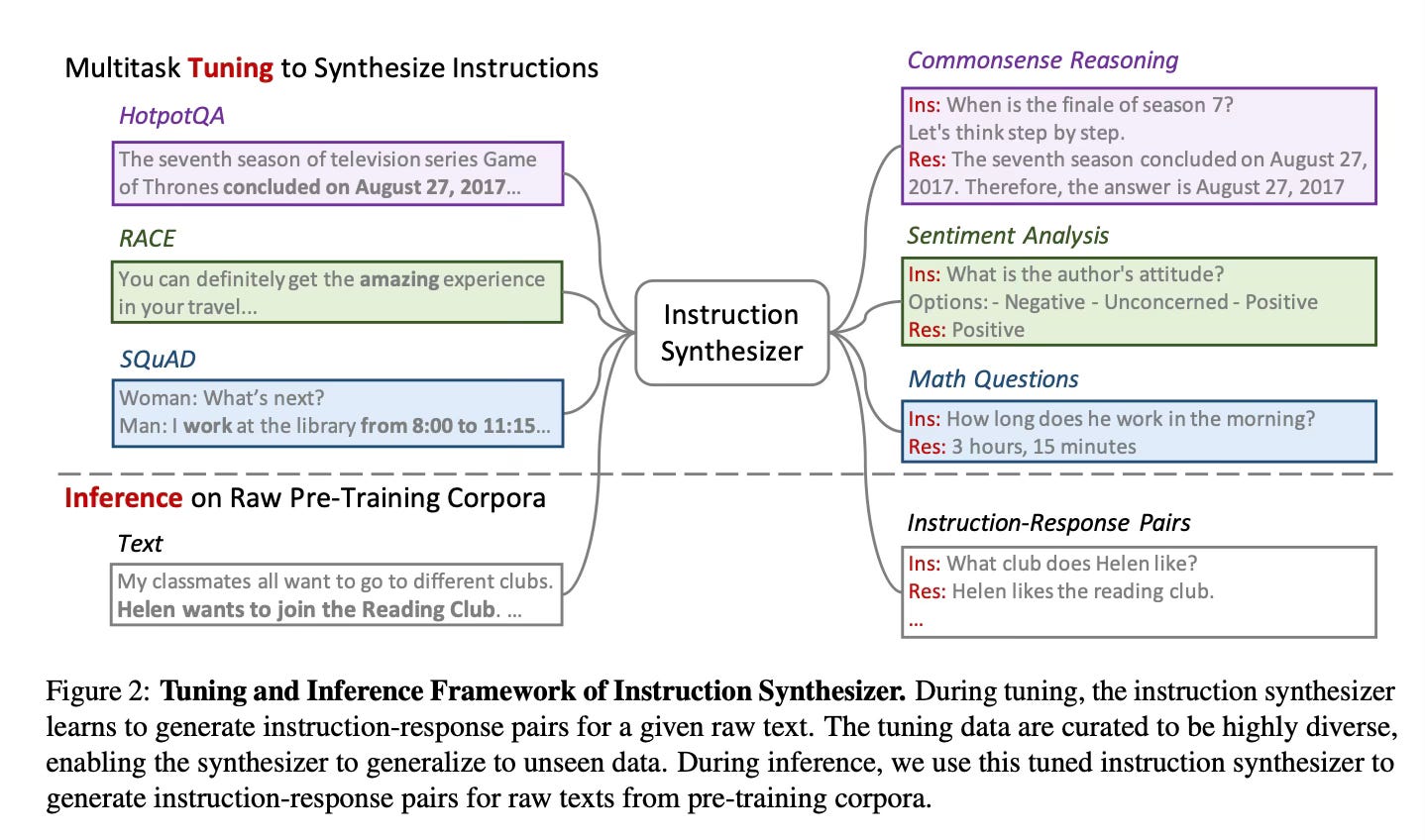

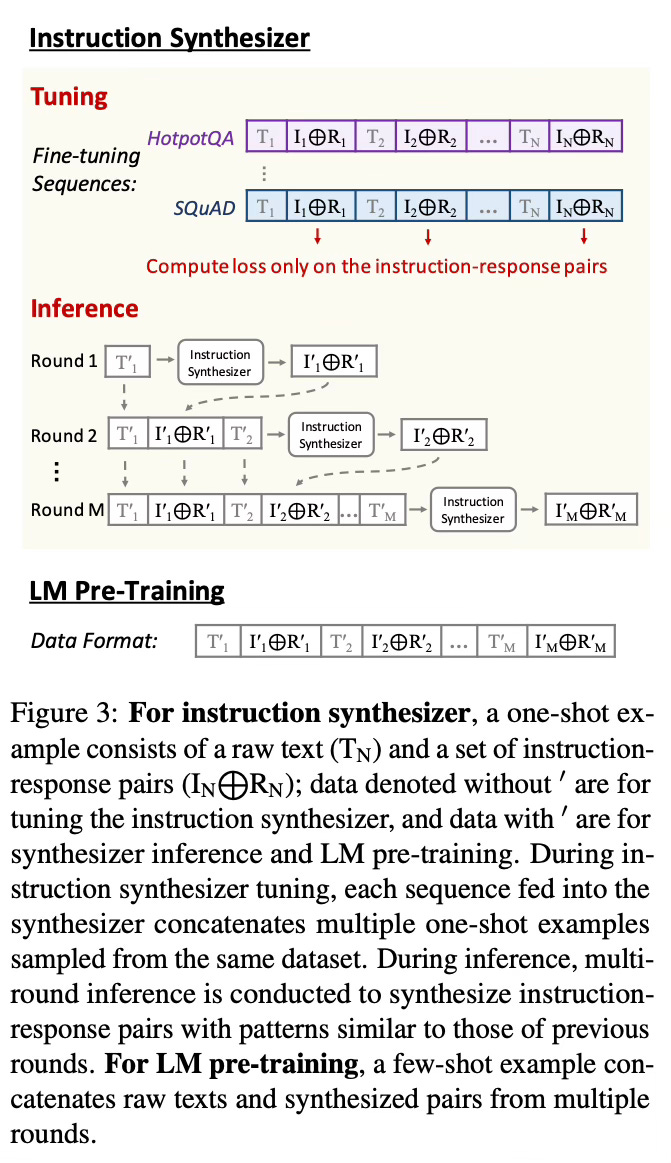

The key idea is to develop an instruction synthesizer that generates instruction-response pairs from raw text. The synthesizer is created by fine-tuning a language model on a diverse collection of context-based task completion datasets. During inference, it takes a piece of raw text as input and outputs multiple instruction-response pairs grounded in that text.

To create the pre-training data, the authors apply this synthesizer to augment a portion of the raw pre-training corpora with generated instruction-response pairs. The augmented data is then mixed with the original raw corpora for pre-training the language model.
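To make the augmentation step concrete, here is a minimal sketch of how one raw text could be combined with its synthesized pairs into a single training document. The `Question:`/`Answer:` template and the `fake_synthesizer` stand-in are assumptions for illustration only; the paper's synthesizer is a fine-tuned language model, and its actual templates may differ.

```python
from typing import List, Tuple

Pair = Tuple[str, str]  # (instruction, response)


def format_augmented_text(raw_text: str, pairs: List[Pair]) -> str:
    """Concatenate a raw text with its instruction-response pairs."""
    parts = [raw_text.strip()]
    for instruction, response in pairs:
        # Hypothetical template, not taken from the paper.
        parts.append(f"Question: {instruction.strip()}\nAnswer: {response.strip()}")
    return "\n\n".join(parts)


def fake_synthesizer(raw_text: str) -> List[Pair]:
    """Stand-in for the fine-tuned synthesizer: returns one canned pair."""
    first_sentence = raw_text.split(".")[0].strip() + "."
    return [("What is the first sentence of the passage?", first_sentence)]


raw = "Aspirin reduces fever. It also thins the blood."
augmented = format_augmented_text(raw, fake_synthesizer(raw))
print(augmented)
```

The resulting string is an ordinary text document, so it can be fed to the same pre-training pipeline as raw text.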

The pre-training process itself remains largely unchanged: the model is trained with next-token prediction on all tokens in the augmented data. This allows it to learn from both the raw text and the synthesized instruction-response pairs.
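The sketch below illustrates what "next-token prediction on all tokens" means in terms of training targets. Instruction-tuning setups often mask out the instruction tokens so that only response tokens contribute to the loss; here the mask is all ones. The function names and the toy token IDs and loss values are assumptions made for illustration.

```python
from typing import List, Tuple


def next_token_examples(token_ids: List[int]) -> Tuple[List[int], List[int], List[int]]:
    """Return (inputs, targets, loss_mask) for one causal LM sequence."""
    inputs = token_ids[:-1]   # the model sees tokens 0..n-2
    targets = token_ids[1:]   # and predicts tokens 1..n-1
    loss_mask = [1] * len(targets)  # every position contributes to the loss
    return inputs, targets, loss_mask


def masked_mean(per_token_losses: List[float], loss_mask: List[int]) -> float:
    """Average the per-token losses over the positions selected by the mask."""
    total = sum(loss * m for loss, m in zip(per_token_losses, loss_mask))
    return total / sum(loss_mask)


tokens = [5, 8, 13, 21, 34]  # toy IDs covering raw text plus synthesized pairs
inputs, targets, mask = next_token_examples(tokens)
toy_losses = [0.5, 1.0, 1.5, 2.0]  # made-up per-token cross-entropy values
print(inputs, targets, mask, masked_mean(toy_losses, mask))
```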

The authors explore this approach for both general pre-training from scratch and domain-adaptive continued pre-training. For general pre-training, they augment about 20% of the raw corpora. For domain-adaptive pre-training, they augment all of the domain-specific data.
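The two settings differ mainly in how much of the corpus is augmented, which can be sketched with a single `fraction` parameter. This is an illustrative sketch, not the authors' pipeline: the function name, the uniform random selection, and the choice to replace each selected document with its augmented version (rather than keep both copies) are all assumptions.

```python
import random
from typing import Callable, List


def build_pretraining_mix(
    raw_texts: List[str],
    augment: Callable[[str], str],
    fraction: float,
    seed: int = 0,
) -> List[str]:
    """Augment a fraction of the documents and shuffle them with the rest."""
    rng = random.Random(seed)
    n_aug = round(fraction * len(raw_texts))
    chosen = set(rng.sample(range(len(raw_texts)), n_aug))
    mixed = [augment(t) if i in chosen else t for i, t in enumerate(raw_texts)]
    rng.shuffle(mixed)
    return mixed


docs = [f"doc {i}" for i in range(10)]
tag = lambda text: text + " [+pairs]"  # placeholder for the real augmentation

general_mix = build_pretraining_mix(docs, tag, fraction=0.2)  # about 20% augmented
domain_mix = build_pretraining_mix(docs, tag, fraction=1.0)   # everything augmented
print(sum(t.endswith("[+pairs]") for t in general_mix), len(general_mix))
```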

Results

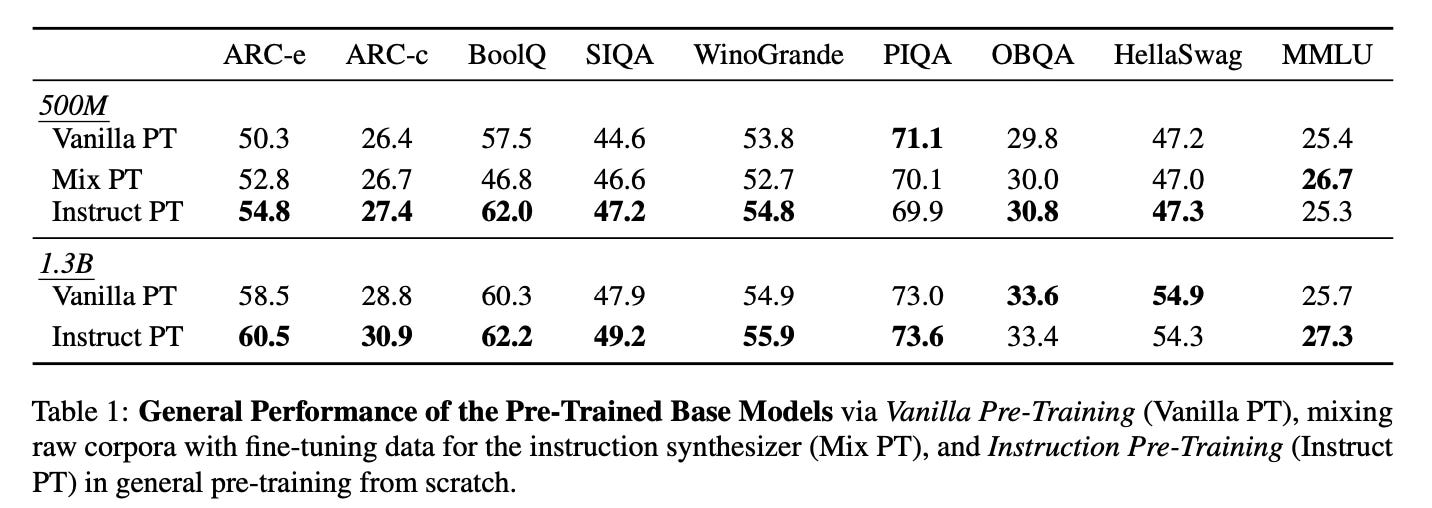

The results show that Instruction Pre-Training consistently outperforms standard pre-training across different model sizes and pre-training scenarios:

In general pre-training from scratch, a 500M parameter model trained on 100B tokens with Instruction Pre-Training matches the performance of a 1B parameter model trained on 300B tokens with standard pre-training.
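For a rough sense of scale, the two training budgets can be compared with the common rule of thumb that training costs about 6 FLOPs per parameter per token. That approximation is an assumption brought in here, not a figure from the paper, and it ignores the cost of running the instruction synthesizer.

```python
def approx_training_flops(n_params: float, n_tokens: float) -> float:
    """Rule-of-thumb estimate: about 6 FLOPs per parameter per training token."""
    return 6.0 * n_params * n_tokens


small = approx_training_flops(500e6, 100e9)  # 500M parameters, 100B tokens
large = approx_training_flops(1e9, 300e9)    # 1B parameters, 300B tokens
ratio = large / small
print(f"{small:.1e} vs {large:.1e} FLOPs, ratio ~{ratio:.1f}x")
```

Under this approximation, the smaller model uses roughly one sixth of the training compute of the larger one.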

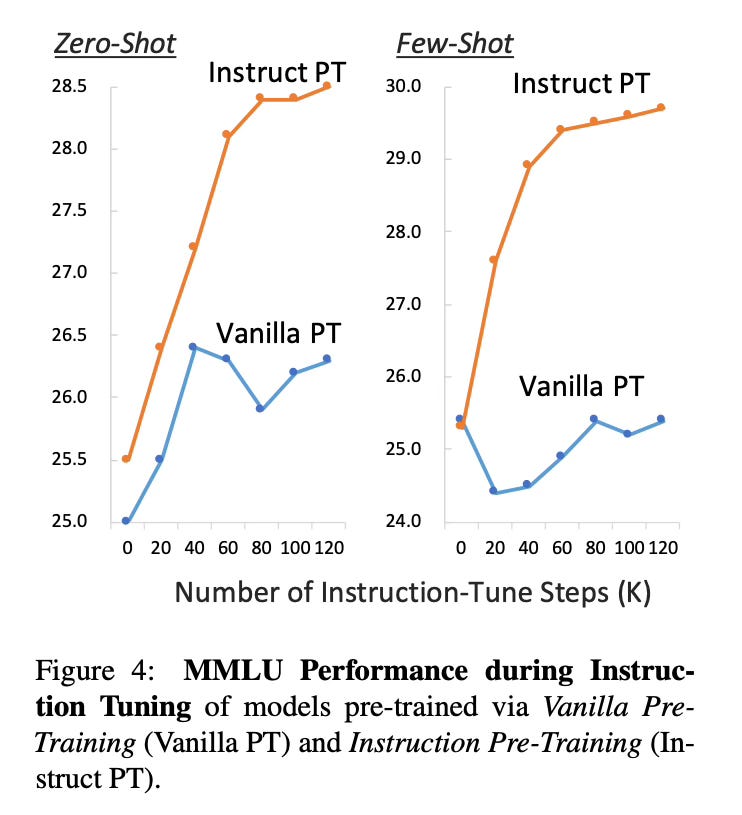

Models pre-trained with this method also benefit more from subsequent instruction tuning, showing faster and more stable improvements.

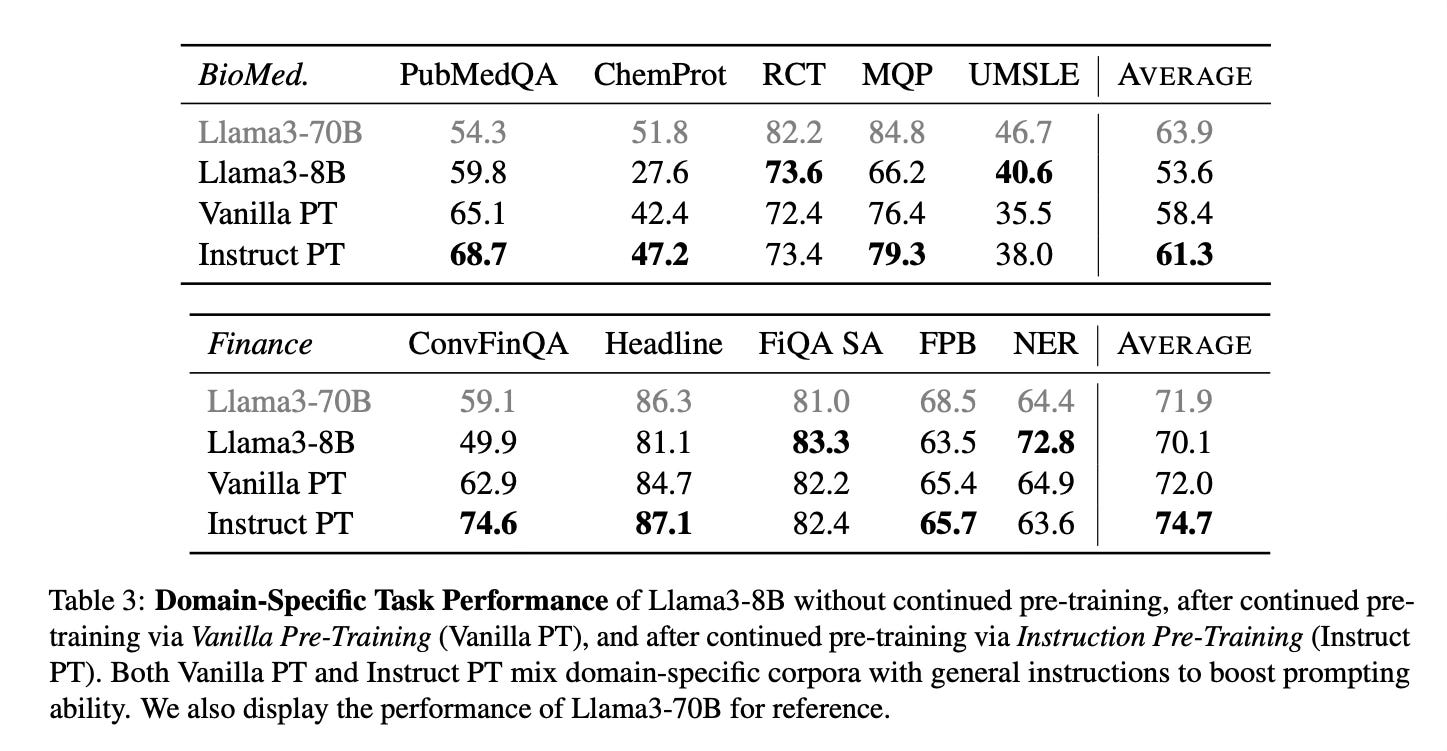

In domain-adaptive continued pre-training, Llama3-8B trained with this approach matches or exceeds the performance of Llama3-70B on domain-specific tasks.

Conclusion

Instruction Pre-Training demonstrates the potential of incorporating supervised multitask learning into the pre-training stage of language models. By augmenting raw corpora with diverse synthesized tasks, this approach improves model generalization and downstream task performance. The results suggest this is a promising direction for language model pre-training, though further research is needed to address potential limitations such as hallucination in the synthetic data. For more information, please consult the full paper.

Congrats to the authors for their work!

Cheng, Daixuan, et al. "Instruction Pre-Training: Language Models are Supervised Multitask Learners." arXiv preprint arXiv:2406.14491 (2024).
