Make Your LLM Fully Utilize the Context

Today’s paper introduces INformation-INtensive (IN2) training, a data-driven approach to overcome the "lost-in-the-middle" challenge faced by long-context large language models (LLMs). While LLMs can process lengthy inputs, they often struggle to fully utilize information in the middle of the long context. The authors hypothesize this stems from insufficient explicit supervision during long-context training.

Method Overview

IN2 training leverages a synthesized long-context question-answer dataset to explicitly teach the model that important information can be present throughout the context, not just at the beginning and end.

The training data is constructed from a general natural language corpus. For each raw text, a question-answer pair is generated using a powerful LLM like GPT-4. The answer requires information from short segments randomly placed in a synthesized long context of 4K-32K tokens.

Two types of question-answer pairs are generated:

1. Requiring fine-grained information awareness on a single 128-token segment

2. Requiring integration and reasoning of information from two or more segments

The authors apply IN2 finetuning to the Mistral-7B-Instruct model to create FILM-7B. The training data contains a balanced mix of the two question-answer types above, some short-context QA data, and general instruction-tuning data.

Results

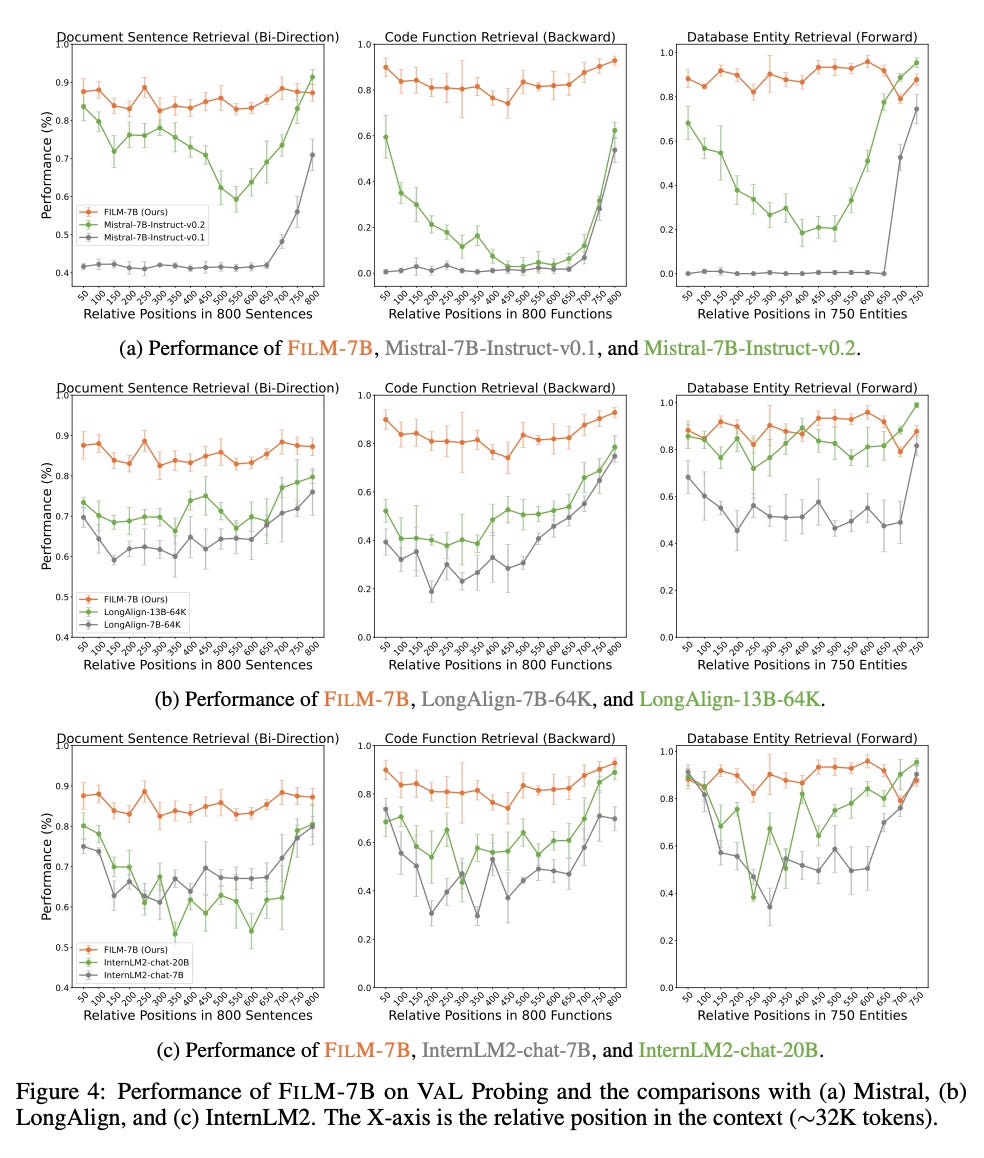

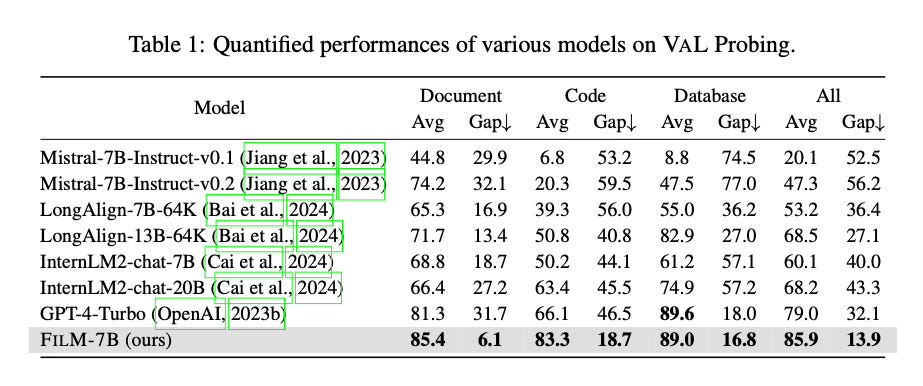

The authors design three tasks to assess FILM-7B's long-context information awareness:

1. Document Sentence Retrieval (bi-directional retrieval) - “the context consist of numerous natural language sentences, and the instruction aims to retrieve a single sentence containing a given piece.”

2. Code Function Retrieval (backward retrieval) - “the contexts consist of Python functions, and the instruction aims to retrieve the function name for a given line of code within the function definition.”

3. Database Entity Retrieval (forward retrieval) - “the contexts contain lists of structured entities, each with three fields: ID, label, and description. The query aims to retrieve the label and description for a given ID.”

The results show that IN2 training helps overcoming the lost-in-the-middle problem compared to the Mistral-7B backbone. FILM-7B exhibits robust performance across different positions in the 32K context window, even outperforming GPT-4 in some cases.

Conclusion

IN2 training is an effective data-driven approach to make LLMs fully utilize information throughout a long context. By applying it to create FILM-7B, the method improves the performance in long-context scenarios. For more information please consult the full paper.

Congrats to the authors for their work!

An, Shengnan, et al. "Make Your LLM Fully Utilize the Context." arXiv preprint arXiv:2404.16811 (2024).