Many-Shot In-Context Learning in Multimodal Foundation Models

Today's paper explores the potential of many-shot in-context learning (ICL) for multimodal foundation models. It investigates how increasing the number of examples in the prompt can improve the performance of these models on various tasks across different domains.

Method Overview

The study evaluates the performance of two state-of-the-art multimodal foundation models, GPT-4o and Gemini 1.5 Pro, on 10 datasets spanning natural imagery, medical imagery, remote sensing, and molecular imagery. The tasks include multi-class, multi-label, and fine-grained classification.

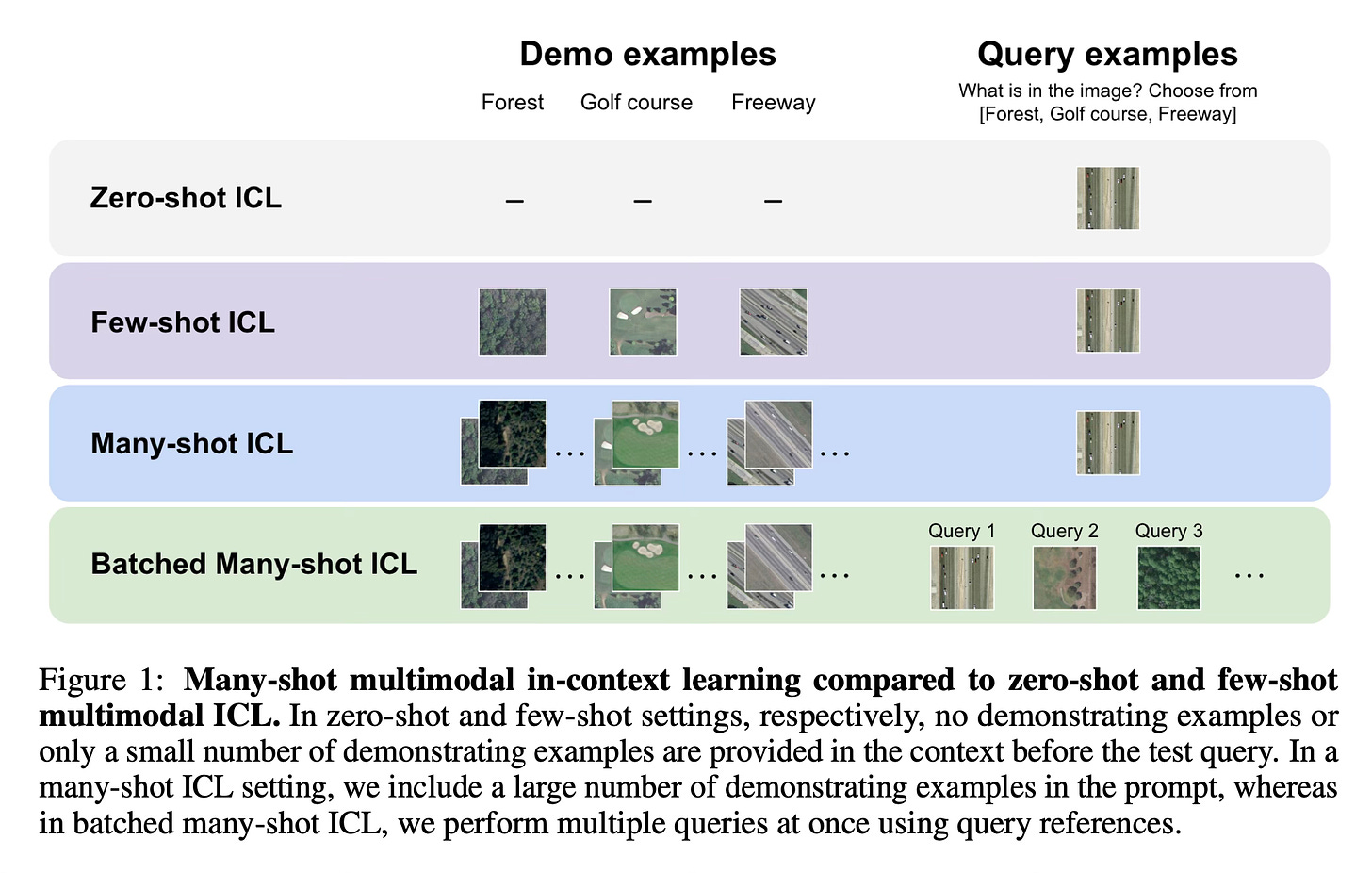

The key idea is to use the long context windows of these models, which hold on the order of a hundred thousand tokens or more, to place a large number of demonstrating examples (or "shots") in the prompt before the test query. This is referred to as "many-shot ICL".

In the traditional few-shot ICL setting, only a small number of demonstrating examples (typically fewer than 100) are included in the prompt. With many-shot ICL, the authors instead scale the number of demonstrating examples by orders of magnitude, up to almost 2,000 multimodal examples.
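To make the prompt layout concrete, here is a minimal sketch of how a many-shot multimodal prompt could be assembled: an instruction, then interleaved image and label pairs, then the query image. This is not the authors' prompt format. The `Demo` class, the `build_many_shot_prompt` function, the part dictionaries, and the file paths are all hypothetical, and the provider-neutral parts would still need to be converted to whatever format a given model API expects.

```python
import random
from dataclasses import dataclass


@dataclass
class Demo:
    image_path: str  # hypothetical path to a demonstration image
    label: str       # ground-truth class name for that image


def build_many_shot_prompt(demos, query_image, class_names, num_shots, seed=0):
    """Return a provider-neutral list of prompt parts (illustrative format only)."""
    rng = random.Random(seed)
    # Sample without replacement; cap at the number of available demonstrations.
    shots = rng.sample(demos, k=min(num_shots, len(demos)))

    parts = [{
        "type": "text",
        "text": "Classify each image. Options: " + ", ".join(class_names),
    }]
    # Interleave each demonstration image with its answer.
    for demo in shots:
        parts.append({"type": "image", "path": demo.image_path})
        parts.append({"type": "text", "text": f"Answer: {demo.label}"})

    # The test query goes last, with the answer left open for the model.
    parts.append({"type": "image", "path": query_image})
    parts.append({"type": "text", "text": "Answer:"})
    return parts
```

Moving from few-shot to many-shot in this sketch only changes `num_shots`; the practical limit is how many image and text parts fit in the model's context window.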

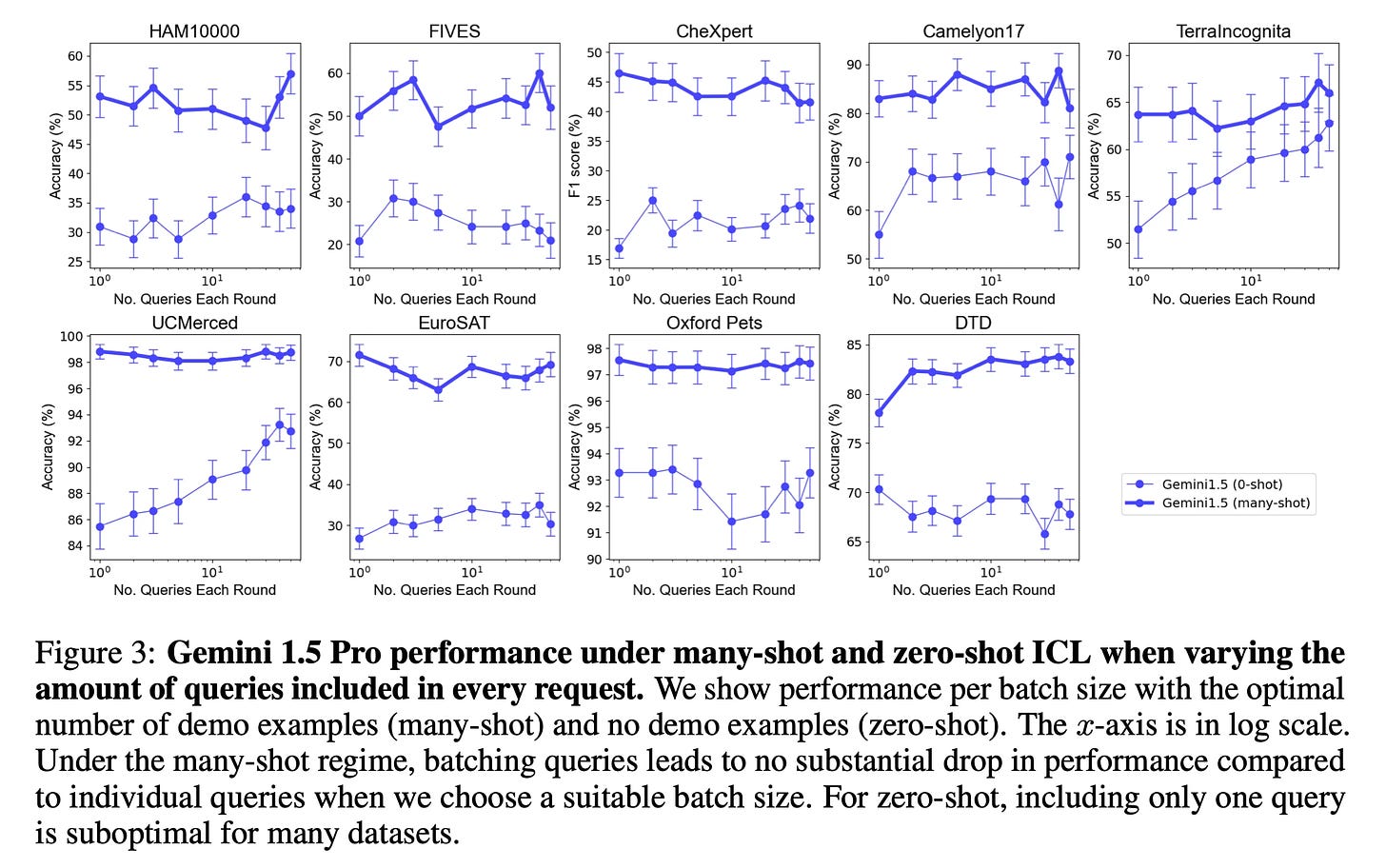

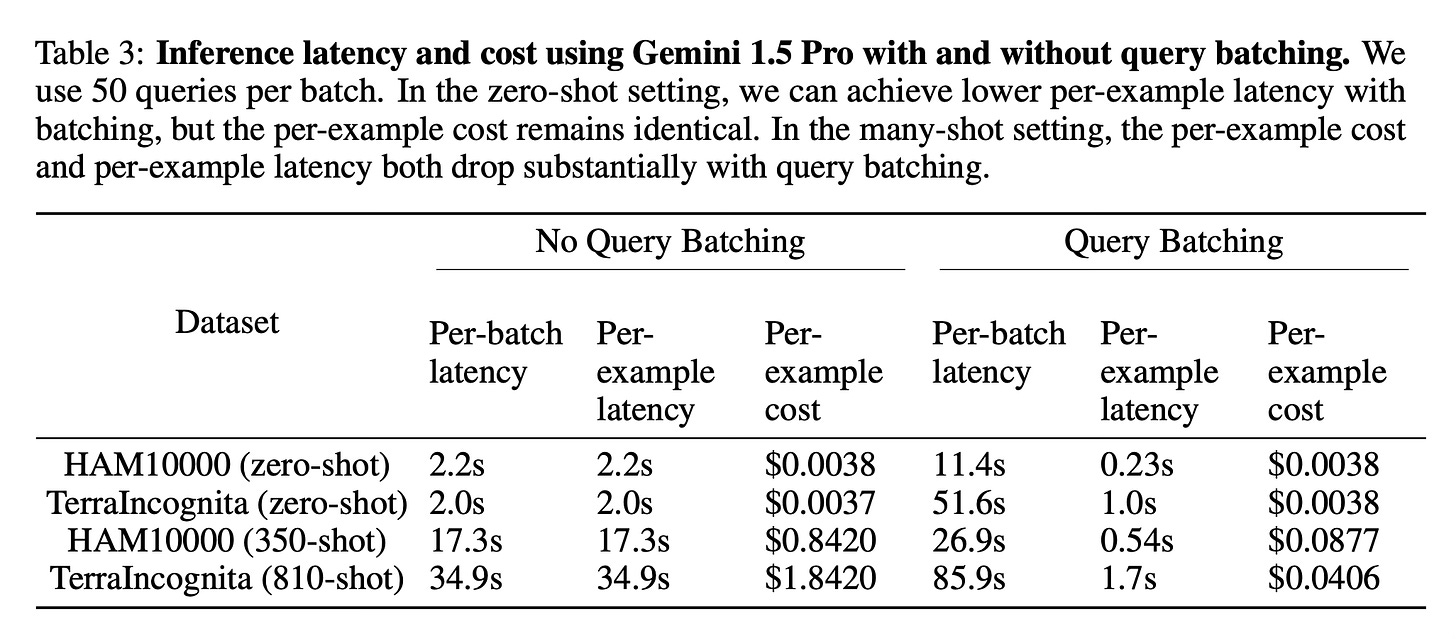

The study also investigates the effect of batching multiple queries into a single API call. This approach can potentially improve performance while reducing per-query cost and latency, which matters for many-shot ICL because the long demonstration prompt would otherwise be resent with every query.
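The following sketch illustrates the batching idea under stated assumptions: several queries share one set of demonstrations, the model is asked to reply with numbered answers, and the demonstration tokens are amortized across the batch. The question wording, the `Answer i: <label>` reply format, and all function names are hypothetical and not taken from the paper. The token counts in the usage note are made-up numbers for illustration.

```python
import re


def build_batched_query_text(num_queries):
    """Text that follows the query images and asks for one numbered answer each."""
    lines = [
        f"Question {i}: What is the label of query image {i}?"
        for i in range(1, num_queries + 1)
    ]
    lines.append("Reply with one line per question in the form 'Answer i: <label>'.")
    return "\n".join(lines)


def parse_batched_answers(response_text, num_queries):
    """Map 'Answer i: label' lines back to queries; missing answers stay None."""
    answers = [None] * num_queries
    for match in re.finditer(r"Answer\s+(\d+)\s*:\s*(.+)", response_text):
        index = int(match.group(1))
        if 1 <= index <= num_queries:
            answers[index - 1] = match.group(2).strip()
    return answers


def input_tokens_per_query(demo_tokens, query_tokens, batch_size):
    """Demonstration tokens are paid once per call and shared by the whole batch."""
    return demo_tokens / batch_size + query_tokens
```

For example, with a hypothetical 100,000 demonstration tokens and 300 tokens per query, `input_tokens_per_query(100_000, 300, 1)` gives 100,300 input tokens per query, while a batch of 50 brings that down to 2,300. Leaving unanswered slots as `None` makes it easy to detect when the model skips or misnumbers a query.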

Results

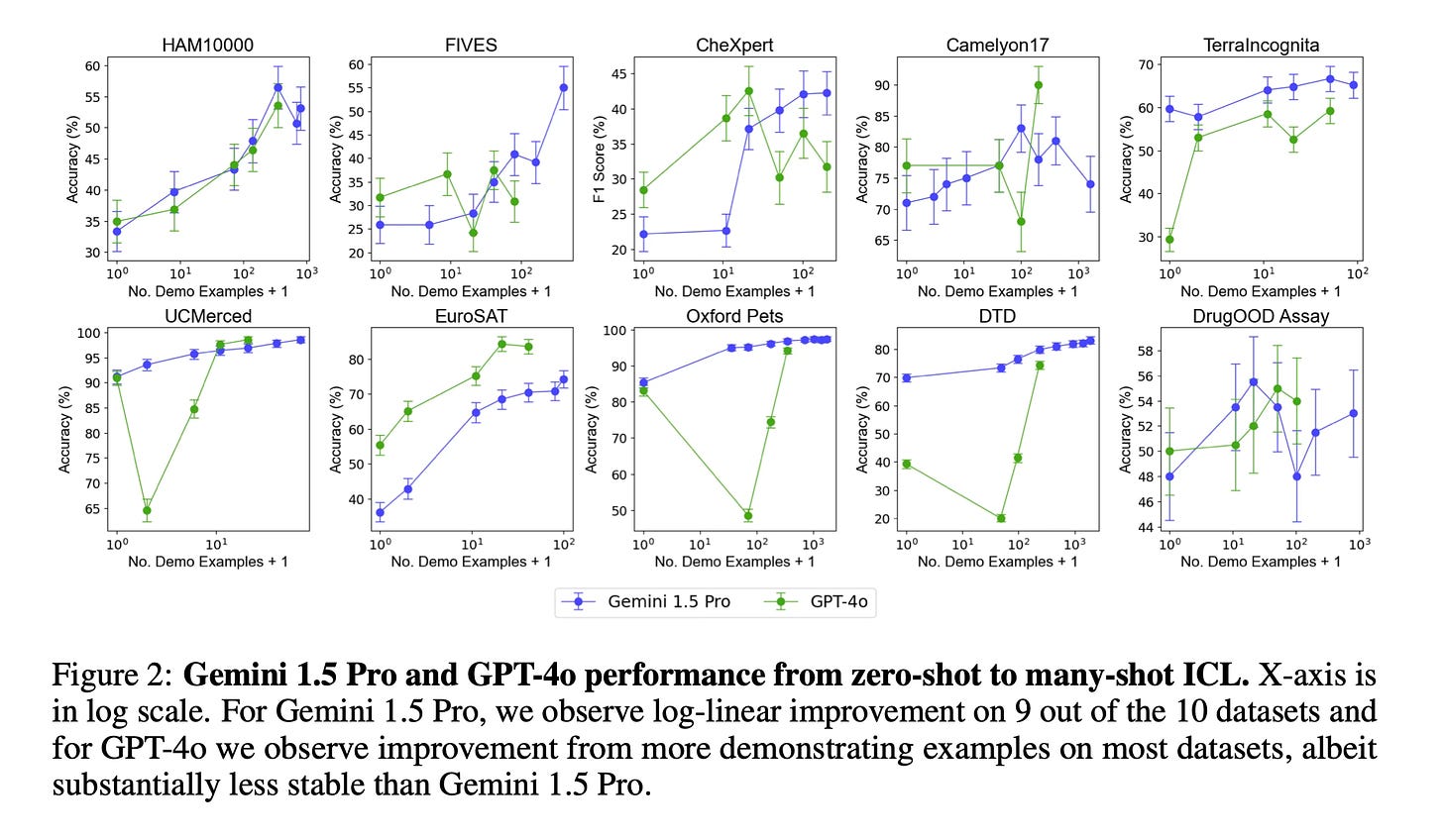

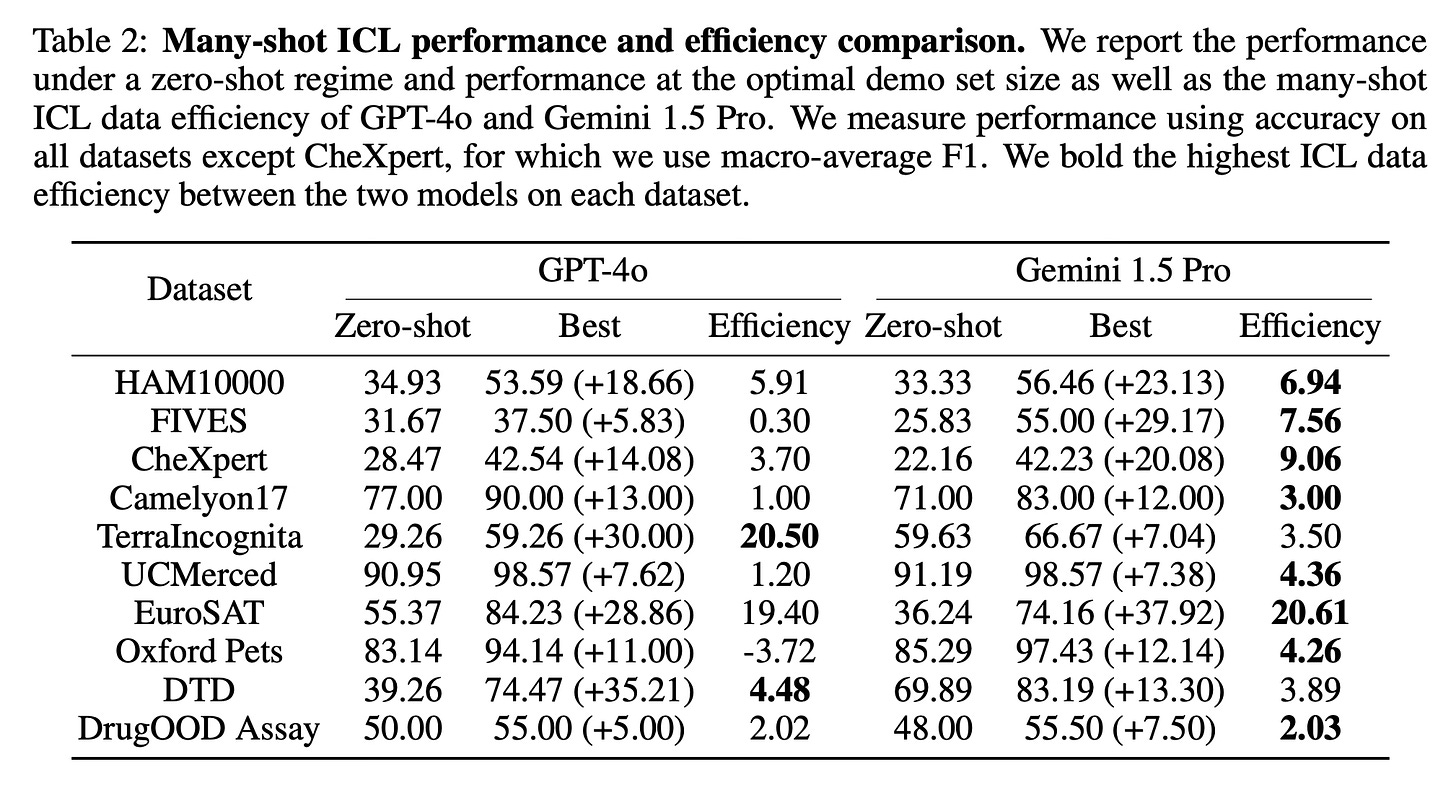

The experiments reveal that many-shot ICL leads to substantial performance improvements compared to few-shot ICL (with fewer than 100 examples) across all datasets. Notably, the performance of Gemini 1.5 Pro generally improves log-linearly as the number of demonstrating examples increases, while GPT-4o exhibits less stable improvements.

Furthermore, the authors measure the data efficiency of the models under ICL, which represents the rate at which the models learn from additional demonstrating examples. They find that Gemini 1.5 Pro exhibits higher ICL data efficiency than GPT-4o on most datasets, despite both models achieving similar zero-shot performance.
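One way to quantify a log-linear trend and a rate of learning from additional examples is to fit a straight line to performance against the logarithm of the number of shots and read off the slope. The sketch below does this with ordinary least squares. Using `log10(n + 1)` as the x-axis (so the zero-shot point can be included) and treating the slope as the efficiency measure are assumptions made here for illustration; consult the paper for the authors' exact definition. The function name is hypothetical.

```python
import math


def fit_log_linear(num_shots, scores):
    """Least-squares fit of score = intercept + slope * log10(num_shots + 1).

    Returns (slope, intercept). A larger slope means the score rises faster
    per tenfold increase in demonstrating examples.
    """
    xs = [math.log10(n + 1) for n in num_shots]
    count = len(xs)
    mean_x = sum(xs) / count
    mean_y = sum(scores) / count
    # Standard closed-form simple linear regression.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, scores))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept
```

Under this framing, two models with similar zero-shot performance would have similar intercepts, and the comparison between them would come down to the slope. The fit needs at least two distinct shot counts, since `sxx` is zero otherwise.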

Regarding batched many-shot ICL, the results show that batching up to 50 queries can improve performance in both the zero-shot and many-shot ICL settings while reducing per-query cost and latency.

Conclusion

The paper shows that many-shot ICL lets multimodal foundation models adapt to new tasks and domains from examples in the prompt alone, and it highlights the importance of long context windows and batched inference for practical applications. For more information, please consult the full paper.

Congrats to the authors for their work!

Jiang, Yixing, et al. "Many-Shot In-Context Learning in Multimodal Foundation Models." ArXiv, 16 May 2024, https://arxiv.org/abs/2405.09798v1.