McEval: Massively Multilingual Code Evaluation

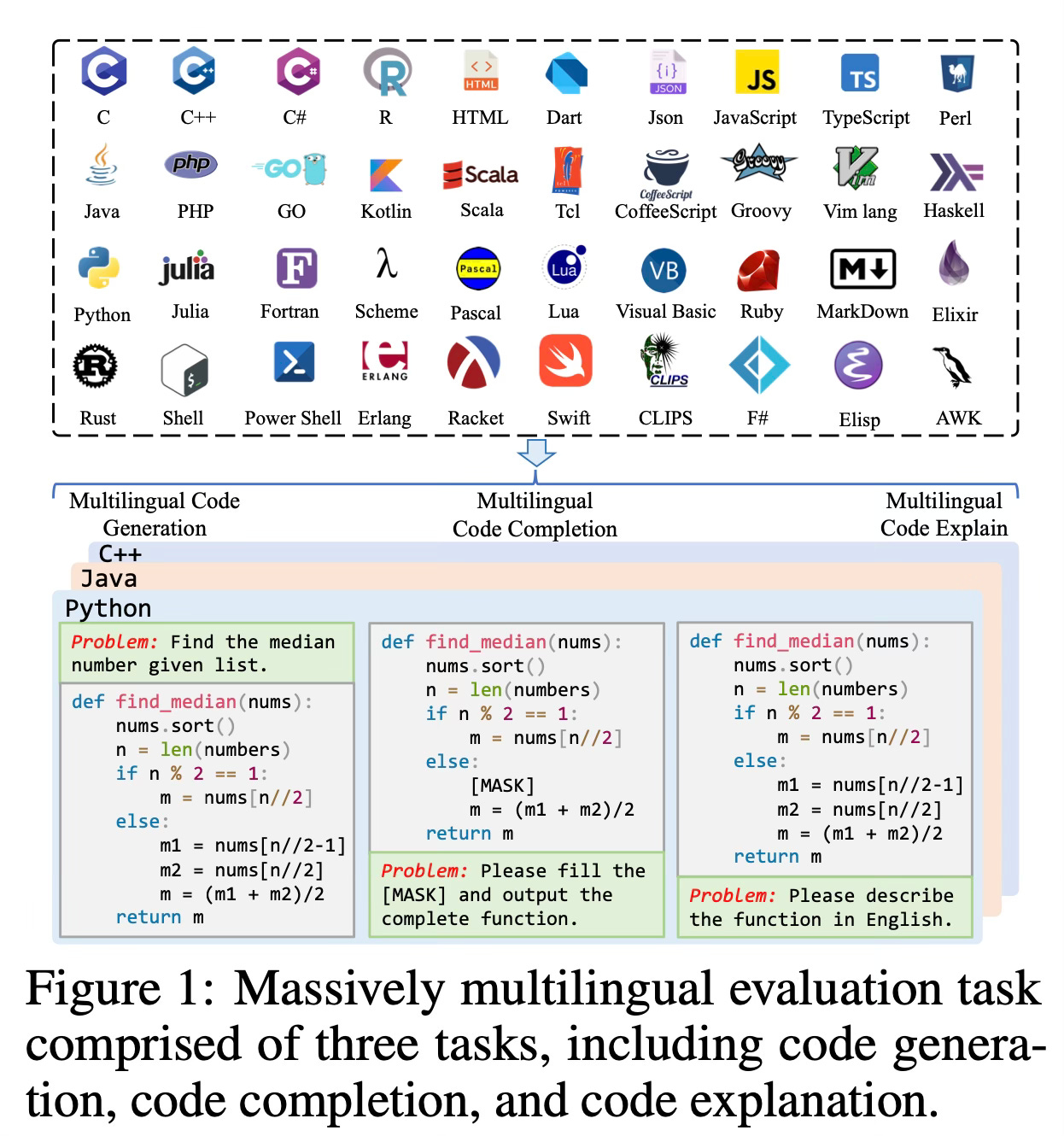

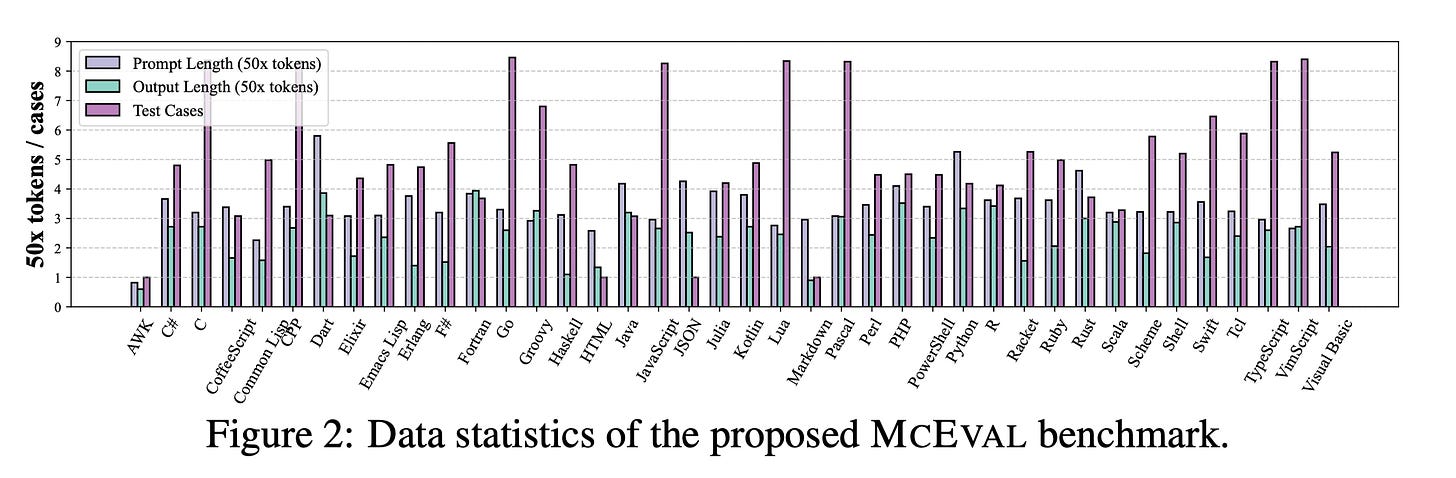

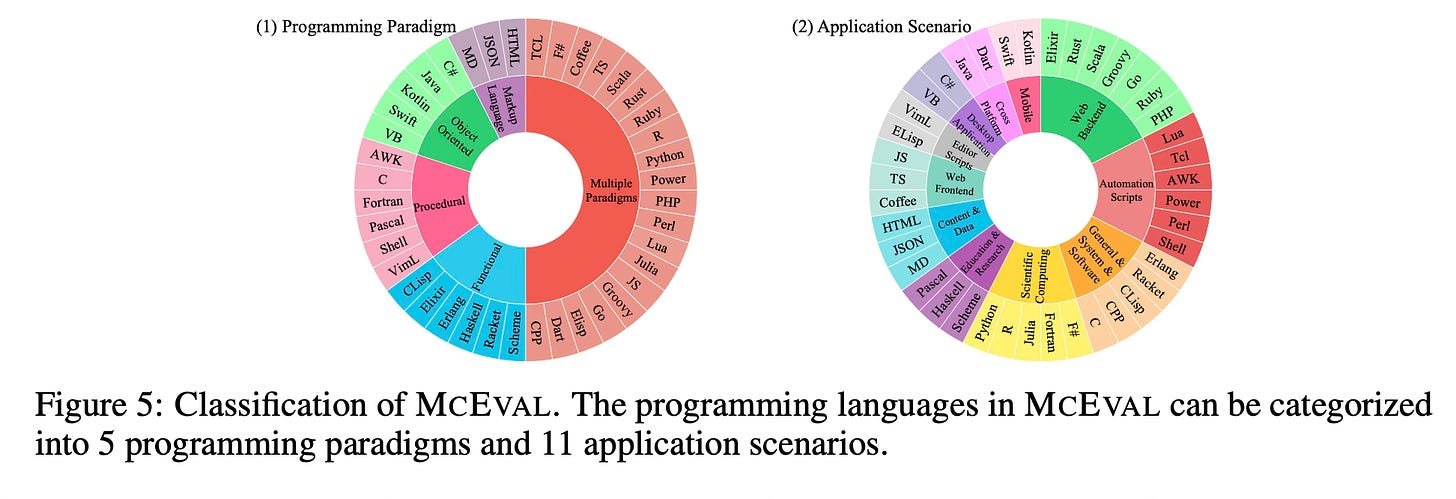

Today's paper introduces MCEVAL, a massively multilingual code evaluation benchmark covering 40 programming languages with 16K test samples. It aims to advance research on code LLMs in multilingual scenarios by providing challenging code completion, understanding, and generation tasks, together with a finely curated, massively multilingual instruction corpus.

Overview

The MCEVAL benchmark is designed to evaluate the performance of code LLMs in multilingual programming tasks. It consists of two main components: the MCEVAL-INSTRUCT corpus and the MCEVAL evaluation set.

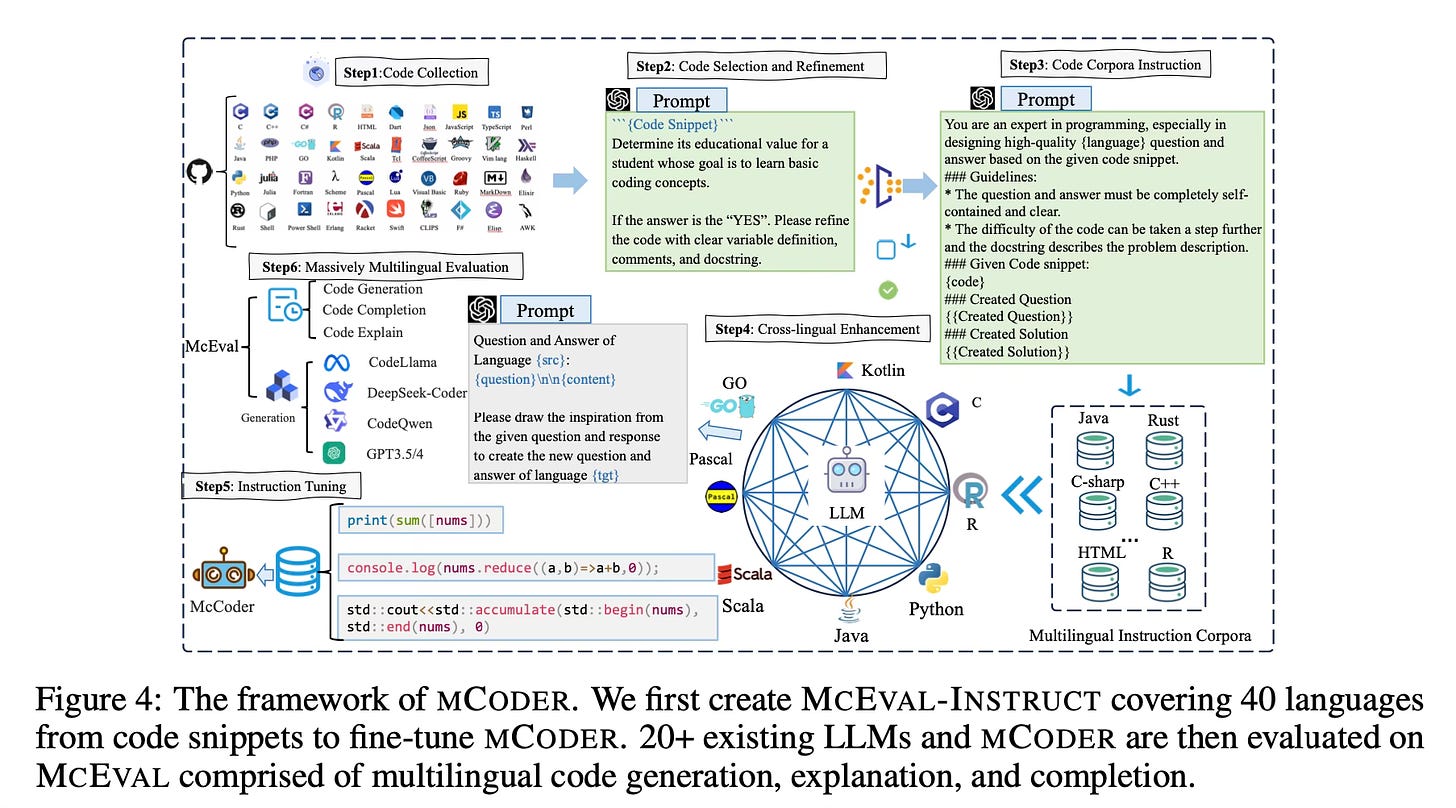

The MCEVAL-INSTRUCT corpus is a finely curated, massively multilingual instruction corpus that serves as the training data for code LLMs. It contains programming problems and their corresponding solutions in 40 different programming languages. The problems cover a wide range of topics and difficulty levels, giving a diverse and challenging dataset for training. To construct this training split, gpt-4-1106-preview is used to generate a problem statement and a solution from a given code snippet. The prompt used is:

“You are an expert in programming, especially in designing high-quality language question and answer based on the given code snippet.\n\n ### Guidelines: * The question and answer must be completely self-contained and clear.*\n The difficulty of the code can be taken a step further and the docstring describes the problem description.\n ### Given Code snippet: code\n ### Created Question Created Question\n ### Created Solution\n Created Solution”
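As a rough illustration of how such a prompt could be used, the sketch below fills a template with a code snippet and splits a model response into a question and a solution. It is not the authors' pipeline: the `{code}` placeholder, the helper names `build_instruct_prompt` and `split_response`, and the assumption that the response repeats the "### Created Question" and "### Created Solution" markers are all hypothetical. No model is called here.

```python
from typing import Tuple

# Hypothetical template based on the prompt quoted above.
# The {code} placeholder is an assumption made for this sketch.
PROMPT_TEMPLATE = (
    "You are an expert in programming, especially in designing high-quality "
    "language question and answer based on the given code snippet.\n\n"
    "### Guidelines:\n"
    "* The question and answer must be completely self-contained and clear.\n"
    "* The difficulty of the code can be taken a step further and the "
    "docstring describes the problem description.\n"
    "### Given Code snippet:\n{code}\n"
    "### Created Question\n"
)


def build_instruct_prompt(code_snippet: str) -> str:
    """Insert a seed code snippet into the (hypothetical) template."""
    return PROMPT_TEMPLATE.format(code=code_snippet.strip())


def split_response(response: str) -> Tuple[str, str]:
    """Split a model response into (question, solution).

    Assumes the response contains the two section markers; this output
    format is an assumption, not something the source specifies.
    """
    question_part, _, solution = response.partition("### Created Solution")
    question = question_part.replace("### Created Question", "", 1)
    return question.strip(), solution.strip()
```

In a real pipeline the string returned by `build_instruct_prompt` would be sent to the model, and the generated pairs would still need filtering before being used as training data.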

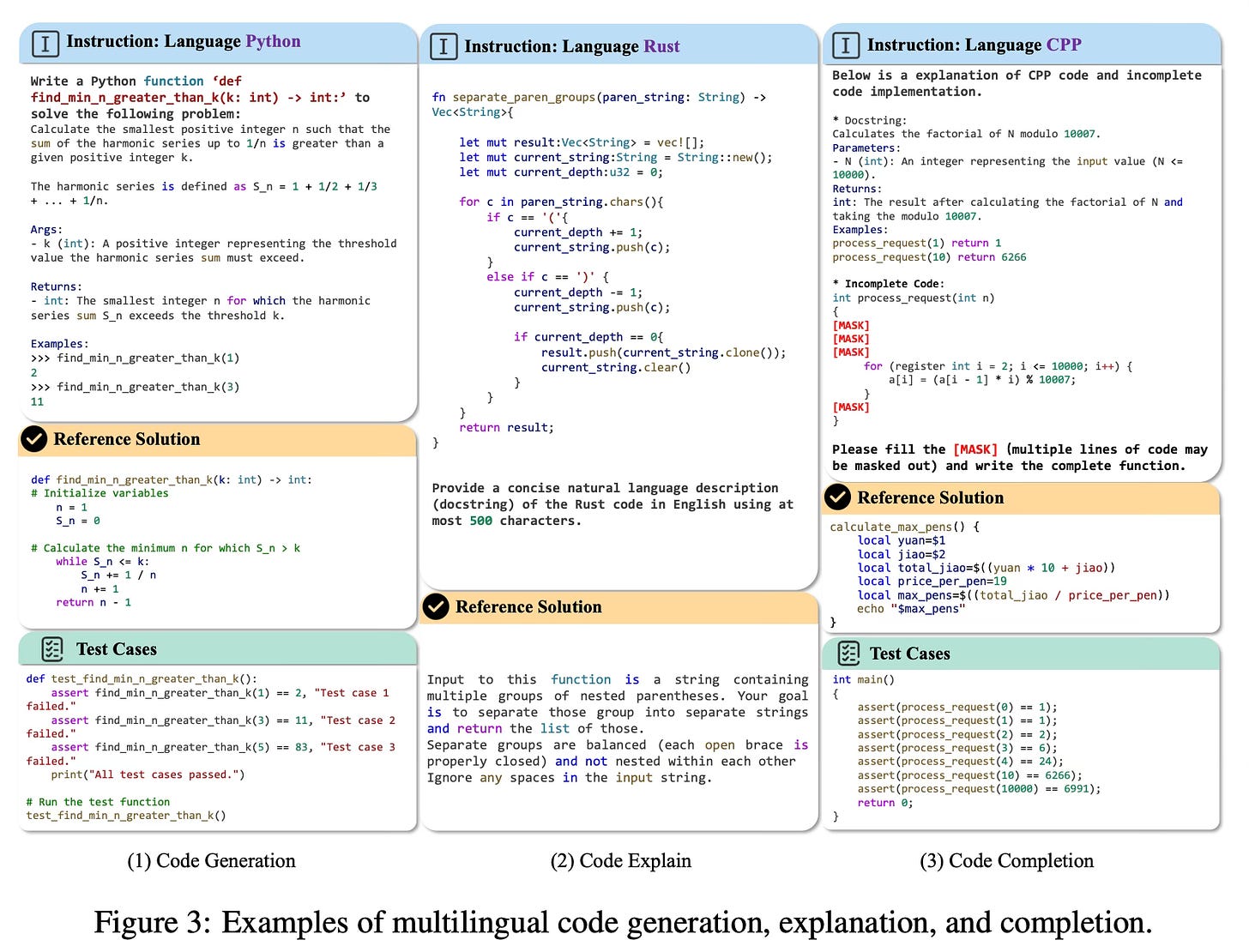

The MCEVAL evaluation set is a collection of test samples used to assess the performance of code LLMs. It includes code completion, understanding, and generation tasks in the 40 programming languages covered by the MCEVAL-INSTRUCT corpus. The evaluation set is designed to test a model's ability to understand natural language instructions, generate correct and efficient code, and handle a variety of programming concepts and constructs. To create it, the multilingual code samples are annotated through a comprehensive, systematic human annotation procedure.

The authors also introduce an effective multilingual coder called MCODER, which is trained on the MCEVAL-INSTRUCT corpus. MCODER is capable of generating code in multiple programming languages based on the provided instructions.

The evaluation process involves running the code generated by the LLMs on the test samples in the MCEVAL evaluation set and comparing the outputs against the expected results. The performance is measured using metrics such as execution success rate, code quality, and efficiency.
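The execution-based check described above can be sketched roughly as follows. This is a minimal illustration for Python samples only, not the benchmark's actual harness: the function names, the `generation` and `tests` field names, and the timeout value are assumptions, and a real multilingual setup would need a separate toolchain and sandbox for each language.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Dict, List


def passes_tests(generated_code: str, test_code: str, timeout_s: float = 10.0) -> bool:
    """Run generated code followed by its assert-based tests in a subprocess.

    A sample counts as passing if the process exits with status 0
    within the timeout. Untrusted code should be sandboxed in practice.
    """
    program = generated_code + "\n\n" + test_code + "\n"
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "candidate.py"
        path.write_text(program, encoding="utf-8")
        try:
            result = subprocess.run(
                [sys.executable, str(path)],
                capture_output=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            return False
    return result.returncode == 0


def pass_rate(samples: List[Dict[str, str]]) -> float:
    """Fraction of samples whose generated code passes its tests."""
    if not samples:
        return 0.0
    passed = sum(passes_tests(s["generation"], s["tests"]) for s in samples)
    return passed / len(samples)
```

For example, calling `pass_rate` on a list of dictionaries with `generation` and `tests` strings returns the share of samples whose tests run without error, which corresponds to the execution success rate mentioned above.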

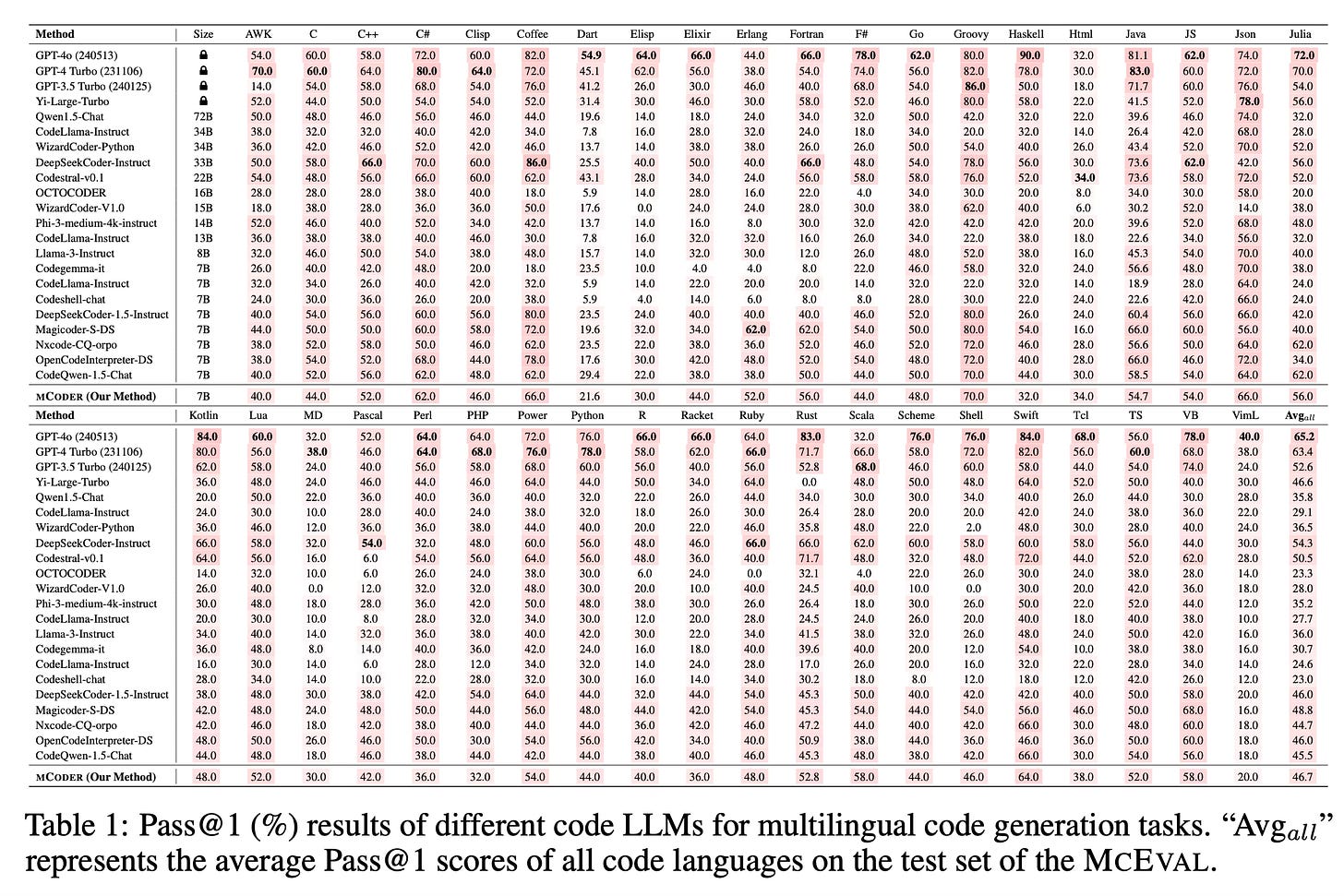

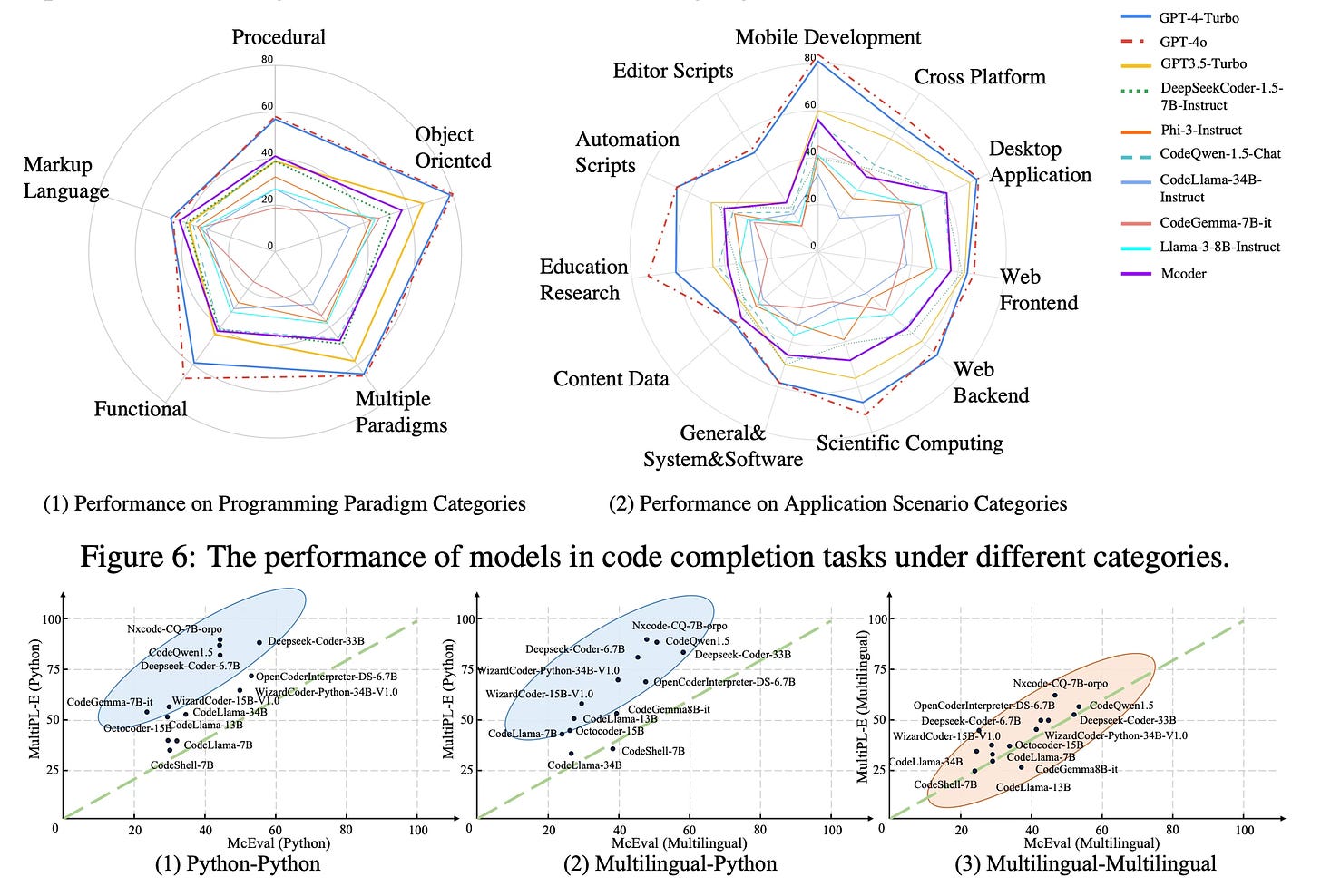

Results

Extensive experiments on the MCEVAL benchmark compare open-source models with closed-source LLMs (e.g., GPT-series models) across numerous programming languages. The results highlight both the challenges and the opportunities in developing multilingual code LLMs, and show that a significant gap remains between open-source models and closed-source LLMs in many languages.

Conclusion

The MCEVAL benchmark and the MCEVAL-INSTRUCT corpus provide a valuable resource for the research community to evaluate and improve the performance of code LLMs in multilingual programming tasks. The authors' efforts in creating this comprehensive benchmark and introducing the MCODER model contribute to advancing the field of code generation and understanding. For more information, please consult the full paper.

Congrats to the authors for their work!

Chai, Linzheng, et al. "MCEVAL: Massively Multilingual Code Evaluation." arXiv preprint arXiv:2406.07436 (2024).