Megalodon: Efficient LLM Pretraining and Inference with Unlimited Context Length

Today’s paper introduces MEGALODON, an efficient neural architecture for sequence modeling with unlimited context length. It is an improved version of the MEGA (exponential moving average with gated attention) architecture, with several new technical components to enhance capability, stability, and scalability for large-scale long-context pretraining.

Method Overview

MEGALODON introduces the following components:

1. Complex Exponential Moving Average (CEMA): Extends the multi-dimensional damped EMA from MEGA to the complex domain, improving the model's ability to capture long-range dependencies.

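2. Timestep Normalization Layer: Generalizes group normalization to auto-regressive sequence modeling by computing the cumulative mean and variance along the timestep dimension. Because the statistics at each position depend only on the current and earlier timesteps, the layer can normalize along the sequence without leaking information from future tokens.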

3. Normalized Attention: Proposes a normalized attention mechanism specifically for MEGALODON to improve stability during training. It normalizes the shared representation before computing attention scores.

4. Pre-Norm with Two-Hop Residual: A new normalization configuration that rearranges the residual connections in each block to mitigate the instability issue of pre-normalization when scaling up model size.

5. 4D Parallelism for Distributed Training: In addition to data, tensor, and pipeline parallelism, MEGALODON can be parallelized along the timestep/sequence dimension by chunking input sequences into fixed blocks. This enables efficient distributed training for long-context pretraining.
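To make the cumulative statistics in item 2 concrete, here is a minimal sketch of timestep normalization for a single scalar feature. It is an illustration under stated assumptions, not the authors' implementation: the function name `timestep_norm` and the `eps` value are hypothetical, and the sketch leaves out the feature grouping and any learnable scale or shift that a full layer would likely include.

```python
import math


def timestep_norm(xs, eps=1e-5):
    """Normalize each x_t with the mean and variance of x_1..x_t only.

    Illustrative sketch: one scalar feature, no learnable parameters.
    """
    out = []
    total = 0.0     # running sum of x_1..x_t
    total_sq = 0.0  # running sum of squares of x_1..x_t
    for t, x in enumerate(xs, start=1):
        total += x
        total_sq += x * x
        mean = total / t
        # Biased (population) variance; clamp tiny negative rounding errors.
        var = max(total_sq / t - mean * mean, 0.0)
        out.append((x - mean) / math.sqrt(var + eps))
    return out


if __name__ == "__main__":
    a = timestep_norm([1.0, 3.0, 5.0, 7.0])
    b = timestep_norm([1.0, 3.0, 100.0, -50.0])
    # The first two outputs agree: later inputs never affect earlier positions.
    print(a[:2] == b[:2])
```

Since each output depends only on a prefix of the input, the same running sums can be carried forward token by token at inference time, which is what makes this kind of normalization compatible with auto-regressive decoding.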

Results

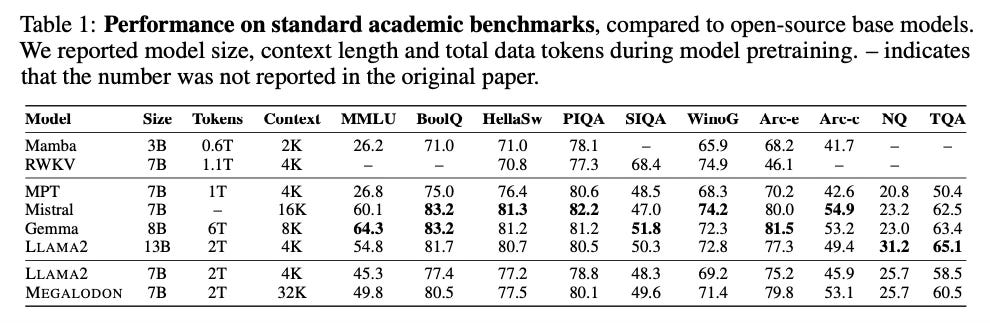

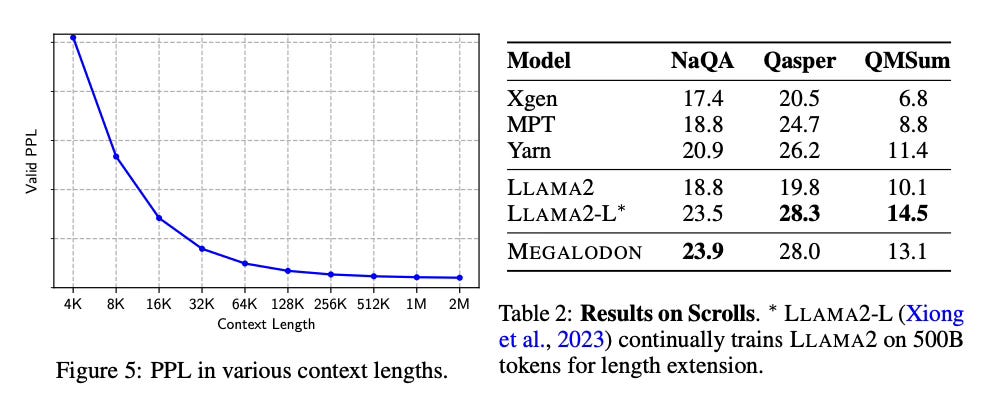

The paper evaluates MEGALODON on various tasks and modalities, including large-scale language model pretraining on 2 trillion tokens, academic benchmarks, long-context question answering, image classification, and auto-regressive language modeling. Key results:

- MEGALODON 7B outperforms LLAMA 2-7B in training loss and downstream task performance when both are pretrained on 2 trillion tokens, while being more computationally efficient for long-context pretraining.

- MEGALODON exhibits strong performance on long-context tasks, demonstrating its ability to model extremely long sequences (up to 2M tokens).

- On other benchmarks such as ImageNet and PG-19, MEGALODON achieves state-of-the-art results among architectures of similar size.

Conclusion

MEGALODON is an efficient and scalable architecture for long-context sequence modeling, with promising empirical results across various tasks and modalities. For more information, please consult the full paper.

Code: https://github.com/XuezheMax/megalodon

Congrats to the authors for their work!

Ma, Xuezhe, et al. "MEGALODON: Efficient LLM Pretraining and Inference with Unlimited Context Length." arXiv preprint arXiv:2404.08801 (2024).