MMSearch: Benchmarking the Potential of Large Models as Multi-modal Search Engines

Today's paper introduces MMSearch, a comprehensive benchmark for evaluating the potential of Large Multimodal Models (LMMs) as AI search engines. The authors develop MMSearch-engine, a pipeline that enables any LMM to function as a multimodal search engine, and create a dataset of 300 challenging queries to test these capabilities.

Method Overview

MMSearch-engine is a pipeline that lets an LMM act as a multimodal AI search engine. It uses both textual and visual information throughout the search process, so the model can work with queries that contain images and with web content that mixes text and visuals.

The MMSearch-engine pipeline consists of three main stages:

Requery: The LMM reformulates the user's query (which may include text and images) into a search-engine-friendly format. This step ensures that the query is clear and effective for retrieving relevant information.

Rerank: The top K websites are retrieved using the requery. The LMM then ranks these results by helpfulness and selects the most informative website for further analysis.

Summarization: The LMM synthesizes an answer based on the content of the selected website, considering both textual and visual information.
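The three stages above can be sketched in a few lines of Python. This is only an illustrative outline, not the authors' implementation: the `LMM` and `SearchFn` callables, the `Page` record, the prompt wording, and the default `k=8` are all assumptions made for this example. Any model client and any search backend could be plugged in behind those two callables.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Page:
    """Hypothetical record for one retrieved website."""
    url: str
    title: str
    text: str


# Hypothetical interfaces: an LMM takes a prompt plus optional image bytes
# and returns text; a search function returns up to k pages for a query.
LMM = Callable[[str, Optional[bytes]], str]
SearchFn = Callable[[str, int], List[Page]]


def requery(lmm: LMM, question: str, image: Optional[bytes] = None) -> str:
    """Stage 1: turn the user's (possibly multimodal) query into a search string."""
    prompt = f"Rewrite this question as a concise web search query:\n{question}"
    return lmm(prompt, image).strip()


def rerank(lmm: LMM, question: str, pages: List[Page]) -> Page:
    """Stage 2: ask the LMM which retrieved page looks most helpful."""
    listing = "\n".join(f"{i}: {p.title}" for i, p in enumerate(pages))
    prompt = (
        f"Question: {question}\nCandidates:\n{listing}\n"
        "Reply with the index of the most helpful page."
    )
    reply = lmm(prompt, None).strip()
    try:
        index = int(reply)
    except ValueError:
        index = 0  # fall back to the first result if the reply is not a number
    if not 0 <= index < len(pages):
        index = 0
    return pages[index]


def summarize(lmm: LMM, question: str, page: Page,
              image: Optional[bytes] = None) -> str:
    """Stage 3: answer the question from the selected page's content."""
    prompt = (
        "Answer the question using this page.\n"
        f"Question: {question}\nPage: {page.text}"
    )
    return lmm(prompt, image).strip()


def mmsearch_engine(lmm: LMM, search: SearchFn, question: str,
                    image: Optional[bytes] = None, k: int = 8) -> str:
    """Run requery, retrieval, rerank, and summarization end to end."""
    query = requery(lmm, question, image)
    pages = search(query, k)
    if not pages:
        return ""  # nothing retrieved, so there is nothing to summarize
    best = rerank(lmm, question, pages)
    return summarize(lmm, question, best, image)
```

Keeping each stage as its own function mirrors the way the benchmark isolates requery, rerank, and summarization, so one stage can be swapped out or scored without touching the others.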

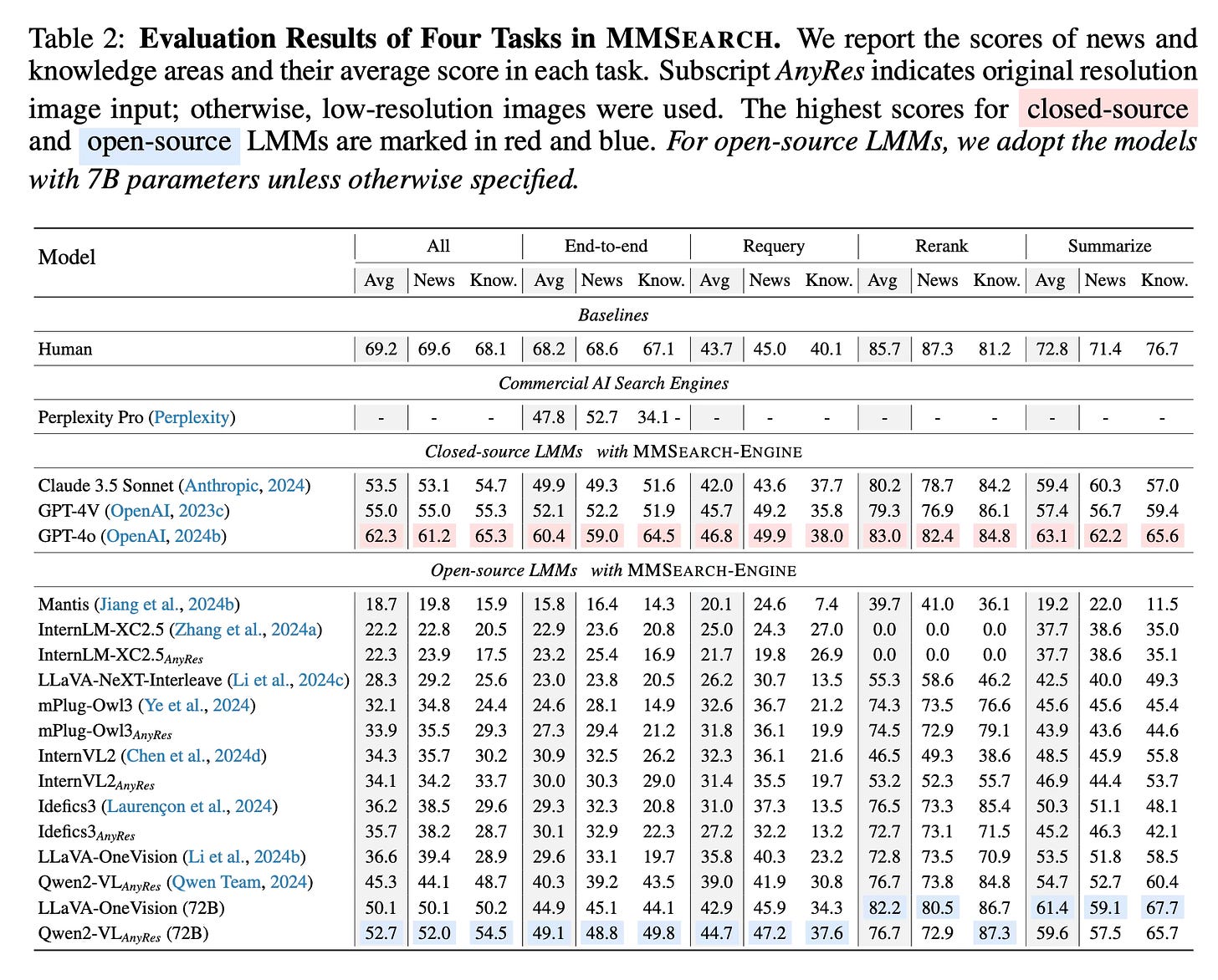

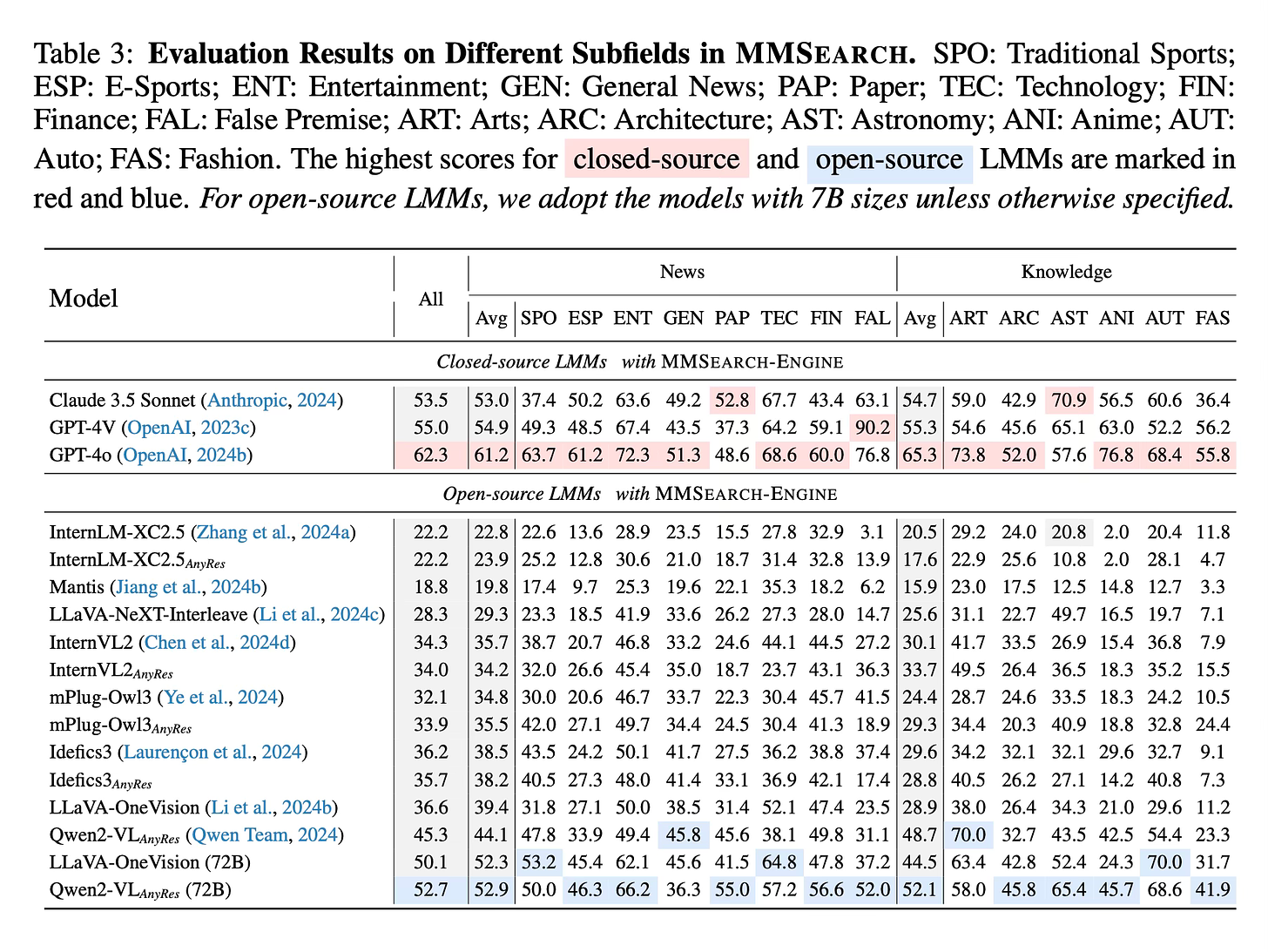

To evaluate LMMs using this pipeline, the authors created MMSearch, a benchmark dataset containing 300 carefully curated queries across 14 subfields. These queries are divided into two main areas: News and Knowledge. The News queries focus on recent events to ensure they are not part of the LMMs' training data, while the Knowledge queries require rare information that current state-of-the-art LMMs struggle to answer directly.
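As a rough illustration of how such a benchmark could be organized in code, the sketch below models one query as a small record and tallies queries per area. The `Query` class, its field names, and the `count_by_area` helper are hypothetical and are not taken from the paper or its released data format.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Query:
    """Hypothetical record for one benchmark query (field names are assumed)."""
    area: str                          # "News" or "Knowledge"
    subfield: str                      # one of the benchmark's subfields
    text: str                          # the textual part of the query
    image_path: Optional[str] = None   # set only when the query includes an image


def count_by_area(queries: Iterable[Query]) -> Counter:
    """Count how many queries fall into each area."""
    return Counter(q.area for q in queries)
```

Grouping by `area` in this way makes it straightforward to report scores separately for News and Knowledge queries.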

Results

The paper reports that when equipped with MMSearch-engine, state-of-the-art LMMs like GPT-4o and Claude 3.5 Sonnet outperformed the commercial AI search engine Perplexity Pro in end-to-end tasks. However, the error analysis revealed that current LMMs still face challenges in fully grasping multimodal search-specific tasks, particularly in requery and rerank capabilities.

Conclusion

MMSearch provides a framework for evaluating and enhancing the multimodal search capabilities of Large Multimodal Models. By identifying current limitations and areas for improvement, the benchmark aims to guide the development of more effective multimodal AI search engines. For more information, please consult the full paper.

Congrats to the authors for their work!

Jiang, Dongzhi, et al. "MMSearch: Benchmarking the Potential of Large Models as Multi-Modal Search Engines." arXiv preprint arXiv:2409.12959 (2024).