MoRA: High-Rank Updating for Parameter-Efficient Fine-Tuning

Today's paper introduces MoRA (High-Rank Updating for Parameter-Efficient Fine-Tuning), a method that aims to improve upon the popular Low-Rank Adaptation (LoRA) technique for fine-tuning large language models. The authors analyze the limitations of LoRA's low-rank updating mechanism and propose using a square matrix to achieve high-rank updating while maintaining the same number of trainable parameters.

Method Overview

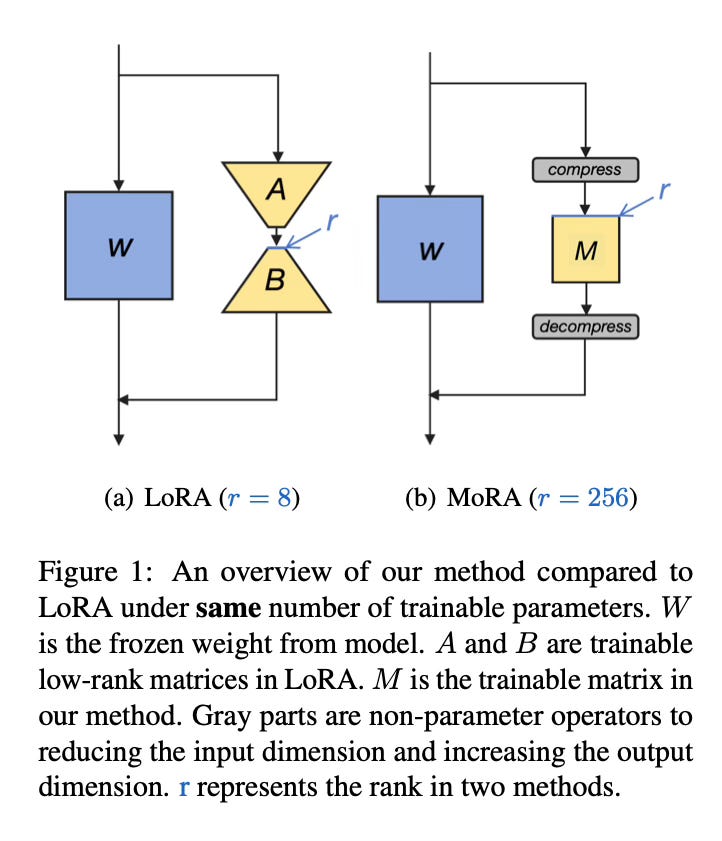

The key idea behind MoRA is to use a square matrix M instead of the low-rank matrices employed by LoRA. This allows for a higher rank update to the model's weights, potentially improving the ability to learn and memorize new knowledge during fine-tuning.
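To make the parameter budget concrete, here is a small sketch. It assumes a weight matrix of shape d × k and a LoRA rank r, so that LoRA trains (d + k)·r parameters, and it picks the largest square side whose parameter count fits in that budget. The function names and the example sizes are illustrative assumptions, not values taken from this summary.

```python
import math

def lora_param_count(d, k, r):
    """Trainable parameters of a rank-r LoRA pair: B is (d x r), A is (r x k)."""
    return (d + k) * r

def square_side_for_budget(d, k, r):
    """Largest integer side r_hat such that r_hat * r_hat <= (d + k) * r."""
    return math.isqrt(lora_param_count(d, k, r))

# Hypothetical layer size and LoRA rank, chosen only for illustration.
d = k = 4096
r = 8

budget = lora_param_count(d, k, r)
r_hat = square_side_for_budget(d, k, r)

print(f"LoRA rank {r}: {budget} trainable parameters, update rank at most {r}")
print(f"Square matrix {r_hat} x {r_hat}: {r_hat * r_hat} trainable parameters, "
      f"update rank at most {r_hat}")
```

With these example sizes the square matrix uses the same budget as the LoRA pair, but its rank can be far larger than r.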

To make this work, MoRA introduces non-parameter operators that reduce the input dimension and increase the output dimension for the square matrix M. This ensures that the updated weights can be merged back into the original language model, similar to how LoRA operates.
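The sketch below illustrates why such an update can still be merged into the original weights. Truncation and zero-padding are used here only as the simplest stand-in for the compression and decompression operators; all function names are hypothetical, and the tiny sizes are chosen so the result can be checked by hand.

```python
def matvec(matrix, vector):
    """Plain matrix-vector product on nested lists."""
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]

def compress_truncate(x, r_hat):
    """Reduce the input dimension by keeping the first r_hat entries (no parameters)."""
    return x[:r_hat]

def decompress_pad(y, d_out):
    """Increase the output dimension by zero-padding up to d_out (no parameters)."""
    return y + [0.0] * (d_out - len(y))

def square_update(M, x, d_out):
    """Update term: decompress(M @ compress(x))."""
    return decompress_pad(matvec(M, compress_truncate(x, len(M))), d_out)

def merged_delta_weight(M, d_out, k_in):
    """Equivalent dense (d_out x k_in) matrix: M in the top-left block, zeros elsewhere."""
    delta = [[0.0] * k_in for _ in range(d_out)]
    for i, row in enumerate(M):
        for j, value in enumerate(row):
            delta[i][j] = value
    return delta

M = [[1.0, 2.0], [3.0, 4.0]]   # trainable square matrix (2 x 2)
x = [1.0, 1.0, 5.0, 7.0]       # input of dimension 4

via_operators = square_update(M, x, d_out=4)
via_merged = matvec(merged_delta_weight(M, 4, 4), x)
print(via_operators, via_merged)
```

Because both operators are fixed linear maps, the whole update is equivalent to a single dense matrix that can be added to the frozen weight after training, which is the same merging property LoRA relies on.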

The authors discuss four different types of non-parameter operators that can be used in MoRA: truncating dimensions, sharing rows and columns of M, reshaping the input into chunks, and applying rotation operators. None of these adds trainable parameters, so the entire parameter budget is spent on the square matrix M.

By using a high-rank square matrix along with these non-parameter operators, MoRA aims to overcome the limitations of low-rank updating in LoRA, particularly for tasks that require memorizing and learning new knowledge during fine-tuning.
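The difference in rank can be checked on a toy example. The sketch below compares a rank-1 LoRA-style product on an 8 × 8 weight (16 trainable parameters) with a 4 × 4 square matrix that uses the same 16 parameters. The matrices are made-up values, and the exact-arithmetic `rank` helper is a small illustrative routine, not part of any library.

```python
from fractions import Fraction

def matmul(A, B):
    """Plain matrix product on nested lists."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]

def rank(matrix):
    """Matrix rank via Gaussian elimination with exact fractions."""
    rows = [[Fraction(v) for v in row] for row in matrix]
    found = 0
    for col in range(len(rows[0])):
        pivot = next((i for i in range(found, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[found], rows[pivot] = rows[pivot], rows[found]
        for i in range(found + 1, len(rows)):
            factor = rows[i][col] / rows[found][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[found])]
        found += 1
    return found

# LoRA-style update with r = 1 on an 8 x 8 weight: B is 8 x 1, A is 1 x 8.
B_lora = [[i] for i in range(1, 9)]
A_lora = [[1, 0, 1, 0, 1, 0, 1, 0]]
lora_rank = rank(matmul(B_lora, A_lora))

# Square matrix with the same 16 parameters (4 x 4), made-up full-rank values.
M = [[2, 1, 0, 0],
     [1, 2, 1, 0],
     [0, 1, 2, 1],
     [0, 0, 1, 2]]
square_rank = rank(M)

print("LoRA update rank:", lora_rank)
print("Square matrix rank:", square_rank)
```

The LoRA product can never exceed rank r regardless of the values it learns, while the square matrix can reach rank 4 with the same parameter count.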

Results

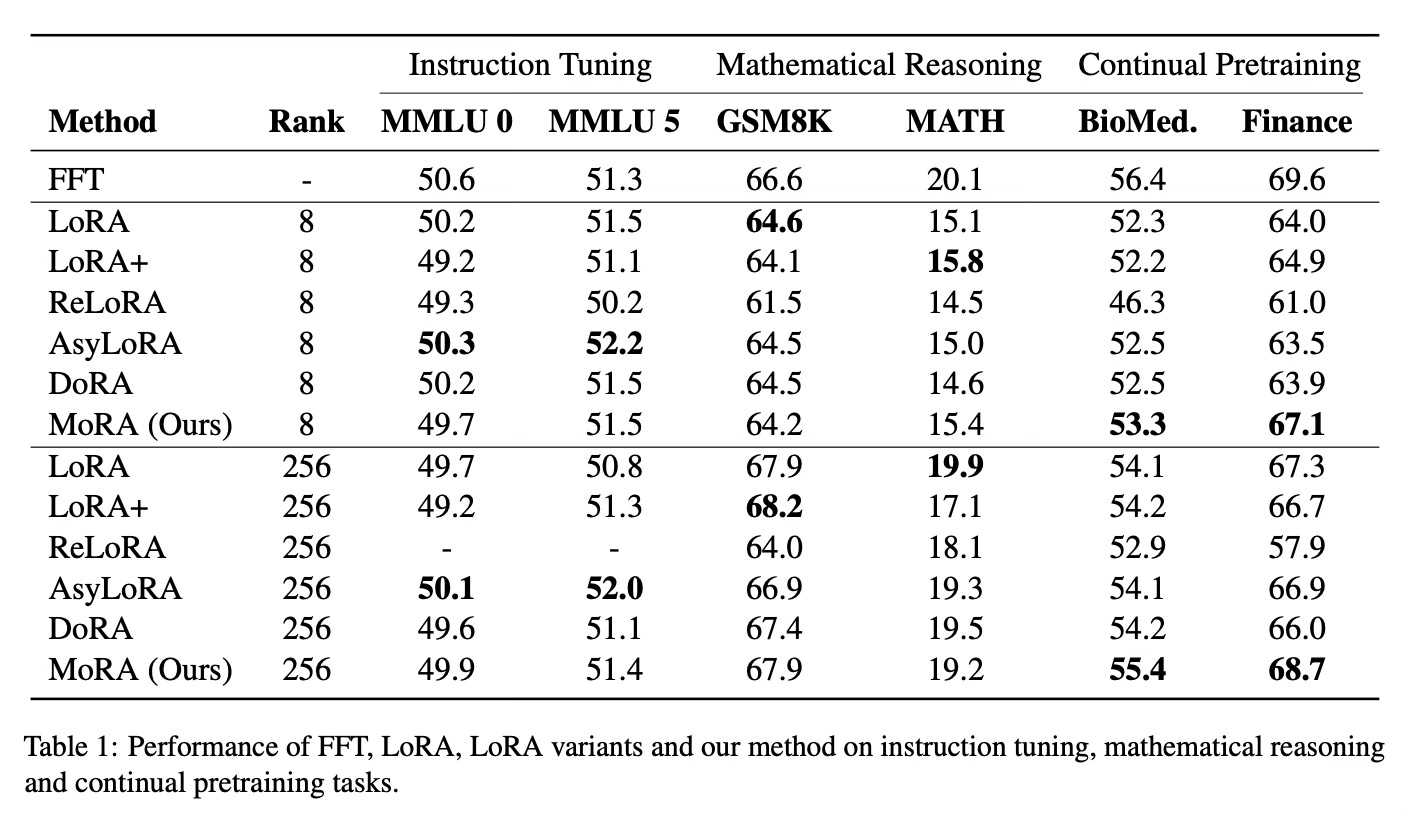

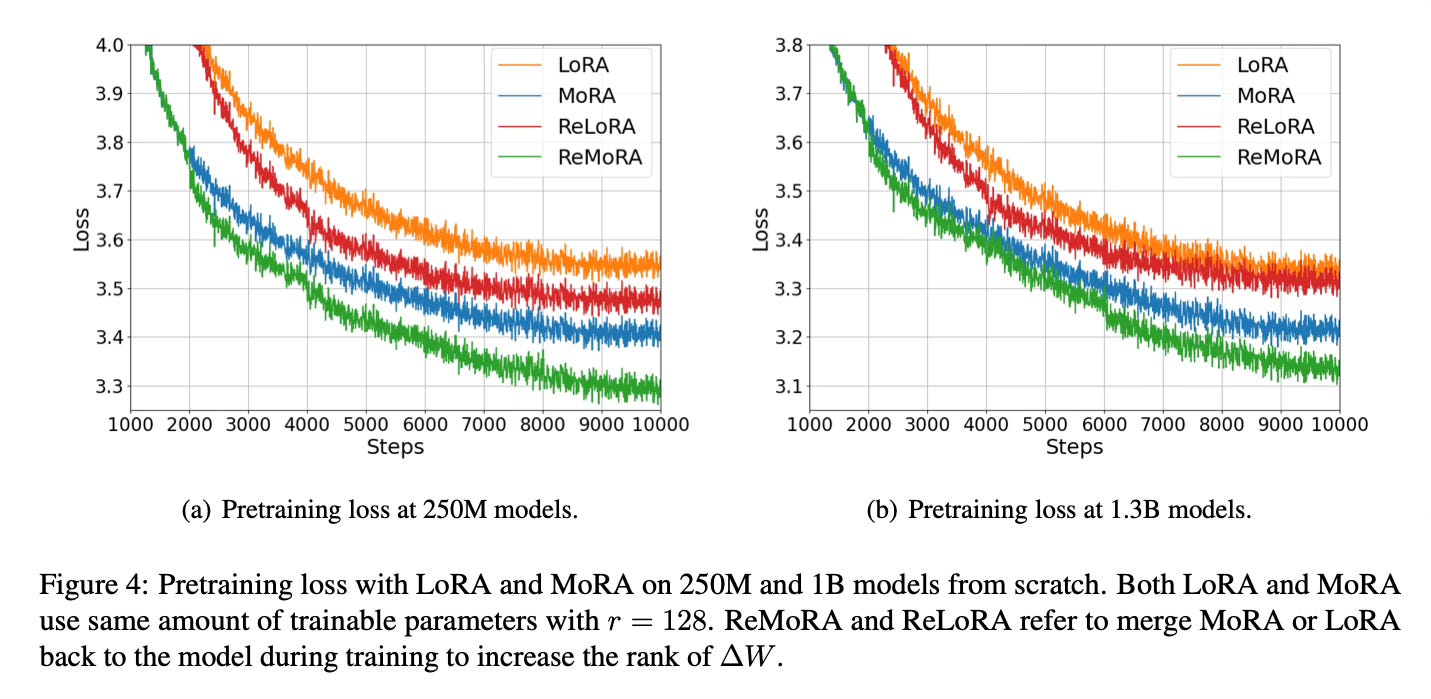

The authors evaluate MoRA across five tasks: instruction tuning, mathematical reasoning, continual pretraining, memory, and pretraining. They find that MoRA outperforms LoRA on memory-intensive tasks, where the ability to memorize new knowledge is crucial. On other tasks, such as instruction tuning, MoRA achieves performance comparable to LoRA.

Conclusion

The proposed MoRA method introduces high-rank updating for parameter-efficient fine-tuning of large language models. By using a square matrix and non-parameter operators, MoRA can achieve a higher-rank update than LoRA while maintaining the same number of trainable parameters. This approach shows promising results, particularly for tasks that require memorizing and learning new knowledge during fine-tuning. For more information, please consult the full paper.

Congrats to the authors for their work!

Jiang, Ting, et al. "MoRA: High-Rank Updating for Parameter-Efficient Fine-Tuning." ArXiv, 20 May 2024, https://arxiv.org/abs/2405.12130.