Needle In A Multimodal Haystack

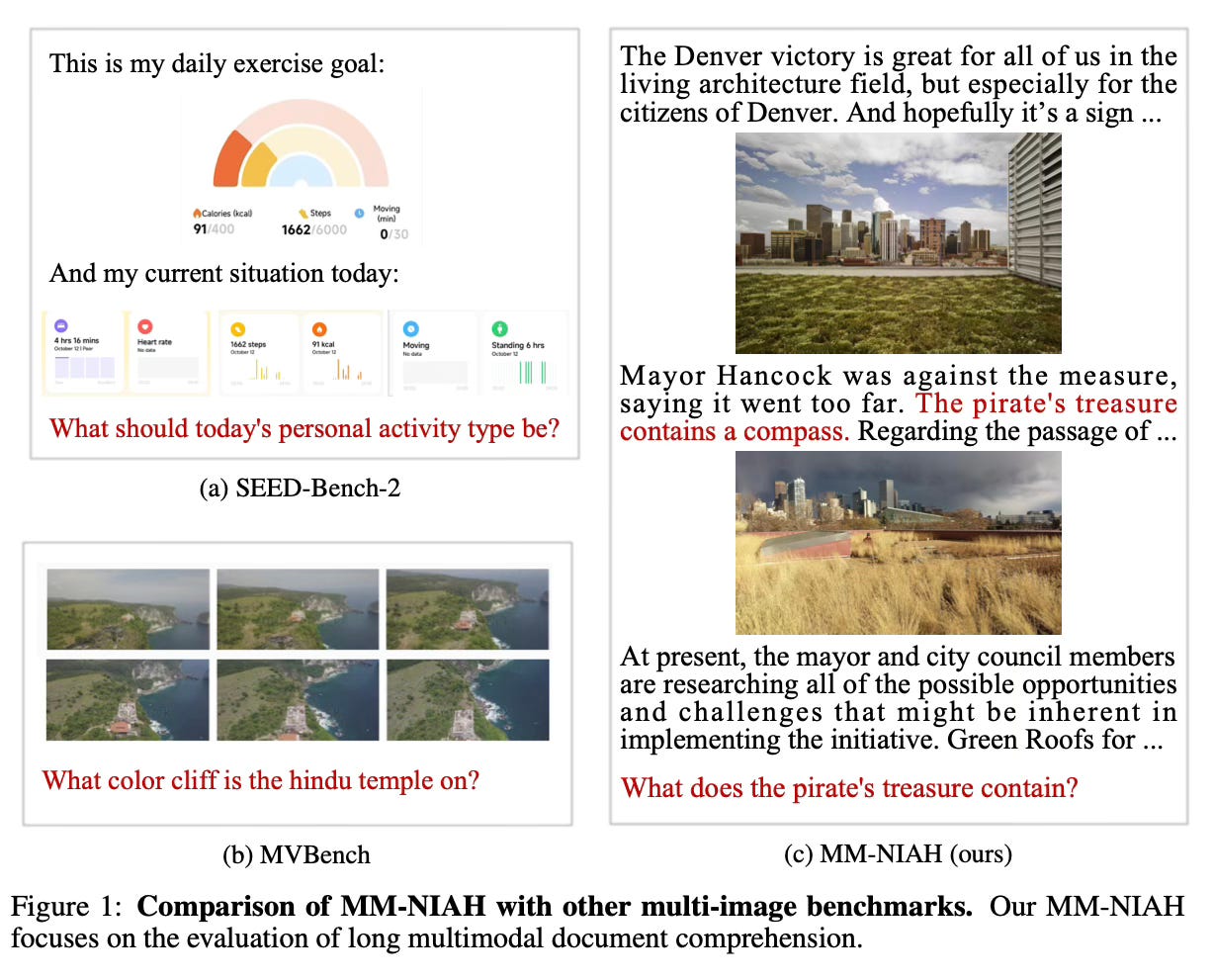

Today's paper introduces the Needle In A Multimodal Haystack (MM-NIAH) benchmark, designed to evaluate the ability of multimodal large language models to comprehend long multimodal documents.

Method Overview

The benchmark builds long "multimodal haystack" documents by concatenating interleaved image-text sequences from the OBELICS dataset. "Needles" containing key information are then inserted into either the text or the images of these documents.
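
The construction can be sketched in a few lines. This is a minimal illustration rather than the authors' pipeline: the `Segment` type, the function names, and the idea of expressing the insertion point as a relative depth between 0 and 1 are all assumptions made for the example, and only text needles are covered.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Segment:
    kind: str     # "text" or "image" (hypothetical representation)
    content: str  # sentence text, or an image path/identifier


def build_haystack(documents: List[List[Segment]]) -> List[Segment]:
    """Concatenate interleaved image-text documents into one long sequence."""
    haystack: List[Segment] = []
    for doc in documents:
        haystack.extend(doc)
    return haystack


def insert_text_needle(haystack: List[Segment], needle: str, depth: float) -> List[Segment]:
    """Return a copy of the haystack with a text needle placed at a relative depth in [0, 1]."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    position = int(depth * len(haystack))
    return haystack[:position] + [Segment("text", needle)] + haystack[position:]


# Two toy documents standing in for OBELICS samples.
docs = [
    [Segment("text", "A story about rivers."), Segment("image", "river.png")],
    [Segment("text", "Notes on city parks."), Segment("image", "park.png")],
]
haystack = build_haystack(docs)
with_needle = insert_text_needle(haystack, "The secret code is 7421.", depth=0.5)
```

Placing the needle by relative depth makes it easy to vary where the key information sits while keeping the rest of the document fixed.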

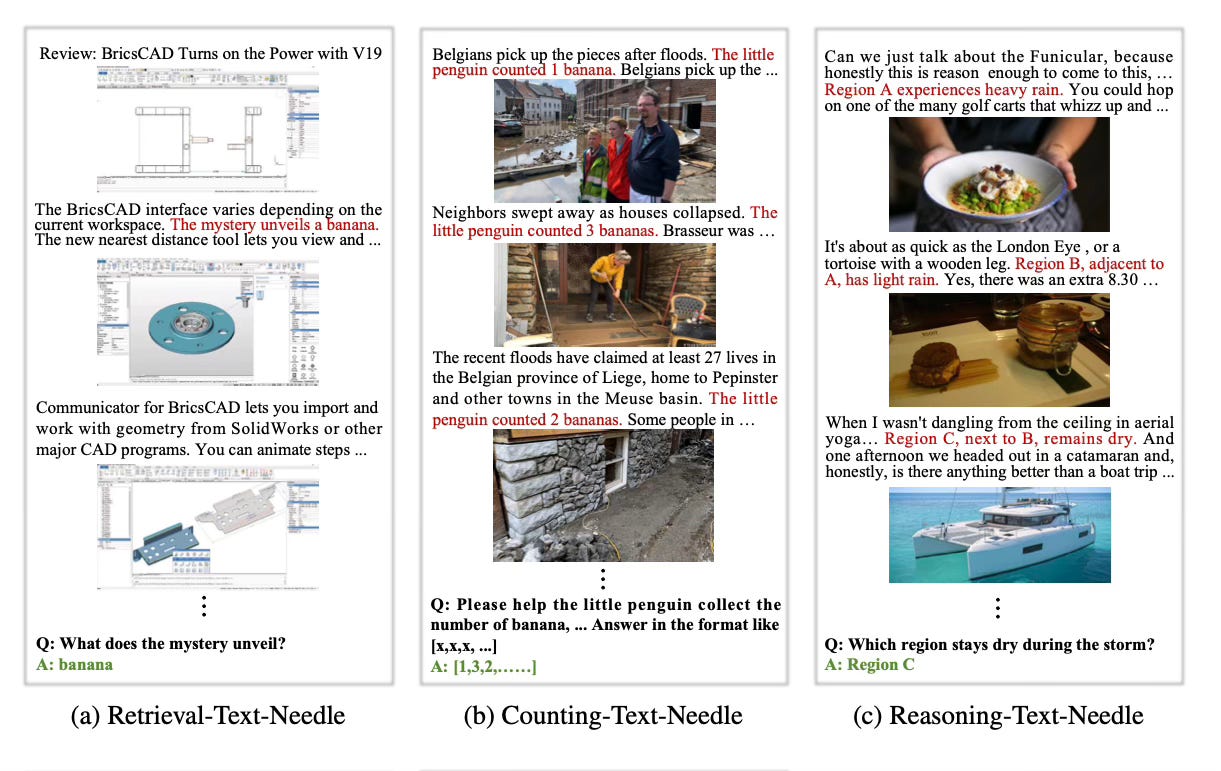

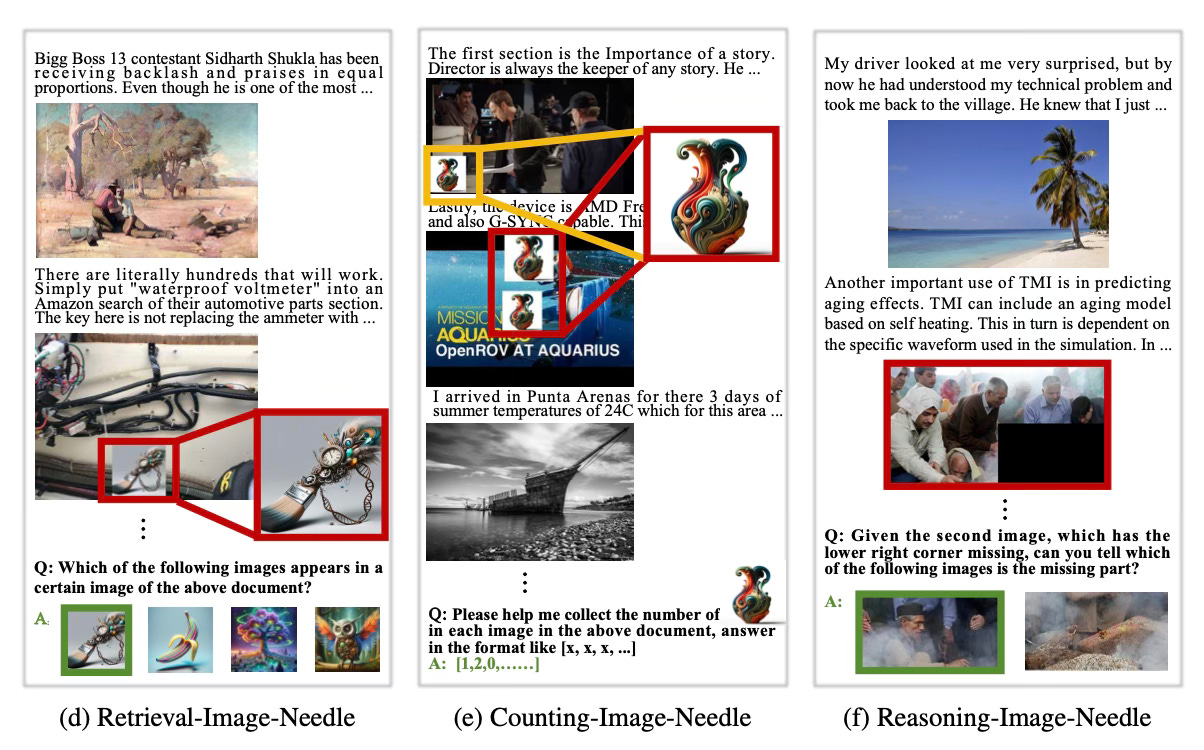

There are three types of tasks:

Retrieval: A text or image needle is inserted, and the model must retrieve this information from the document.

Counting: Multiple text statements or images are inserted as needles, and the model must count how many needles are present.

Reasoning: Multiple text statements or related images are inserted, and the model must reason over this information to answer a question.
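
To make the counting task concrete, here is a small sketch of how such a sample could be assembled for text needles. The function name, the use of plain strings for document segments, and the random placement are assumptions for illustration only, not the benchmark's actual construction code.

```python
import random
from typing import List, Tuple


def make_counting_sample(
    haystack: List[str], needles: List[str], seed: int = 0
) -> Tuple[List[str], int]:
    """Scatter text needles through a haystack; the ground truth is the needle count."""
    rng = random.Random(seed)  # seeded so the sample is reproducible
    document = list(haystack)  # copy, so the original haystack is left untouched
    for needle in needles:
        position = rng.randint(0, len(document))  # any slot, including both ends
        document.insert(position, needle)
    return document, len(needles)


haystack_text = ["Sentence one.", "Sentence two.", "Sentence three."]
needle_text = ["Needle A.", "Needle B."]
document, answer = make_counting_sample(haystack_text, needle_text, seed=42)
```

A retrieval sample would follow the same pattern with a single needle and a question about its content, while a reasoning sample would insert several related statements and ask a question that requires combining them.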

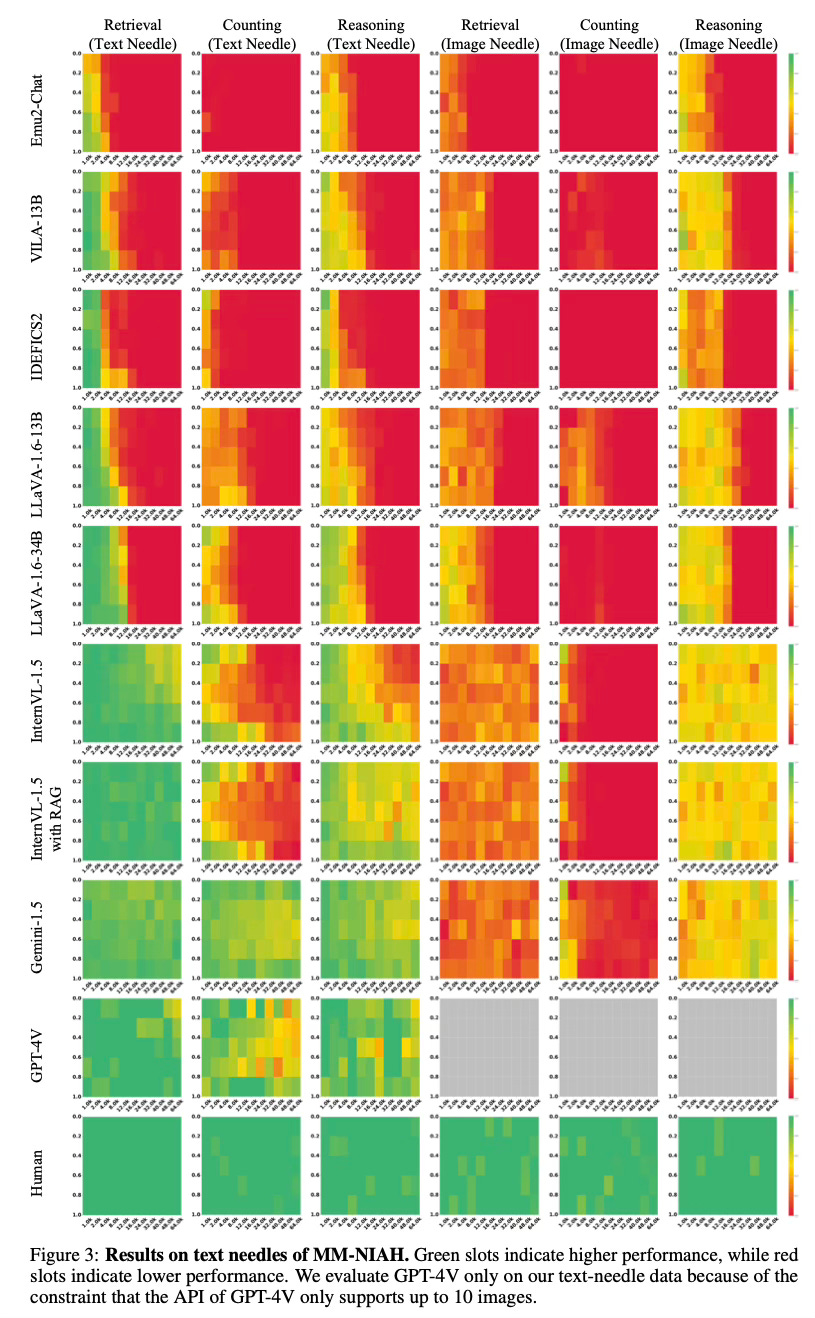

Results

Experiments show that existing multimodal large language models struggle on this benchmark, especially on vision-centric tasks that involve image needles. Models trained on interleaved image-text data did not perform better than the others. The "lost in the middle" problem, where information placed in the middle of a long context is harder to use than information near its start or end, was observed for text needles. Retrieval-augmented generation helped with text retrieval but not with image retrieval.
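
One common way to check for a "lost in the middle" effect is to group results by where the needle was placed and compare accuracy across positions. The sketch below assumes each result is a hypothetical `(depth, correct)` pair, with depth as a relative position between 0 and 1; it is not taken from the paper's evaluation code, and the toy results are made up purely to exercise the function.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def accuracy_by_depth(
    results: Iterable[Tuple[float, bool]], num_bins: int = 5
) -> Dict[int, float]:
    """Group (depth, correct) pairs into equal-width depth bins and average correctness."""
    totals: Dict[int, int] = defaultdict(int)
    hits: Dict[int, int] = defaultdict(int)
    for depth, correct in results:
        # A depth of exactly 1.0 is clamped into the last bin.
        bin_index = min(int(depth * num_bins), num_bins - 1)
        totals[bin_index] += 1
        hits[bin_index] += int(correct)
    return {b: hits[b] / totals[b] for b in sorted(totals)}


# Toy results, not real benchmark numbers.
toy_results = [(0.0, True), (0.1, True), (0.5, False), (0.5, True), (1.0, True)]
per_bin = accuracy_by_depth(toy_results, num_bins=5)
```

If the middle bins score noticeably lower than the first and last ones, that pattern is consistent with the effect described above.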

Conclusion

Overall, the results suggest that understanding long multimodal documents remains a significant challenge for current models. For more information, please consult the full paper or the project page.

Congrats to the authors for their work!

Wang, Weiyun, et al. "Needle In A Multimodal Haystack." arXiv preprint arXiv:2406.07230, 2024.