Octo-planner: On-device Language Model for Planner-Action Agents

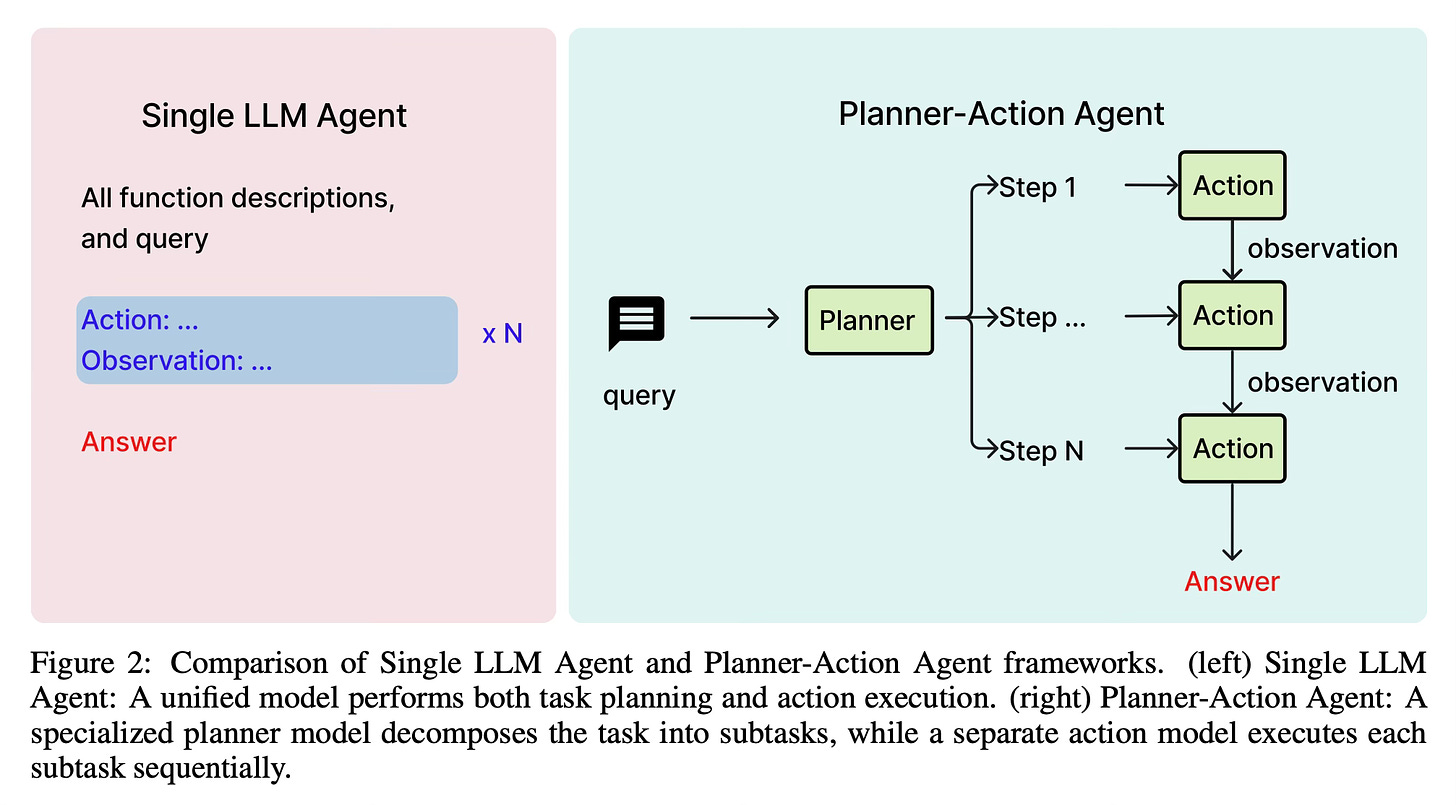

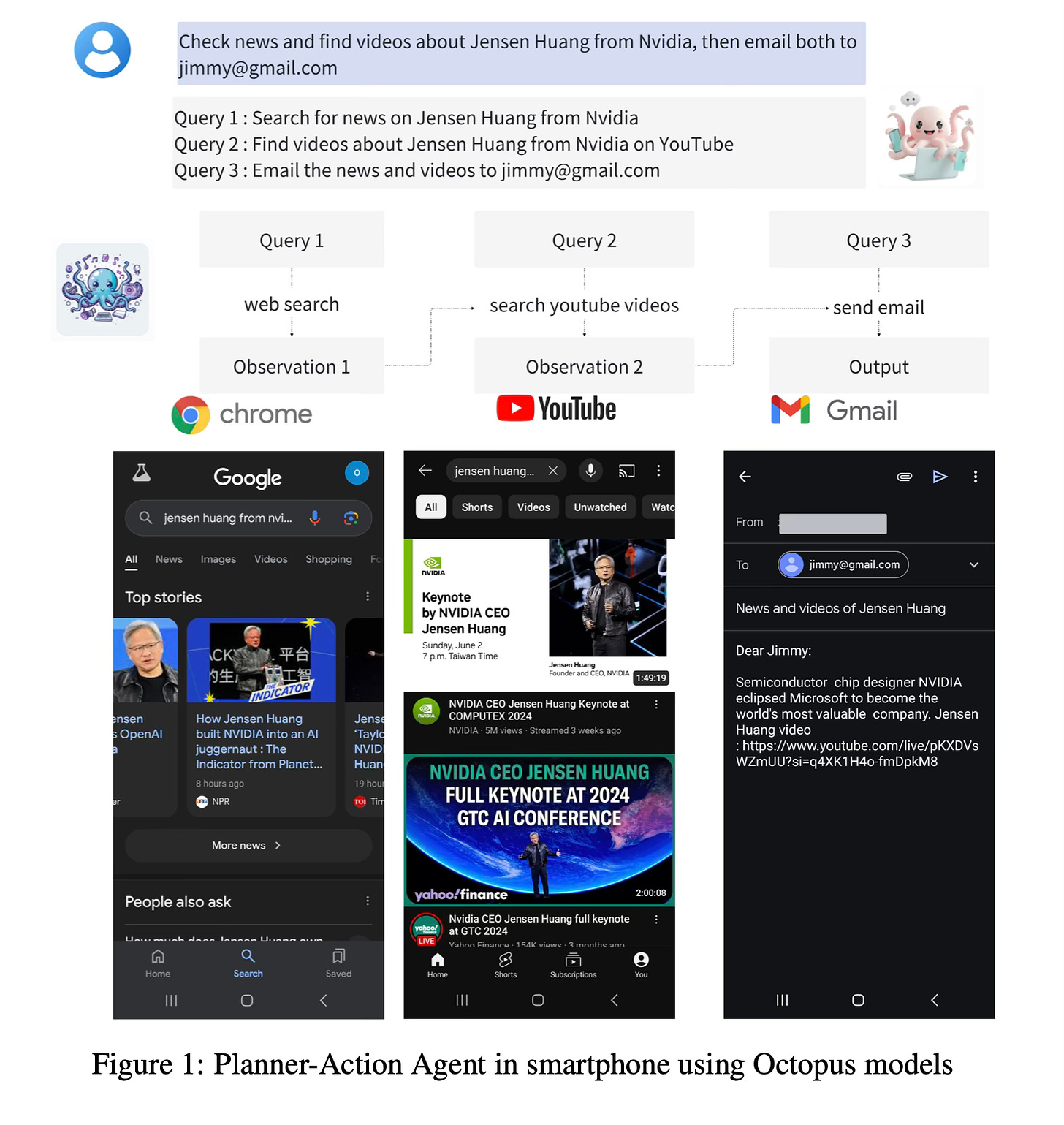

Today's paper introduces Octo-planner, an efficient on-device planning agent for AI assistants. The authors present a Planner-Action framework that separates planning from action execution, so that each component can be optimized for resource-constrained devices. This approach aims to make AI agents more practical and accessible for everyday use while maintaining high accuracy.

Method Overview

The Octo-planner system uses a two-part framework: a planning agent and an action agent. The planning agent, based on a fine-tuned language model, takes a user query and available function descriptions as input. It then generates a sequence of sub-steps to accomplish the task. The action agent, using the Octopus model, executes each sub-step sequentially.
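
To make the control flow concrete, here is a minimal sketch of the planner-action loop described above. It is not the authors' implementation: `toy_planner` and `toy_action_agent` are hypothetical stand-ins for the fine-tuned planner and the Octopus action model, and the keyword matching is only a placeholder for real model calls.

```python
from typing import Callable, Dict, List


def toy_planner(query: str, descriptions: Dict[str, str]) -> List[str]:
    """Hypothetical stand-in for the fine-tuned planner model.

    A real planner would receive the query plus the function descriptions
    and generate sub-steps; here we simply split the query on " then ".
    """
    return [step.strip() for step in query.split(" then ") if step.strip()]


def toy_action_agent(step: str, functions: Dict[str, Callable[[str], str]]) -> str:
    """Hypothetical stand-in for the action model.

    Picks the first function whose name appears in the sub-step text.
    """
    for name, fn in functions.items():
        if name in step:
            return fn(step)
    raise ValueError(f"no function matches step: {step!r}")


def run_agent(
    query: str,
    functions: Dict[str, Callable[[str], str]],
    descriptions: Dict[str, str],
) -> List[str]:
    """Plan once, then execute each sub-step sequentially."""
    steps = toy_planner(query, descriptions)
    return [toy_action_agent(step, functions) for step in steps]


# Illustrative function set (names and behavior are assumptions).
functions = {
    "email": lambda step: "email:ok",
    "calendar": lambda step: "calendar:ok",
}
descriptions = {
    "email": "Send an email to a contact.",
    "calendar": "Look up calendar events.",
}

print(run_agent("check calendar for today then send email to Bob",
                functions, descriptions))
```

The point of the split is that the planner runs once per query, while the action agent only ever sees one sub-step at a time.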

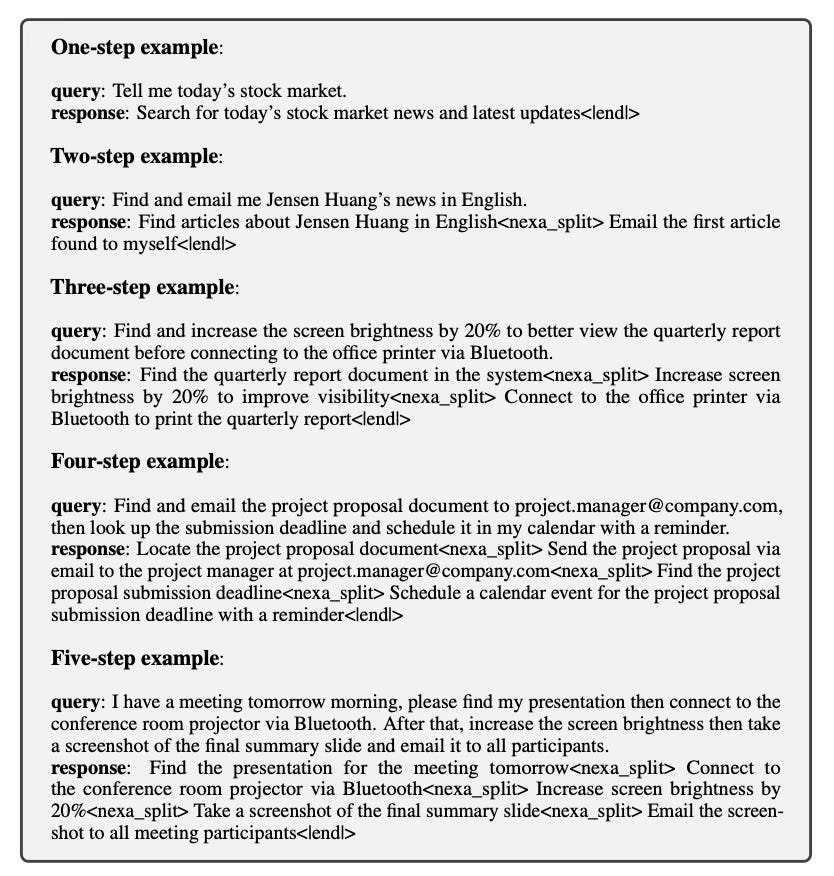

To optimize for on-device performance, the authors employ model fine-tuning instead of in-context learning. This approach reduces computational costs and improves response times. The training process involves using GPT-4 to generate diverse planning queries and responses based on available functions. These generated datasets are then validated to ensure quality. Some examples of the data are shown below:

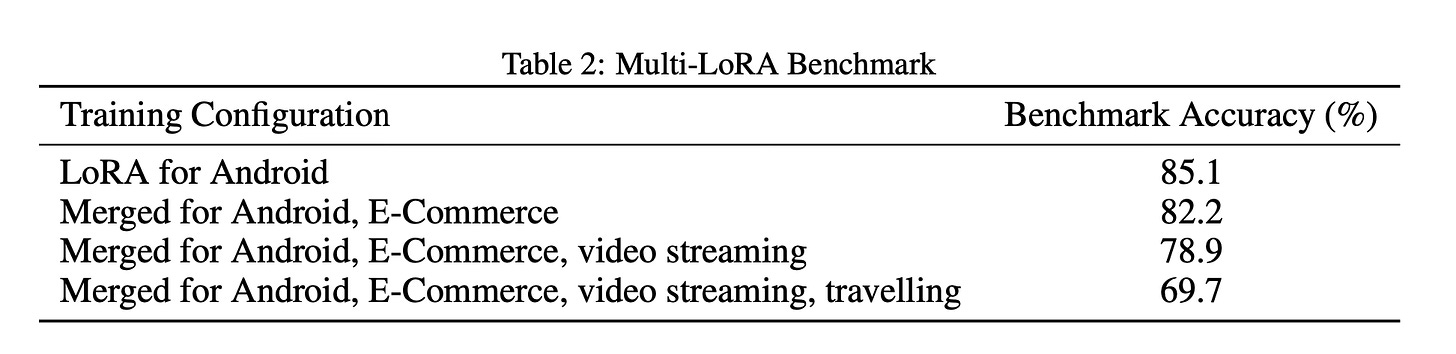

The authors fine-tune the Phi-3 Mini model on this curated dataset. To address multi-domain planning challenges, they developed a multi-LoRA (Low-Rank Adaptation) training method. This technique merges weights from LoRAs trained on distinct function subsets, enabling flexible handling of complex, multi-domain queries while maintaining computational efficiency.
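
As a rough illustration of what merging LoRA weights can mean, the sketch below uses the standard LoRA update, in which an adapter contributes `(alpha / rank) * B @ A` to a base weight matrix, and sums the contributions of several adapters. The weighted-sum merge rule, the `merge_loras` helper, and the tiny matrices are assumptions for illustration; the paper's exact merging procedure may differ.

```python
from typing import List, Optional, Sequence, Tuple

Matrix = List[List[float]]
# One adapter: (B, A, alpha, rank), with B of shape (d_out, rank) and A of shape (rank, d_in).
Adapter = Tuple[Matrix, Matrix, float, int]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_delta(B: Matrix, A: Matrix, alpha: float, rank: int) -> Matrix:
    """Standard LoRA weight update: (alpha / rank) * B @ A."""
    scale = alpha / rank
    return [[scale * v for v in row] for row in matmul(B, A)]


def merge_loras(
    base: Matrix,
    adapters: Sequence[Adapter],
    weights: Optional[Sequence[float]] = None,
) -> Matrix:
    """Add a weighted sum of LoRA updates to a copy of the base weights.

    The weighted-sum rule is an assumption, not the paper's stated method.
    """
    if weights is None:
        weights = [1.0] * len(adapters)
    merged = [row[:] for row in base]
    for w, (B, A, alpha, rank) in zip(weights, adapters):
        delta = lora_delta(B, A, alpha, rank)
        for i, row in enumerate(delta):
            for j, v in enumerate(row):
                merged[i][j] += w * v
    return merged


# Two toy rank-1 adapters, standing in for LoRAs trained on different function subsets.
base = [[1.0, 0.0], [0.0, 1.0]]
adapter_a: Adapter = ([[1.0], [0.0]], [[0.0, 1.0]], 2.0, 1)
adapter_b: Adapter = ([[0.0], [1.0]], [[1.0, 0.0]], 1.0, 1)

print(merge_loras(base, [adapter_a, adapter_b]))
```

Under this standard scaling, a LoRA with rank 64 and alpha 256 would apply its low-rank product with a factor of 256 / 64 = 4.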

The framework also includes a comprehensive benchmark design to evaluate the planner's performance. This involves automated generation of test datasets, expert validation, and empirical testing to ensure the reliability and accuracy of the results.

Results

The authors report the following benchmark accuracies for Octo-planner across training configurations:

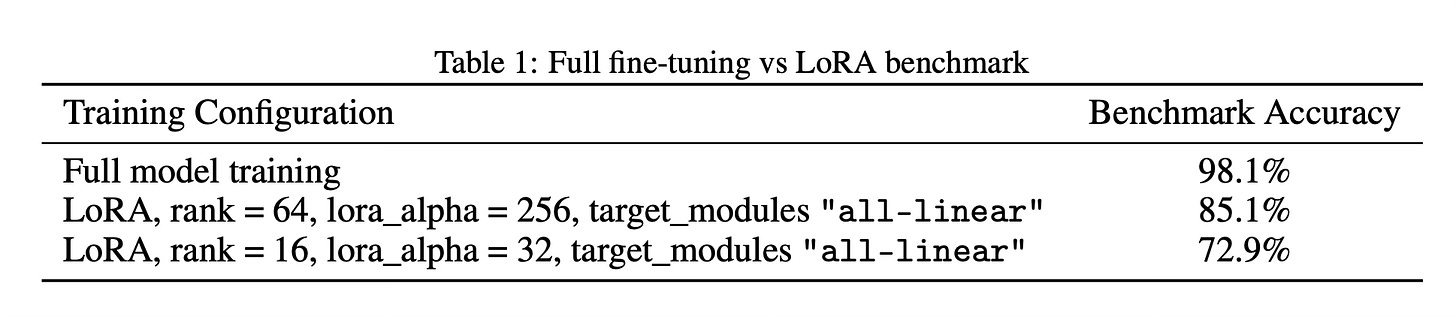

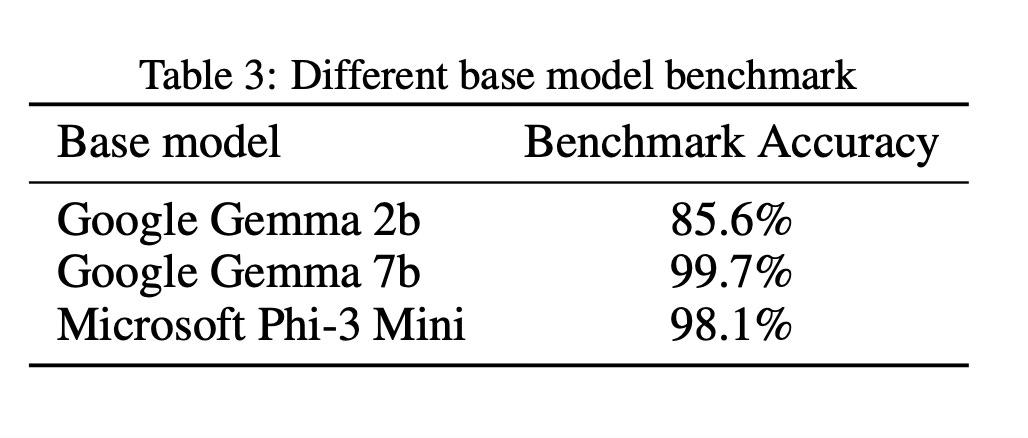

Full fine-tuning of the model reached 98.1% accuracy on the benchmark dataset.

LoRA-based training achieved 85.1% accuracy with rank 64 and alpha 256.

The multi-LoRA approach reached 82.2% accuracy when merging LoRAs from two domains.

Different base models were tested, with Google Gemma 7b achieving 99.7% accuracy after fine-tuning.

Conclusion

The Octo-planner represents a significant advancement in on-device AI planning. By separating planning and action execution and optimizing for edge devices, the authors have created a system that balances efficiency, accuracy, and adaptability. This approach addresses key challenges in AI deployment, including data privacy, latency, and offline functionality, paving the way for more practical and sophisticated AI agents on personal devices. For more information, please consult the full paper.

Congrats to the authors for their work!

Chen, Wei, et al. "Octo-planner: On-device Language Model for Planner-Action Agents." arXiv preprint arXiv:2406.18082 (2024).