Today's paper introduces Prism, a novel framework for decoupling and assessing the capabilities of Vision Language Models (VLMs). Prism separates the perception and reasoning processes involved in visual question answering, which allows both proprietary and open-source VLMs to be evaluated systematically on each ability. The framework shows where individual VLMs are strong or weak in perception versus reasoning, and it also demonstrates potential as an efficient solution for vision-language tasks.

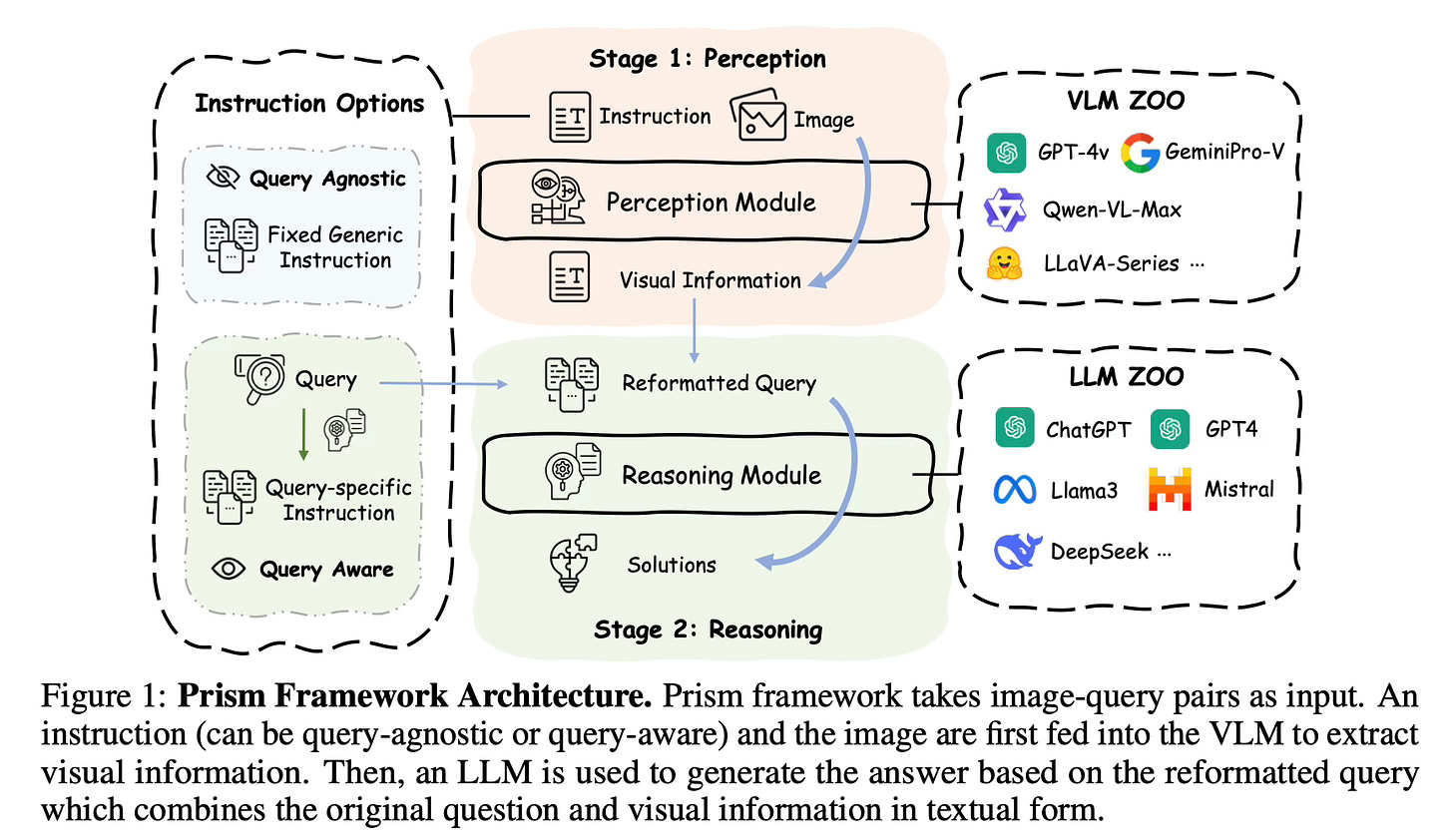

Method Overview

Prism decomposes visual question answering into two distinct stages:

Perception Stage: A VLM extracts and articulates visual information from an image in textual form, guided by either a generic or query-specific instruction.

Reasoning Stage: A Large Language Model (LLM) formulates responses based on the extracted visual information and the original question.
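The two stages can be pictured as two pluggable functions chained together. The sketch below is illustrative only: the `VLM`/`LLM` callable interfaces, the `PrismPipeline` class, the instruction wording, and the prompt template are all hypothetical and are not taken from the paper or its code. The toy models exist only so the example runs without any real model.

```python
from dataclasses import dataclass
from typing import Callable

# Assumed (hypothetical) interfaces: a VLM maps (image, instruction) -> text,
# and an LLM maps a text prompt -> text. Real models would wrap inference calls.
VLM = Callable[[bytes, str], str]
LLM = Callable[[str], str]


@dataclass
class PrismPipeline:
    """Two-stage VQA sketch: perception with a VLM, then reasoning with an LLM."""

    perceiver: VLM
    reasoner: LLM
    # Hypothetical generic instruction; not the paper's exact wording.
    instruction: str = "Describe the image in detail."

    def perceive(self, image: bytes) -> str:
        # Stage 1: the VLM articulates the visual content as text.
        # With a generic instruction, it never sees the question.
        return self.perceiver(image, self.instruction)

    def reason(self, description: str, question: str) -> str:
        # Stage 2: the LLM answers from text alone (description + original question).
        prompt = (
            f"Image description:\n{description}\n\n"
            f"Question: {question}\nAnswer:"
        )
        return self.reasoner(prompt)

    def answer(self, image: bytes, question: str) -> str:
        return self.reason(self.perceive(image), question)


# Toy stand-ins so the sketch runs without any model.
def toy_vlm(image: bytes, instruction: str) -> str:
    return "A red apple on a wooden table."


def toy_llm(prompt: str) -> str:
    return "red" if "red apple" in prompt else "unknown"


pipe = PrismPipeline(perceiver=toy_vlm, reasoner=toy_llm)
print(pipe.answer(b"<image bytes>", "What color is the apple?"))  # red
```

Because the LLM only ever receives text, swapping either field (for example `PrismPipeline(perceiver=other_vlm, reasoner=toy_llm)`) changes one stage without touching the other.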

This modular design allows different VLMs and LLMs to be swapped into each stage. To evaluate perception capabilities, the reasoning module (LLM) is kept constant while various VLMs are tested. Conversely, keeping the VLM fixed and varying the LLM reveals how much performance is limited by reasoning. Unless otherwise specified, GPT-3.5-Turbo-0125 serves as the reasoning module.
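The control logic behind this protocol can be sketched as a small scoring loop: hold one stage fixed, vary the other, and attribute score differences to the varied stage. Everything here is a hypothetical illustration. The function names, the instruction and prompt wording, and the exact-match scoring rule are assumptions (real benchmarks apply their own answer-matching rules), and the toy models stand in for actual VLMs and LLMs.

```python
from typing import Callable, Dict, List, Tuple

# Assumed (hypothetical) interfaces: VLM(image, instruction) -> text, LLM(prompt) -> text.
VLM = Callable[[bytes, str], str]
LLM = Callable[[str], str]
Sample = Tuple[bytes, str, str]  # (image, question, reference answer)

GENERIC_INSTRUCTION = "Describe the image in detail."  # hypothetical wording


def run_prism(vlm: VLM, llm: LLM, image: bytes, question: str) -> str:
    """Perception with the VLM, then reasoning with the LLM on text only."""
    description = vlm(image, GENERIC_INSTRUCTION)
    prompt = f"Image description:\n{description}\n\nQuestion: {question}\nAnswer:"
    return llm(prompt)


def accuracy(vlm: VLM, llm: LLM, data: List[Sample]) -> float:
    # Exact-match scoring is a simplifying assumption for this sketch.
    hits = sum(
        run_prism(vlm, llm, image, question).strip().lower() == gold.lower()
        for image, question, gold in data
    )
    return hits / len(data)


def compare_perception(vlms: Dict[str, VLM], fixed_llm: LLM, data: List[Sample]) -> Dict[str, float]:
    # The reasoner is held constant, so score gaps reflect the VLMs' perception.
    return {name: accuracy(vlm, fixed_llm, data) for name, vlm in vlms.items()}


def compare_reasoning(fixed_vlm: VLM, llms: Dict[str, LLM], data: List[Sample]) -> Dict[str, float]:
    # The perceiver is held constant, so score gaps reflect the LLMs' reasoning.
    return {name: accuracy(fixed_vlm, llm, data) for name, llm in llms.items()}


# Toy models so the protocol runs end to end.
SCENES = {b"img1": "a red apple on a table", b"img2": "a blue car parked outside"}


def detailed_vlm(image: bytes, instruction: str) -> str:
    return SCENES[image]


def vague_vlm(image: bytes, instruction: str) -> str:
    return "an everyday object"


def keyword_llm(prompt: str) -> str:
    # Answers with the first color word found in the description part of the prompt.
    description_words = prompt.split("Question:")[0].split()
    for color in ("red", "blue", "green"):
        if color in description_words:
            return color
    return "unknown"


def guessing_llm(prompt: str) -> str:
    return "unknown"


data = [
    (b"img1", "What color is the apple?", "red"),
    (b"img2", "What color is the car?", "blue"),
]
print(compare_perception({"detailed": detailed_vlm, "vague": vague_vlm}, keyword_llm, data))
# {'detailed': 1.0, 'vague': 0.0}
print(compare_reasoning(detailed_vlm, {"keyword": keyword_llm, "guessing": guessing_llm}, data))
# {'keyword': 1.0, 'guessing': 0.0}
```

In the perception comparison, the same reasoner sees a precise description from one VLM and a vague one from the other, so the entire accuracy gap comes from the perception stage.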

Prism can use either generic instructions for basic visual information extraction or query-specific instructions tailored to the question. The framework enables systematic comparison of VLMs by controlling for reasoning capabilities.
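The difference between the two instruction modes can be sketched as a small prompt builder. The wording of both instructions and the `build_instruction` helper are hypothetical and do not reproduce the paper's actual prompts. The choice to tell the VLM not to answer the question is likewise an assumption, made here to keep reasoning out of the perception stage.

```python
from typing import Optional

# Hypothetical instruction wording for illustration; not the paper's exact prompts.
GENERIC_INSTRUCTION = (
    "Describe the image in detail, including objects, attributes, any visible text, "
    "and their spatial relationships."
)


def build_instruction(question: Optional[str] = None, query_specific: bool = False) -> str:
    """Return the perception-stage instruction for the VLM."""
    if not query_specific:
        # Generic mode: the same instruction for every question about the image.
        return GENERIC_INSTRUCTION
    if not question:
        raise ValueError("query-specific mode requires the question")
    # Query-specific mode: steer the description toward question-relevant details,
    # while leaving the actual answering to the reasoning stage.
    return (
        f"{GENERIC_INSTRUCTION} Focus on details relevant to this question, "
        f"but do not answer it: {question}"
    )


print(build_instruction())
print(build_instruction("How many chairs are by the window?", query_specific=True))
```

A generic description does not depend on the question, so it can be reused for every question about the same image. A query-specific description may capture fine details the question hinges on, at the cost of one perception call per question.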

Additionally, Prism can function as an efficient vision-language task solver by combining a lightweight VLM focused on perception with a powerful LLM for reasoning. The authors train a 2B-parameter visual captioner based on the LLaVA architecture to serve as the perception module.

Results

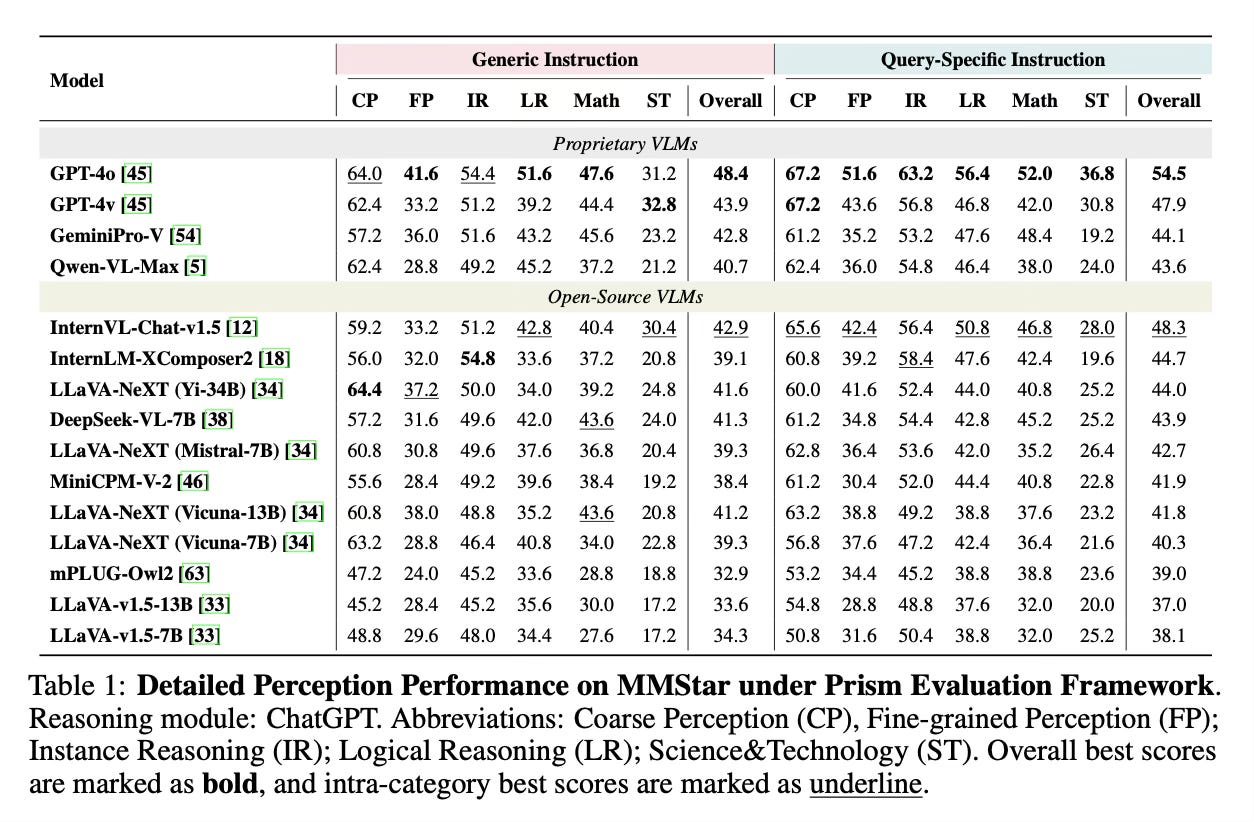

Key findings from using Prism to analyze VLM capabilities include:

Proprietary VLMs like GPT-4V lead in perception capabilities

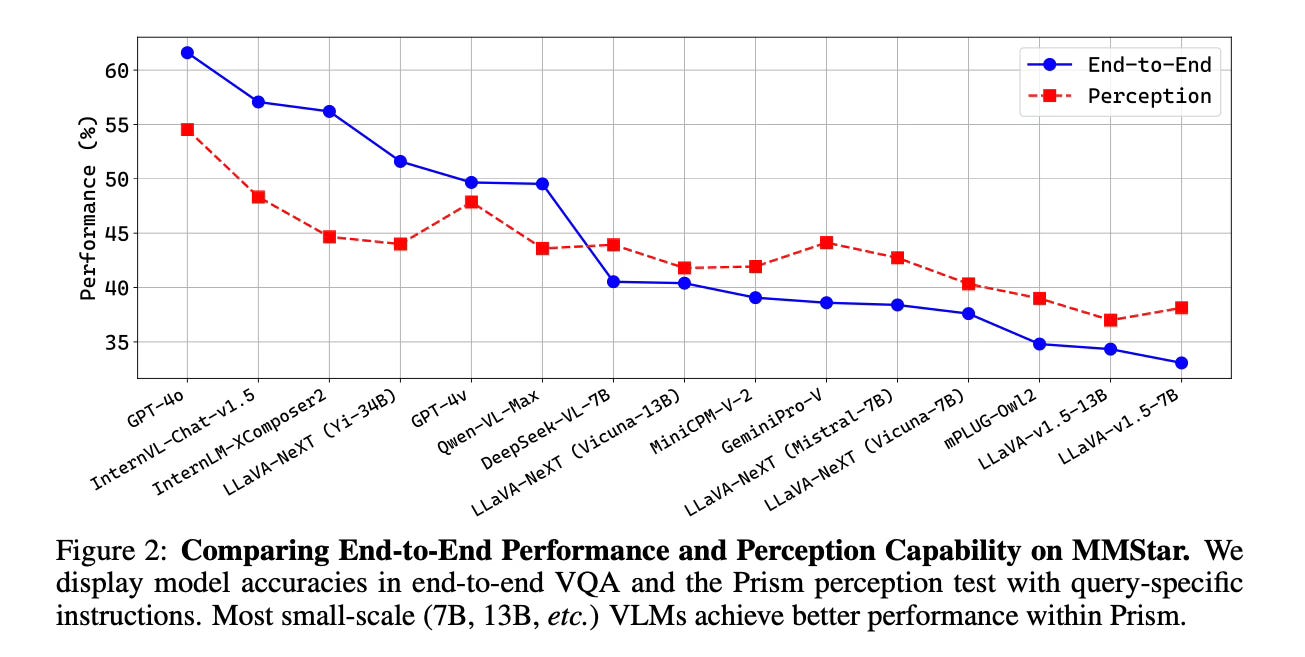

Open-source VLMs show consistent perception performance regardless of language model size

Smaller open-source VLMs (e.g., 7B parameters) are often constrained by limited reasoning capabilities

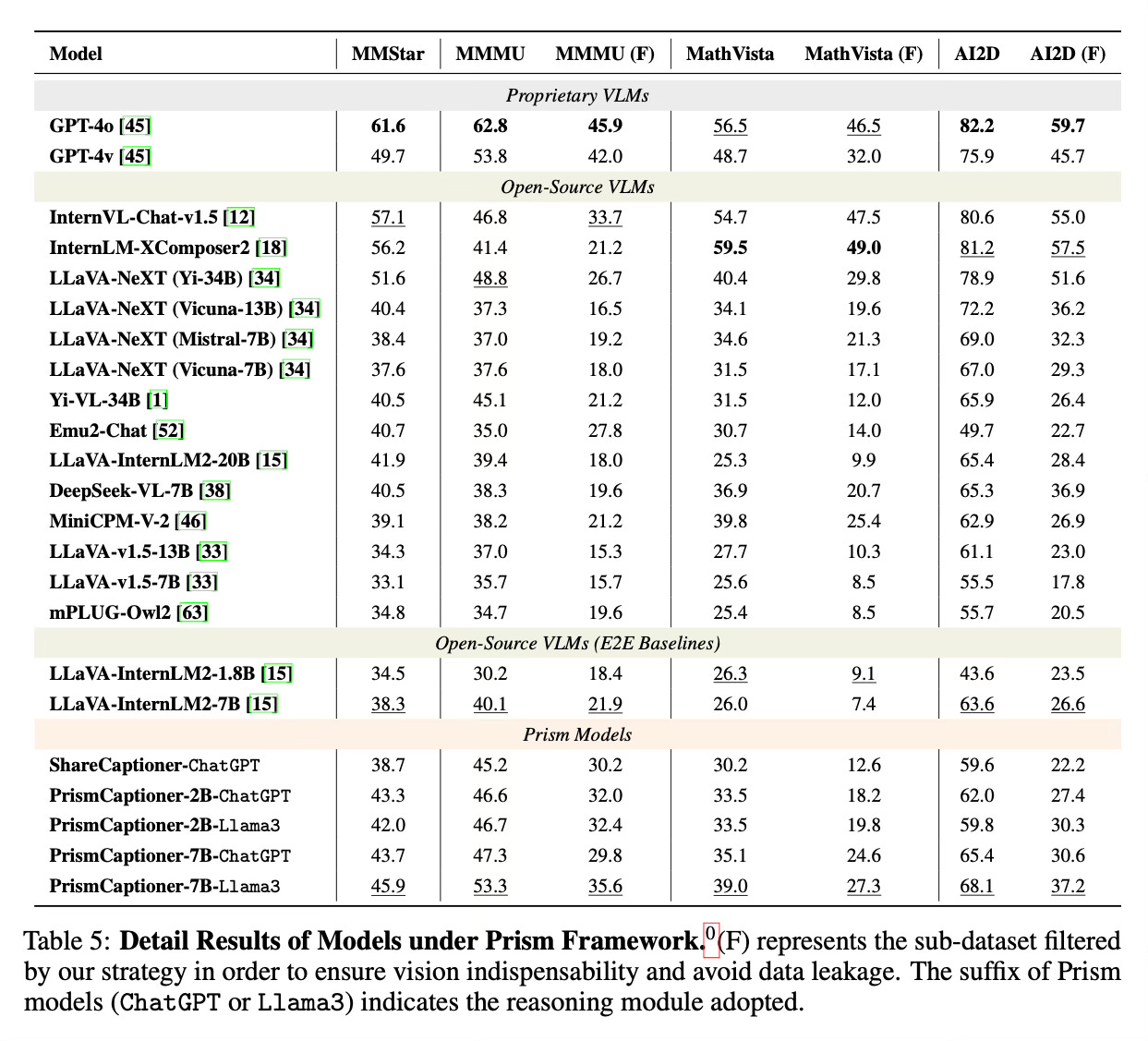

When used as a vision-language solver:

Prism with a 2B LLaVA-based captioner and GPT-3.5 outperforms many larger open-source VLMs on multimodal benchmarks

Performance gains are particularly pronounced for reasoning-related visual questions

Prism achieves results competitive with VLMs 10x larger on the MMStar benchmark

Conclusion

Prism provides a valuable framework for systematically evaluating and comparing VLM capabilities by decoupling perception and reasoning. It offers insights to guide model optimization and demonstrates potential as an efficient approach for vision-language tasks by combining lightweight perception models with powerful reasoning engines. For more information, please consult the full paper.

Congrats to the authors for their work!

Qiao, Yuxuan, et al. "Prism: A Framework for Decoupling and Assessing the Capabilities of VLMs." arXiv preprint arXiv:2406.14544 (2024).

Prism is a framework designed to address the intertwined challenges of perception and reasoning in visual problem solving. By separating perception and reasoning into two distinct stages, Prism enables a systematic comparison of proprietary and open-source VLMs in terms of their perception and reasoning capabilities. By combining a streamlined VLM focused on perception with a powerful LLM for reasoning, Prism achieves strong results on general vision-language tasks while significantly reducing training and operational costs.