Scalable MatMul-free Language Modeling

Today's paper introduces a novel approach to language modeling that completely eliminates matrix multiplication (MatMul) operations while maintaining strong performance. The authors demonstrate that their MatMul-free language model can achieve comparable results to state-of-the-art Transformers up to at least 2.7 billion parameters, while significantly reducing memory usage and computational costs.

Task Definition

The paper aims to create a large language model that can perform competitively with state-of-the-art Transformer models without using any matrix multiplication operations. This task involves redesigning the core components of language models to use only simpler operations like addition and element-wise multiplication, while still maintaining high performance on language tasks.

Method Overview

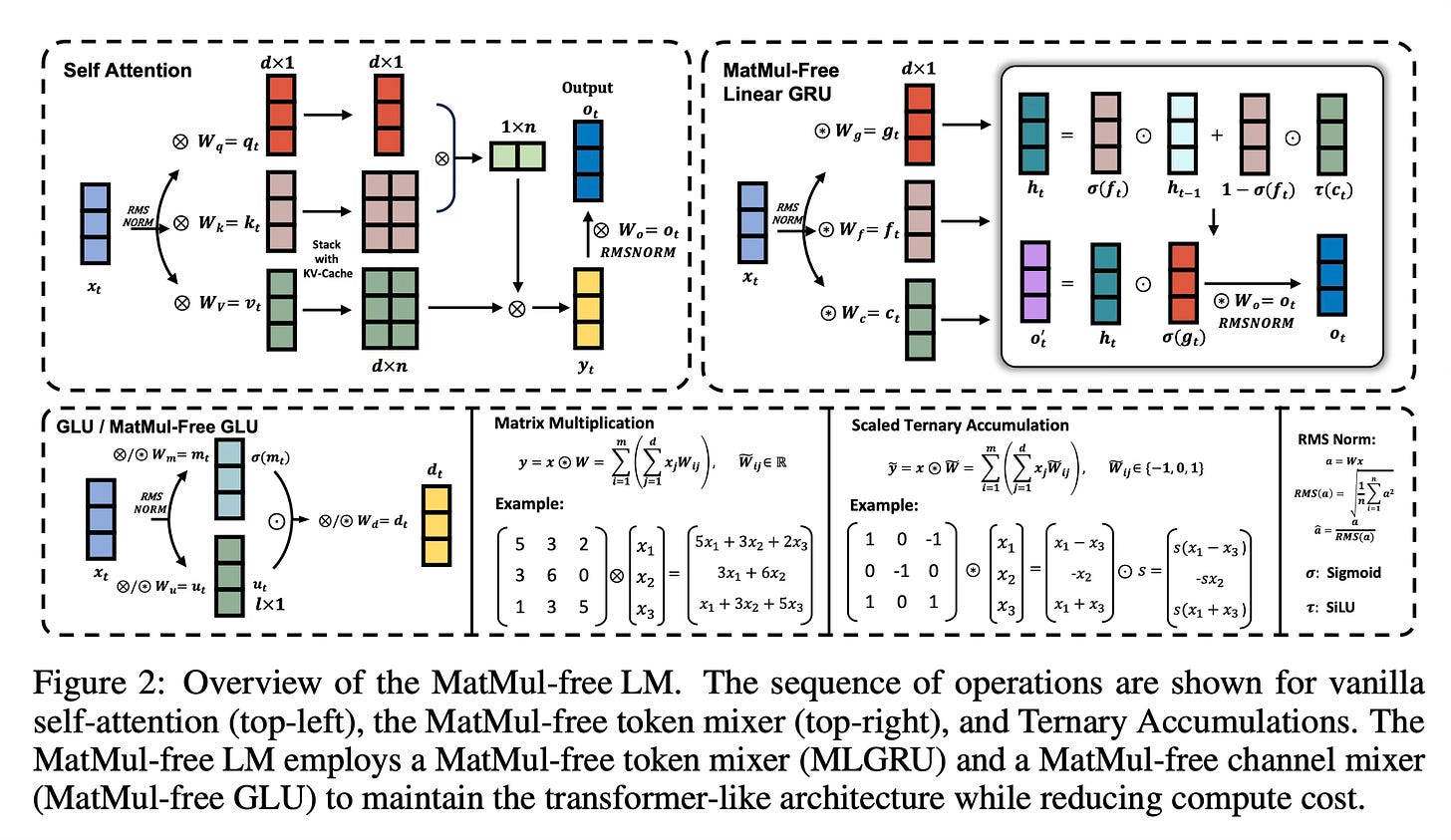

The architecture consists of two main components, a MatMul-free token mixer and a MatMul-free channel mixer, both built on dense layers with ternary weights.

For the dense layers, they use BitLinear modules with ternary weights (values limited to -1, 0, and 1). This allows matrix multiplications to be replaced with simple addition and subtraction operations. To improve efficiency, they introduce a fused BitLinear layer that combines normalization and quantization steps to reduce memory access.
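To make the "additions instead of multiplications" idea concrete, here is a minimal pure-Python sketch. It assumes absmean scaling for the weight quantization (scale by the mean absolute weight, round, clip to -1, 0, 1). The function names `ternarize` and `ternary_linear` are illustrative and not taken from the authors' code. The sketch leaves out activation quantization and the fused normalization step.

```python
def ternarize(weights, eps=1e-5):
    """Quantize a weight matrix to {-1, 0, 1} (assumed absmean scheme)."""
    flat = [abs(w) for row in weights for w in row]
    scale = sum(flat) / len(flat) + eps
    ternary = [[max(-1, min(1, round(w / scale))) for w in row]
               for row in weights]
    return ternary, scale


def ternary_linear(x, ternary):
    """Compute x @ T for ternary T using only additions and subtractions."""
    out = [0.0] * len(ternary[0])
    for xi, row in zip(x, ternary):
        for j, t in enumerate(row):
            if t == 1:
                out[j] += xi      # weight +1: add the input
            elif t == -1:
                out[j] -= xi      # weight -1: subtract the input
            # weight 0: skip entirely
    return out


weights = [[0.9, -0.8], [0.05, 0.7], [-1.1, 0.02]]
ternary, scale = ternarize(weights)
print(ternary)                                   # [[1, -1], [0, 1], [-1, 0]]
print(ternary_linear([1.0, 2.0, 3.0], ternary))  # [-2.0, 1.0]
```

Multiplying the output by `scale` would bring it back to roughly the range of the full-precision layer; that single rescale is element-wise, not a matrix multiplication.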

The token mixer, responsible for capturing sequential dependencies, is implemented using a modified version of the Gated Recurrent Unit (GRU) called the MatMul-free Linear Gated Recurrent Unit (MLGRU). This adaptation removes hidden-state related weights and replaces remaining weight matrices with ternary weights, eliminating all MatMul operations while preserving essential gating mechanisms.
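A single recurrent step of such a token mixer might look like the sketch below. It reflects my reading of the MLGRU formulation: a sigmoid forget gate, a SiLU candidate state, an element-wise state update, and a sigmoid output gate, with every projection applied to the input only. Biases are omitted, and all names (`mlgru_step`, `W_f`, `W_c`, `W_g`, `W_o`) are illustrative assumptions rather than the authors' implementation.

```python
import math


def ternary_matvec(x, T):
    """x @ T for T with entries in {-1, 0, 1}, using only add/subtract."""
    out = [0.0] * len(T[0])
    for xi, row in zip(x, T):
        for j, t in enumerate(row):
            if t == 1:
                out[j] += xi
            elif t == -1:
                out[j] -= xi
    return out


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def silu(v):
    return v * sigmoid(v)


def mlgru_step(x, h_prev, W_f, W_c, W_g, W_o):
    """One time step; note that h_prev is never multiplied by a weight matrix."""
    f = [sigmoid(v) for v in ternary_matvec(x, W_f)]   # forget gate
    c = [silu(v) for v in ternary_matvec(x, W_c)]      # candidate state
    # Element-wise interpolation between the old state and the candidate.
    h = [fi * hp + (1.0 - fi) * ci for fi, hp, ci in zip(f, h_prev, c)]
    g = [sigmoid(v) for v in ternary_matvec(x, W_g)]   # output gate
    o = ternary_matvec([gi * hi for gi, hi in zip(g, h)], W_o)
    return o, h


zeros = [[0, 0], [0, 0]]
identity = [[1, 0], [0, 1]]
o, h = mlgru_step([1.0, 2.0], [4.0, -2.0], zeros, zeros, zeros, identity)
print(h)  # [2.0, -1.0]
print(o)  # [1.0, -0.5]
```

With all-zero gate weights, both gates sit at 0.5 and the candidate is 0, so the state is simply halved. The only operations touching the hidden state are element-wise products and sums.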

For the channel mixer, which integrates information across embedding dimensions, they use a Gated Linear Unit (GLU) adapted to work with BitLinear layers. This allows for efficient mixing of information without relying on matrix multiplications.
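A rough sketch of a GLU-style channel mixer on top of ternary projections is shown below. It assumes a SiLU-gated form (gate projection, up projection, element-wise product, down projection). The names `glu_channel_mixer`, `W_g`, `W_u`, and `W_d` are hypothetical, and scaling factors and normalization are left out.

```python
import math


def ternary_matvec(x, T):
    """x @ T for T with entries in {-1, 0, 1}, using only add/subtract."""
    out = [0.0] * len(T[0])
    for xi, row in zip(x, T):
        for j, t in enumerate(row):
            if t == 1:
                out[j] += xi
            elif t == -1:
                out[j] -= xi
    return out


def silu(v):
    return v / (1.0 + math.exp(-v))


def glu_channel_mixer(x, W_g, W_u, W_d):
    """Gate and up projections, element-wise gating, then a down projection."""
    g = ternary_matvec(x, W_g)                     # gate branch
    u = ternary_matvec(x, W_u)                     # up branch
    p = [silu(gi) * ui for gi, ui in zip(g, u)]    # element-wise product
    return ternary_matvec(p, W_d)                  # mix back down


W_g = [[1, 0], [0, 1]]
W_u = [[1, 1], [0, -1]]
W_d = [[1], [1]]
print(glu_channel_mixer([1.0, 2.0], W_g, W_u, W_d))
```

The three projections are ternary, so they reduce to additions and subtractions; the gating itself is an element-wise product.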

The training process uses surrogate gradients to backpropagate through the non-differentiable quantization functions, and it uses larger learning rates than usual, because small updates to the underlying full-precision weights often leave the ternary values unchanged. The authors also build a custom FPGA accelerator to exploit the efficiency gains of their MatMul-free approach.
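One common surrogate for quantization steps is the straight-through estimator (STE): the forward pass uses the quantized weights, while the backward pass pretends the quantizer is the identity and updates a full-precision "latent" copy. The toy sketch below assumes that setup for a single linear neuron with a squared-error loss. The function `ste_update` is a hypothetical illustration, not the authors' training code.

```python
def ste_update(latent, x, target, lr, eps=1e-5):
    """One SGD step on latent weights, with a ternary forward pass (STE)."""
    scale = sum(abs(w) for w in latent) / len(latent) + eps
    ternary = [max(-1, min(1, round(w / scale))) for w in latent]
    # Forward pass: the prediction uses the quantized (rescaled) weights.
    y = scale * sum(t * xi for t, xi in zip(ternary, x))
    err = y - target  # derivative of 0.5 * (y - target)**2 with respect to y
    # Backward pass (STE): treat quantization as identity, so the gradient
    # for latent weight i is approximated by err * x[i].
    new_latent = [w - lr * err * xi for w, xi in zip(latent, x)]
    return new_latent, ternary


latent = [0.4, -0.1]
x, target = [1.0, 1.0], 1.0

small, tern0 = ste_update(latent, x, target, lr=0.1)
large, _ = ste_update(latent, x, target, lr=1.0)
print(tern0)                               # [1, 0]
print(ste_update(small, x, target, 0.1)[1])  # [1, 0]
print(ste_update(large, x, target, 0.1)[1])  # [1, 1]
```

In this toy case, the step with `lr=0.1` moves the latent weights but leaves the ternary values at `[1, 0]`, while the step with `lr=1.0` flips the second weight to 1. That is the intuition behind preferring larger learning rates for ternary weights.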

Results

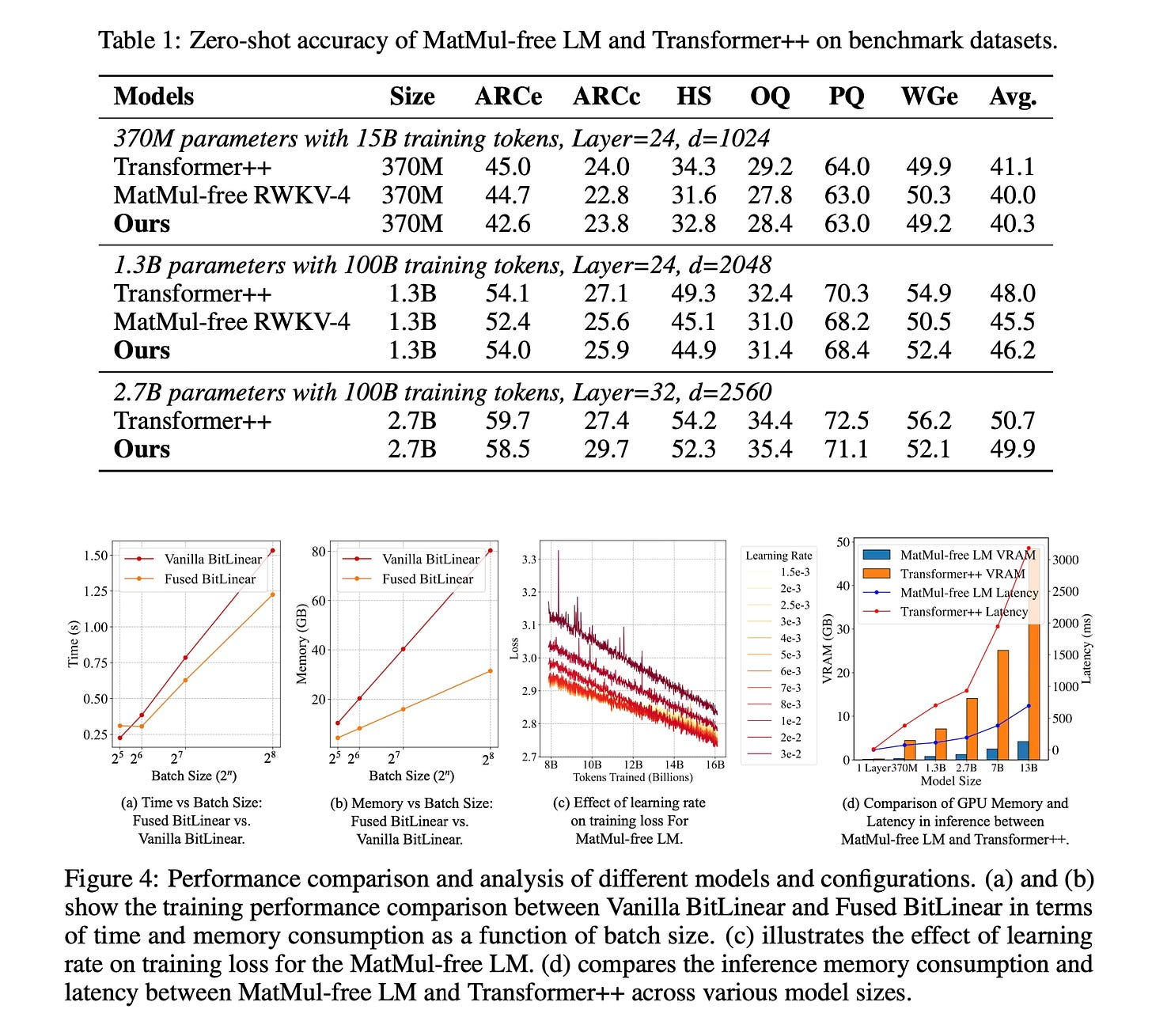

The MatMul-free language model achieves competitive performance with Transformer models across various model sizes (370M, 1.3B, and 2.7B parameters) on zero-shot language tasks.

The proposed architecture demonstrates significant efficiency gains in both training and inference. During training, the fused BitLinear implementation shows up to a 25.6% speedup and a 61.0% reduction in memory usage compared to a vanilla implementation. In inference, the MatMul-free model consistently demonstrates lower memory usage and latency across all model sizes. At 13B parameters, the largest size in the inference comparison, it uses only 4.19 GB of GPU memory compared to 48.50 GB for an equivalent Transformer model.

Conclusion

This paper demonstrates that it is possible to build high-performing language models without relying on matrix multiplication operations. The proposed MatMul-free language model achieves comparable performance to state-of-the-art Transformers while significantly reducing computational costs and memory usage. This approach opens up new possibilities for efficient language model deployment on various hardware platforms and is a step towards more sustainable and accessible AI technologies. For more information, please consult the full paper.

Congrats to the authors for their work!

Zhu, Rui-Jie, et al. "Scalable MatMul-free Language Modeling." arXiv preprint arXiv:2406.02528 (2024).