Self-Tuning: Instructing LLMs to Effectively Acquire New Knowledge through Self-Teaching

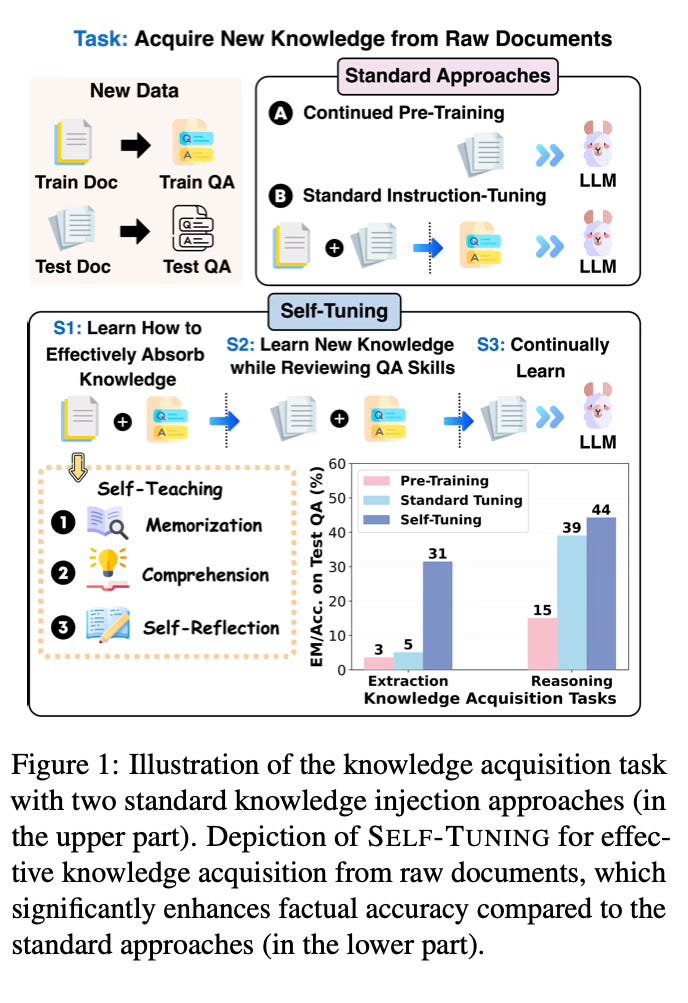

Today's paper introduces SELF-TUNING, a framework that improves the ability of large language models (LLMs) to acquire new knowledge from raw documents through self-teaching. Inspired by the Feynman Technique for efficient human learning, it targets three aspects of knowledge acquisition: memorization, comprehension, and self-reflection.

Method Overview

SELF-TUNING is designed to acquire new knowledge from raw documents, starting from a pre-trained model such as LLaMA 2. It consists of three main stages:

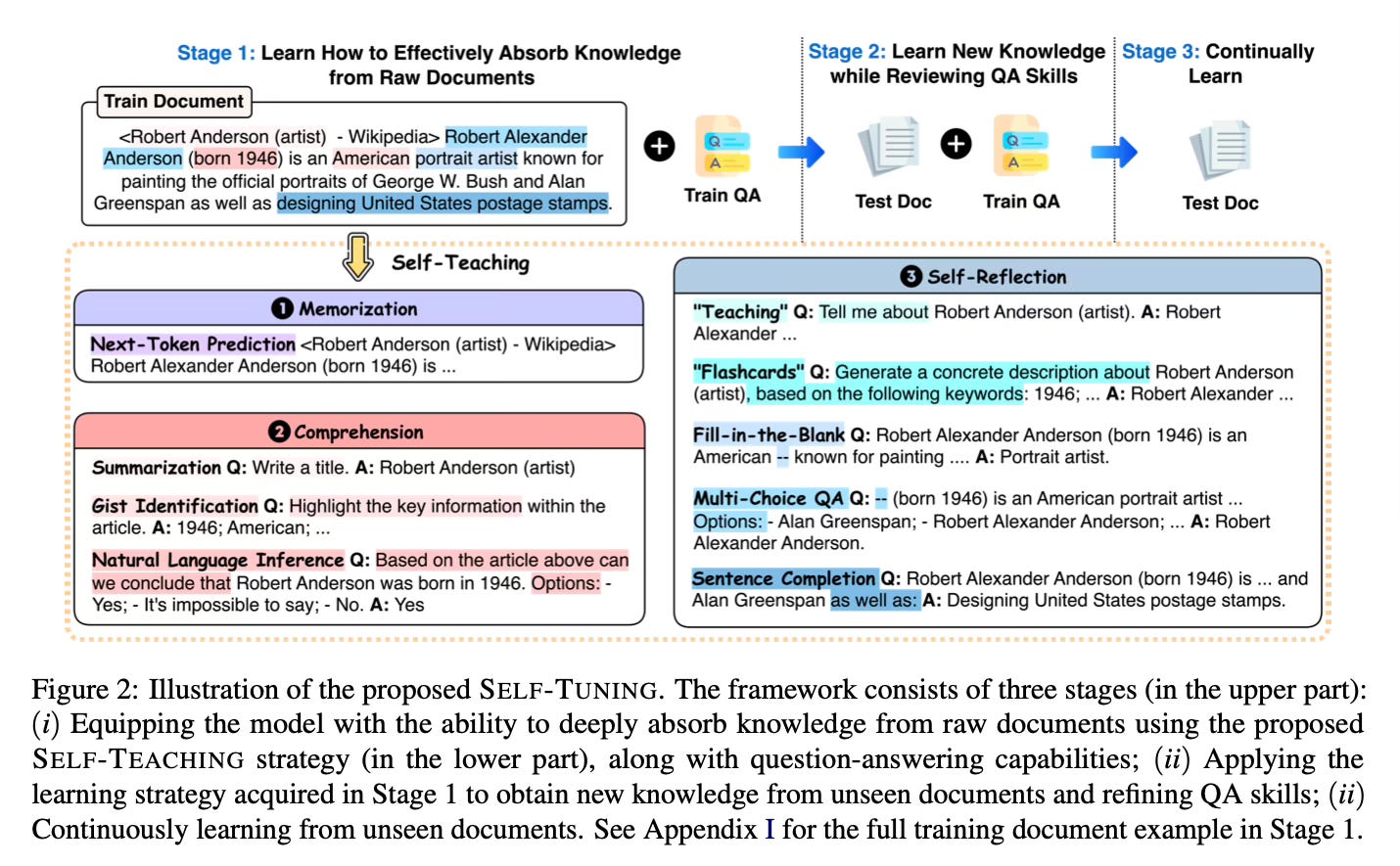

Learn how to effectively absorb knowledge from raw documents:

The model is trained on a mix of training documents, associated QA data, and self-teaching tasks.

Self-teaching tasks are generated in a self-supervised manner based on document contents.

Tasks cover memorization (plain text), comprehension (summarization, key info highlighting, natural language inference), and self-reflection ("teaching", flashcards, fill-in-the-blank, multi-choice QA, sentence completion).

Learn new knowledge while reviewing QA skills:

The model applies the learned strategy to spontaneously extract knowledge from unseen test documents.

Training also includes review of QA data to refine question-answering ability.

Continually learn:

Follow-up training on test documents ensures thorough absorption of new knowledge.
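To make the data flow of the three stages concrete, here is a minimal sketch of which data each stage trains on, following the description above. The `Corpus` class, its field names, and `stage_datasets` are hypothetical names chosen for illustration; the paper's actual data mixing, ordering, and sampling ratios are not covered in this summary and may differ.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Corpus:
    """Hypothetical container for the data pools mentioned in the text."""
    train_docs: List[str]            # raw training documents
    train_qa: List[str]              # QA data associated with the training documents
    test_docs: List[str]             # unseen documents carrying the new knowledge
    train_self_teaching: List[str] = field(default_factory=list)  # self-teaching tasks


def stage_datasets(corpus: Corpus) -> Dict[str, List[str]]:
    """Return the training examples used in each stage (assumed plain concatenation)."""
    return {
        # Stage 1: learn how to absorb knowledge from raw documents.
        "stage1": corpus.train_docs + corpus.train_qa + corpus.train_self_teaching,
        # Stage 2: learn new knowledge from test documents while reviewing QA skills.
        "stage2": corpus.test_docs + corpus.train_qa,
        # Stage 3: continue training on the test documents only.
        "stage3": list(corpus.test_docs),
    }


if __name__ == "__main__":
    toy = Corpus(
        train_docs=["doc A", "doc B"],
        train_qa=["Q: ... A: ..."],
        test_docs=["new doc"],
        train_self_teaching=["summarize doc A", "fill in the blank for doc B"],
    )
    for name, examples in stage_datasets(toy).items():
        print(name, len(examples))
```

Note that in this sketch the test documents never appear together with QA pairs about their own content; stage 2 only reuses the QA data from the training documents.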

The core component is the SELF-TEACHING strategy, which presents documents as plain text for memorization, along with various knowledge-intensive tasks for comprehension and self-reflection. This approach does not require any specific mining patterns, making it applicable to any raw text.
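As an illustration of what "self-supervised" task generation from raw text can look like, the sketch below builds a memorization example plus two of the self-reflection tasks (fill-in-the-blank and sentence completion) using only the document itself. The heuristics here (blanking the longest word, cutting each sentence in half) and all function names are assumptions made for this example, not the authors' construction procedure.

```python
import re
from typing import Dict, List

WORD_RE = re.compile(r"[A-Za-z0-9']+")


def split_sentences(doc: str) -> List[str]:
    """Naive sentence splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", doc.strip()) if s]


def fill_in_the_blank(sentence: str) -> Dict[str, str]:
    """Blank out the first longest word (an assumed heuristic for a 'key' term)."""
    target = max(WORD_RE.findall(sentence), key=len)
    return {
        "task": "fill_in_the_blank",
        "prompt": sentence.replace(target, "____", 1),
        "answer": target,
    }


def sentence_completion(sentence: str) -> Dict[str, str]:
    """Show the first half of the sentence and ask for the rest."""
    tokens = sentence.split()
    cut = len(tokens) // 2
    return {
        "task": "sentence_completion",
        "prompt": " ".join(tokens[:cut]),
        "answer": " ".join(tokens[cut:]),
    }


def build_self_teaching_examples(doc: str) -> List[Dict[str, str]]:
    """Create examples from raw text alone, with no external labels."""
    # Memorization: the document itself, presented as plain text.
    examples = [{"task": "memorization", "prompt": "", "answer": doc}]
    for sentence in split_sentences(doc):
        # Skip fragments too short to yield a meaningful task.
        if len(sentence.split()) < 4 or not WORD_RE.findall(sentence):
            continue
        examples.append(fill_in_the_blank(sentence))
        examples.append(sentence_completion(sentence))
    return examples


if __name__ == "__main__":
    text = "SELF-TUNING was introduced in 2024. It trains models on raw documents."
    for example in build_self_teaching_examples(text):
        print(example)
```

Because every prompt and answer is derived from the text, the same procedure can be applied to any raw document, which matches the point that no specific mining patterns are needed.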

Results

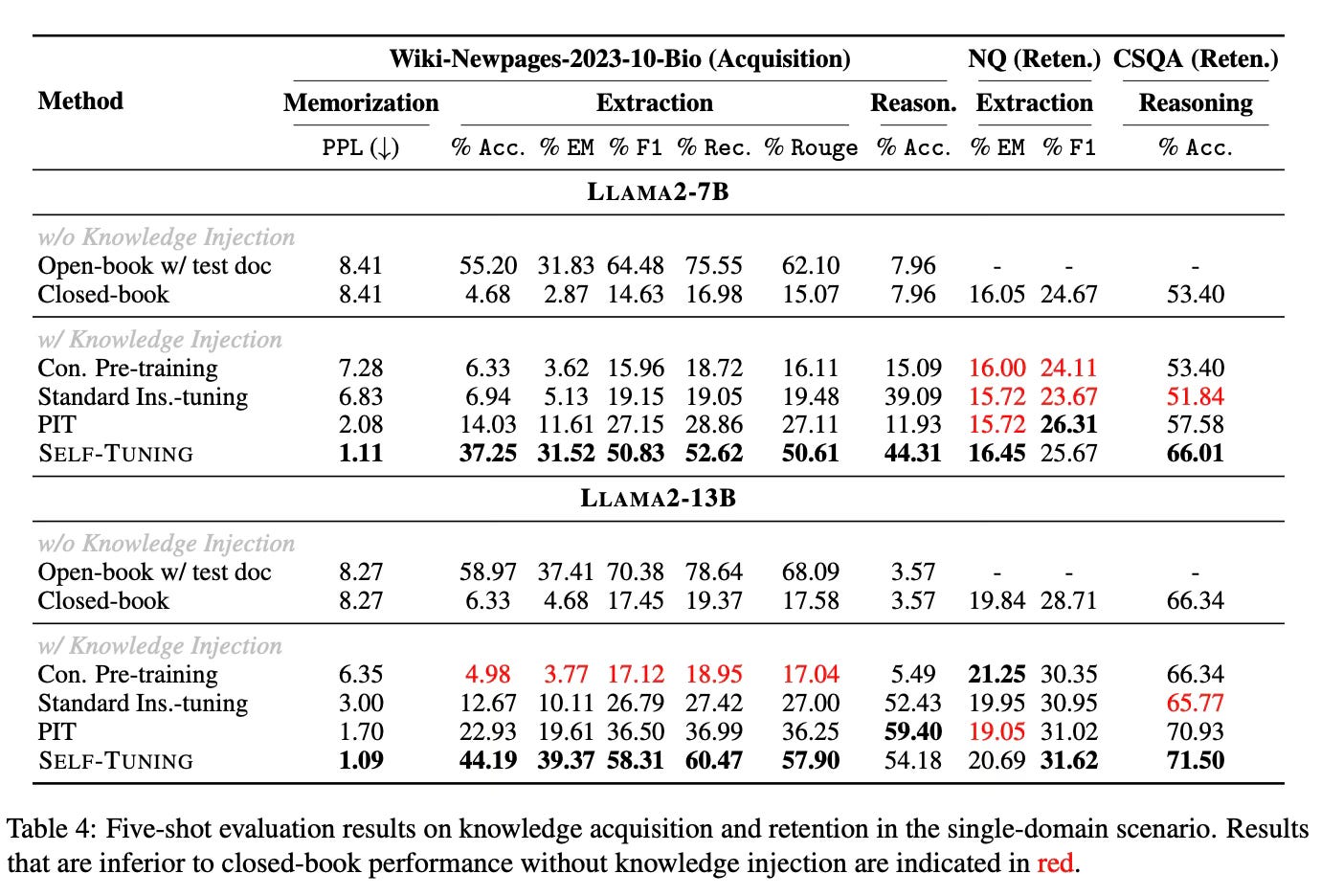

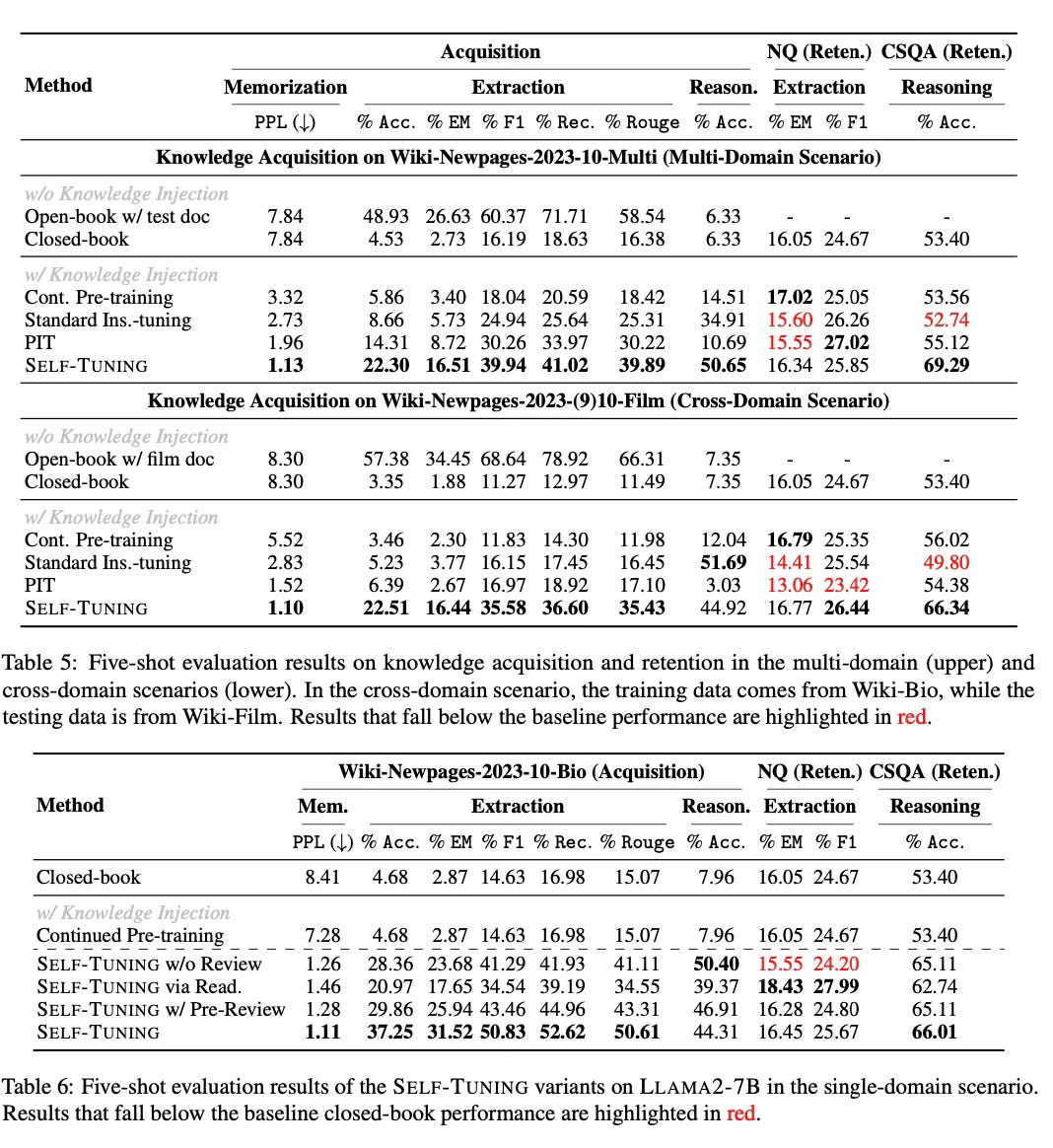

SELF-TUNING significantly outperforms other methods across three knowledge acquisition tasks:

Memorization: Reduces perplexity to nearly 1 on test documents.

Extraction: Increases exact match scores by ~20% on open-ended generation tasks.

Reasoning: Achieves high accuracy on natural language inference tasks.
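For readers less familiar with these metrics, the sketch below shows the standard definitions of perplexity and exact match. Perplexity is the exponential of the average negative log-likelihood per token, so a value near 1 means the model assigns probability close to 1 to each token of the document. The answer normalization used here (lowercasing, stripping punctuation and English articles) is a common SQuAD-style convention and is an assumption; the paper's exact evaluation script may differ.

```python
import math
import re
import string
from typing import List


def perplexity(token_logprobs: List[float]) -> float:
    """exp of the mean negative log-likelihood (natural log) over tokens."""
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


def normalize(text: str) -> str:
    """Assumed normalization: lowercase, drop punctuation and articles, squeeze spaces."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, gold: str) -> bool:
    """True when prediction and gold answer are identical after normalization."""
    return normalize(prediction) == normalize(gold)


if __name__ == "__main__":
    # A model that is certain of every token has perplexity exactly 1.
    print(perplexity([0.0, 0.0, 0.0]))
    # A model that gives each token probability 0.5 has perplexity of about 2.
    print(perplexity([math.log(0.5)] * 4))
    print(exact_match("The Eiffel Tower.", "eiffel tower"))
```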

SELF-TUNING also performs well on knowledge retention, showing slight improvements on previously learned world knowledge and commonsense reasoning. It performs consistently well in multi-domain and cross-domain scenarios, which suggests potential for real-world applications.

Conclusion

SELF-TUNING provides a promising approach for enhancing LLMs' ability to acquire new knowledge from raw documents. By combining memorization, comprehension, and self-reflection, it outperforms the compared methods across the knowledge acquisition tasks while retaining previously acquired knowledge. For more information, please consult the full paper.

Congrats to the authors for their work!

Zhang, Xiaoying, et al. "SELF-TUNING: Instructing LLMs to Effectively Acquire New Knowledge through Self-Teaching." arXiv preprint arXiv:2406.06326 (2024).