VASA-1: Lifelike Audio-Driven Talking Faces Generated in Real Time

Today’s paper introduces VASA-1, a framework for generating highly realistic and lifelike talking face videos from a single static image and an audio clip. The generated videos exhibit precise lip synchronization with the audio, expressive facial dynamics, and natural head movements.

Method Overview

VASA-1 uses a diffusion-based model that generates holistic facial dynamics (lip motion, expressions, eye movements, etc.) and head movements in a latent face space, conditioned on the input audio and on optional control signals such as gaze direction and emotion offset. It also learns an expressive and disentangled face latent space from videos using a 3D-aided representation. This latent space separates facial dynamics from appearance and identity, which makes generative modeling of the dynamics tractable. The method consists of two main components:

Face Latent Space Learning: Videos are encoded into disentangled latent codes for 3D appearance, identity, head pose and holistic facial dynamics. A decoder then reconstructs faces from these latents.

Facial Dynamics Generation: A diffusion transformer model is trained to generate the facial dynamics and head pose latent codes conditioned on the input audio and control signals like gaze direction. Classifier-free guidance is used during inference.
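
The guidance step mentioned above can be sketched as follows. This is a minimal sketch, assuming a common multi-condition form of classifier-free guidance in which the fully conditioned prediction is pushed away from predictions made with one condition dropped at a time. The function name `cfg_combine`, the condition names, and the scale values are illustrative assumptions, not the paper's actual settings.

```python
import numpy as np

def cfg_combine(pred_full, preds_dropped, scales):
    """Combine denoiser predictions with per-condition guidance scales.

    pred_full:     prediction with every condition present.
    preds_dropped: dict mapping a condition name to the prediction made
                   with that single condition replaced by a null input.
    scales:        dict mapping a condition name to its guidance scale
                   (hypothetical values, chosen only for illustration).
    """
    total = sum(scales.values())
    # Amplify the fully conditioned prediction...
    out = (1.0 + total) * pred_full
    # ...and subtract each "condition dropped" prediction, weighted by its scale.
    for name, scale in scales.items():
        out = out - scale * preds_dropped[name]
    return out

# Toy usage with made-up two-dimensional "latents".
pred_full = np.array([1.0, 2.0])
preds_dropped = {
    "audio": np.array([0.5, 1.0]),  # dropping audio changes the prediction
    "gaze": np.array([1.0, 2.0]),   # dropping gaze changes nothing here
}
scales = {"audio": 0.5, "gaze": 1.0}
guided = cfg_combine(pred_full, preds_dropped, scales)
```

With all scales set to zero the function returns the fully conditioned prediction unchanged, and a condition whose removal does not alter the prediction contributes nothing to the result. Larger scales strengthen adherence to a condition, typically at some cost in diversity.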

At test time, the appearance/identity latents are extracted from the input image. The facial dynamics latents are then generated from the audio and control signals using the diffusion model. Finally, the decoder combines all latents to produce the video frames.
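
The data flow at test time can be illustrated with a toy pipeline. This is only a sketch under stated assumptions: `encode_appearance`, `generate_dynamics`, and `decode_frame` are hypothetical stand-ins for the learned encoder, diffusion model, and decoder, and the array shapes are arbitrary. The point is the structure: appearance is extracted once from the image, while one dynamics latent is produced per output frame.

```python
import numpy as np

rng = np.random.default_rng(0)

def encode_appearance(image):
    # Stand-in encoder: (H, W, 3) image -> small appearance/identity vector.
    return image.mean(axis=(0, 1))

def generate_dynamics(audio_features, dim=4):
    # Stand-in for the diffusion model: one motion latent per audio frame.
    # Here it is plain noise; a real model would condition on the audio.
    n_frames = audio_features.shape[0]
    return rng.standard_normal((n_frames, dim))

def decode_frame(appearance, motion, size=8):
    # Stand-in decoder: combines the static and per-frame latents into an image.
    value = appearance.mean() + motion.mean()
    return np.full((size, size, 3), value)

def talking_face(image, audio_features):
    appearance = encode_appearance(image)        # computed once per video
    motions = generate_dynamics(audio_features)  # one latent per frame
    return np.stack([decode_frame(appearance, m) for m in motions])

image = np.zeros((8, 8, 3))
audio = np.zeros((25, 16))  # 25 frames of hypothetical audio features
video = talking_face(image, audio)
print(video.shape)  # (25, 8, 8, 3)
```

Because the appearance latents do not depend on the audio, they can be reused across clips for the same portrait, and only the dynamics need to be regenerated.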

Results

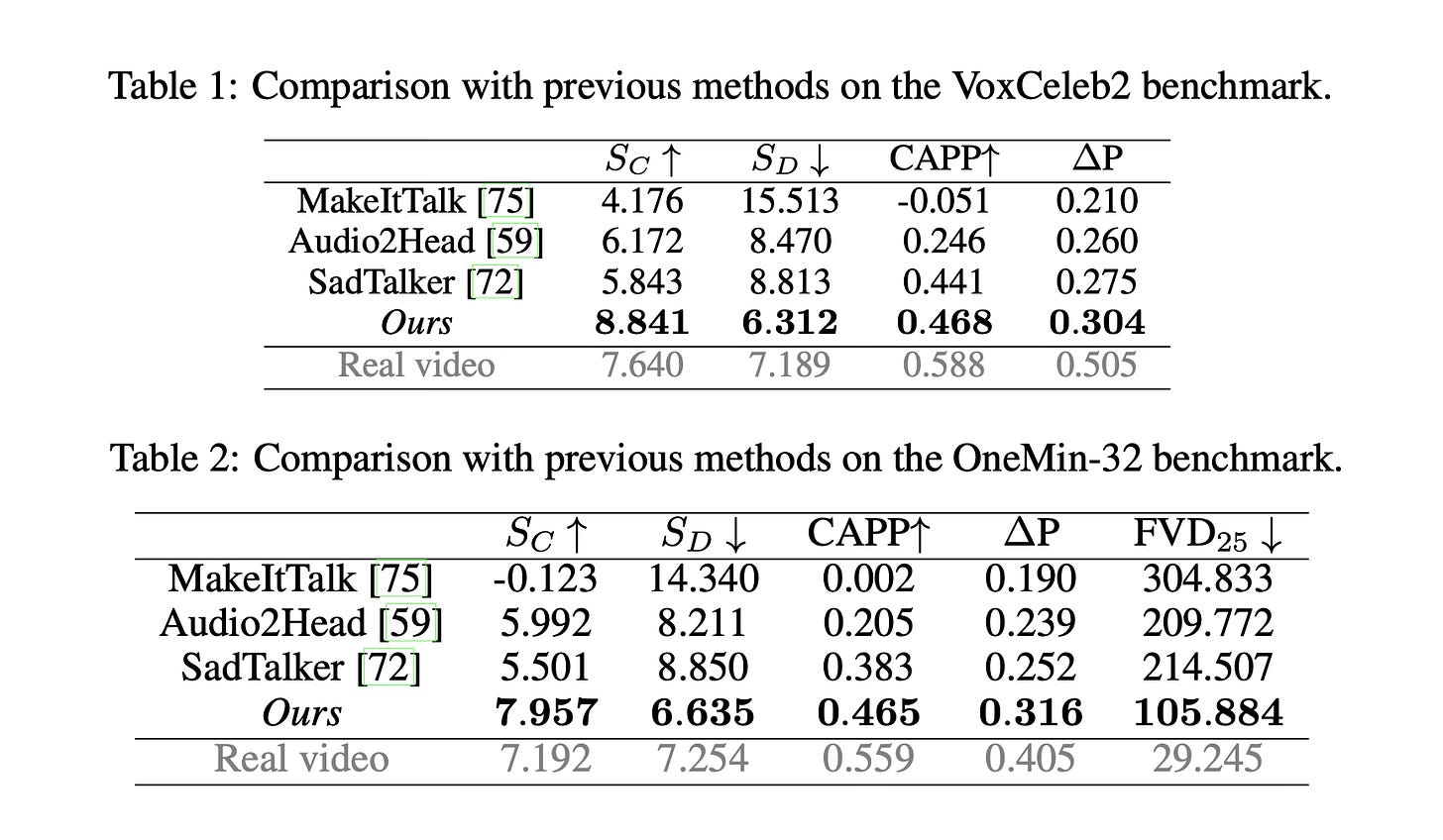

Extensive evaluation shows that VASA-1 significantly outperforms prior methods in audio-lip synchronization, facial expression quality, head motion naturalness, and overall video realism. It can generate high-quality 512×512 videos at up to 40 FPS with negligible starting latency.

Conclusion

VASA-1 sets a new state of the art in audio-driven talking face generation, producing highly lifelike results with appealing visual affective skills. This paves the way for realistic AI avatars in applications such as digital communication, accessibility, and education. For more information, please consult the full paper or the project page.

Congrats to the authors for their work!

Xu, Sicheng, et al. "VASA-1: Lifelike Audio-Driven Talking Faces Generated in Real Time." ArXiv, 16 Apr. 2024, arxiv.org/abs/2404.10667v1.