Video2Game: Real-time, Interactive, Realistic and Browser-Compatible Environment from a Single Video

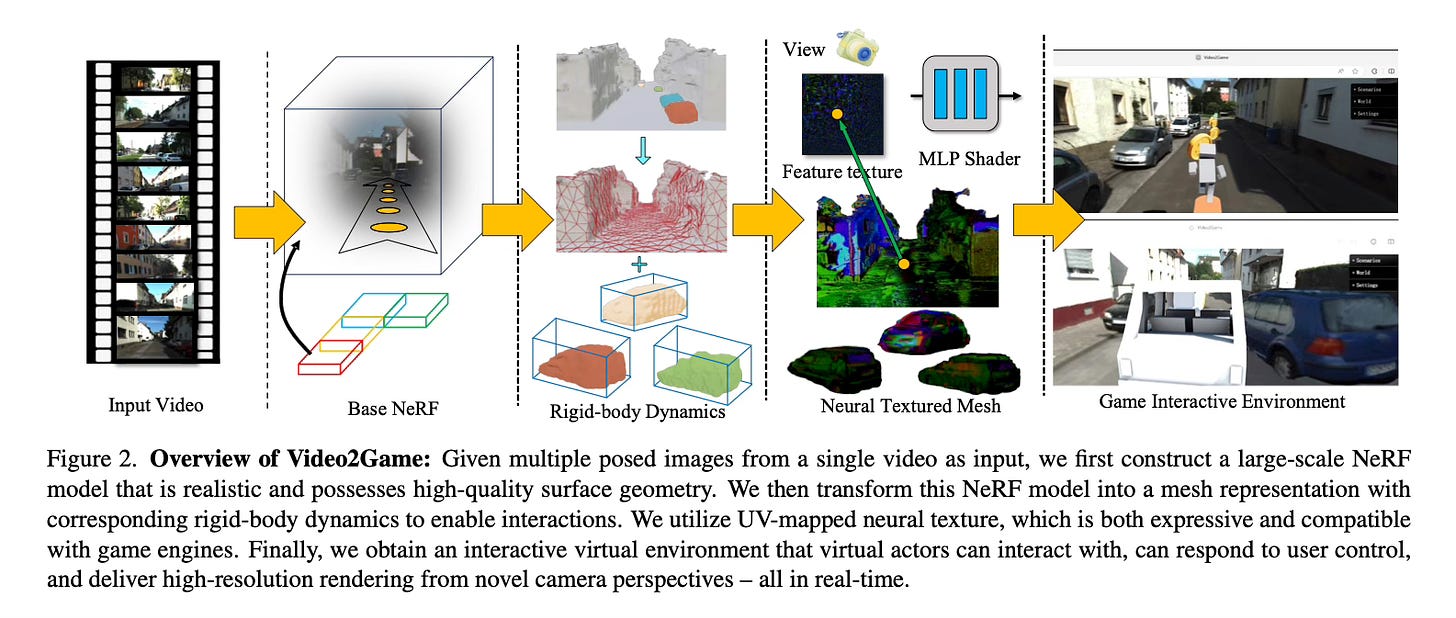

Today’s paper presents Video2Game, a new approach for automatically converting videos of real-world scenes into realistic and interactive game environments. The system uses neural radiance fields (NeRF) to capture scene geometry and appearance, a mesh module for faster rendering, and a physics module to model object interactions and dynamics. Together, these produce an interactive digital replica of the real world.

Method Overview

At the core of Video2Game are three main components:

1. A NeRF module captures the scene's geometry and visual appearance. To handle large-scale, unbounded environments, it uses contraction functions, regularizes geometry with monocular depth and normal cues, and divides large scenes into blocks.

2. The NeRF representation is then transformed into a mesh with UV-mapped neural textures for faster rendering while maintaining quality. The mesh topology is obtained via marching cubes on the NeRF density field. A customized shader computes the final color by combining a view-independent base color and view-dependent specular component predicted by a lightweight MLP.

3. To enable physical interactions, the scene is decomposed into individual actionable entities using the NeRF representation. Each entity is equipped with collision geometry, mass, and friction parameters. This allows effective modeling and simulation of rigid body dynamics and interactions between objects.
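Two of the ideas in the list above reduce to short formulas, sketched below in plain Python. This is an illustration under stated assumptions, not the authors' code: the summary does not say which contraction function is used, so the sketch assumes the form popularized by mip-NeRF 360, and it assumes the shader simply adds the specular term to the base color and clamps the result. The function names `contract` and `shade` are hypothetical, and the specular value is passed in directly rather than predicted by an MLP.

```python
import math
from typing import List, Sequence


def contract(x: Sequence[float]) -> List[float]:
    """Assumed mip-NeRF 360 style contraction.

    Points inside the unit ball are left unchanged; points outside are
    squashed so that all of unbounded space fits inside a ball of radius 2.
    """
    norm = math.sqrt(sum(c * c for c in x))
    if norm <= 1.0:
        return list(x)
    scale = (2.0 - 1.0 / norm) / norm
    return [c * scale for c in x]


def shade(base_color: Sequence[float], specular: Sequence[float]) -> List[float]:
    """Assumed combination rule: base + specular, clamped to [0, 1] per channel.

    base_color is view-independent; specular stands in for the
    view-dependent output of the lightweight MLP.
    """
    return [min(1.0, max(0.0, b + s)) for b, s in zip(base_color, specular)]


if __name__ == "__main__":
    print(contract([0.5, 0.0, 0.0]))   # inside the unit ball: unchanged
    print(contract([2.0, 0.0, 0.0]))   # outside: pulled toward radius 2
    print(shade([0.5, 0.5, 0.5], [0.2, 0.6, -0.7]))
```

Under this contraction, arbitrarily distant points land just inside radius 2, which is what lets a bounded grid or hash encoding represent an unbounded outdoor scene.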

The entire pipeline is designed to be compatible with game engines. The resulting assets can be imported into a WebGL-based engine, allowing users to interact with the virtual environment in real-time through their browser.
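To make the physics component and the engine hand-off more concrete, here is a minimal sketch of how one actionable entity might be described and serialized for a browser-side engine. Everything in it is an assumption for illustration: the `RigidEntity` class, its field names, the box collider, and the JSON layout are hypothetical and are not the project's actual asset format. The helper `sliding_distance` uses the standard Coulomb friction result for a body sliding on a flat surface.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Tuple

GRAVITY = 9.81  # m/s^2, standard gravity


@dataclass
class RigidEntity:
    """Hypothetical record for one actionable object in the scene."""
    name: str
    mass_kg: float
    friction: float                               # Coulomb friction coefficient
    box_half_extents: Tuple[float, float, float]  # simple box collider, in metres

    def sliding_distance(self, speed: float) -> float:
        """Distance travelled before friction stops a body sliding at `speed`.

        Uses d = v^2 / (2 * mu * g) for a flat surface.
        """
        return speed ** 2 / (2.0 * self.friction * GRAVITY)


def export_scene(entities: List[RigidEntity]) -> str:
    """Serialize entities to JSON that a web engine could load (assumed layout)."""
    return json.dumps({"entities": [asdict(e) for e in entities]}, indent=2)


if __name__ == "__main__":
    vase = RigidEntity("vase", mass_kg=2.0, friction=0.5,
                       box_half_extents=(0.1, 0.2, 0.1))
    print(export_scene([vase]))
    print(vase.sliding_distance(2.0))
```

In this simplified model the stopping distance depends on friction but not on mass; mass matters once entities collide and exchange momentum, which is the job of the physics engine rather than this sketch.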

Results

Video2Game is tested on both indoor and large-scale outdoor scenes. It achieves realistic real-time rendering and enables the construction of interactive games on top of the reconstructed environments.

Browser-based game demos showcase various interactive features, including free navigation, object manipulation, coin collection, car driving, and collisions. The environments run at over 100 FPS across different hardware setups.

Conclusion

Video2Game presents a novel approach for automatically converting real-world videos into interactive game environments. By combining NeRF scene modeling with physics-based simulation and game engine integration, it enables the creation of compelling 3D content and opens up possibilities for digital game design and robotics applications. For more information, please consult the full paper or the project page.

Code: https://github.com/video2game/video2game

Demo: https://video2game.github.io/src/garden/index.html

Congrats to the authors for their work!

Xia, Hongchi, et al. "Video2Game: Real-time, Interactive, Realistic and Browser-Compatible Environment from a Single Video." arXiv preprint arXiv:2404.09833 (2024).