Aya 23: Open Weight Releases to Further Multilingual Progress

Today's paper introduces Aya 23, a family of multilingual language models covering 23 languages. It builds on the earlier Aya 101 release, but instead of spreading capacity across 101 languages it allocates more model capacity to fewer languages (23) during pre-training to improve per-language performance.

Method Overview

The Aya 23 models are based on Cohere's Command series models, which are pre-trained on data from 23 languages using a standard decoder-only Transformer architecture. Key aspects of the architecture and pre-training setup include:

Parallel attention and feed-forward layers for improved training efficiency

SwiGLU activation function

No bias terms in dense layers for better training stability

Rotary positional embeddings for better long-context extrapolation

BPE tokenizer with a 256k vocabulary, trained on a balanced subset of the pre-training data
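To make the first three items above concrete, here is a minimal pure-Python sketch of a parallel block with a bias-free SwiGLU feed-forward layer, operating on a single token vector. It is an illustration under stated assumptions, not the Command or Aya 23 implementation: the function names, the plain layer normalization, and the stand-in attention callable are all hypothetical and chosen only to show the structure.

```python
import math


def silu(z):
    # SiLU (swish) activation: z * sigmoid(z).
    return z / (1.0 + math.exp(-z))


def matvec(w, x):
    # Bias-free dense layer: each row of w is dotted with x, nothing is added.
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def swiglu_ffn(x, w_gate, w_up, w_down):
    # SwiGLU: down( silu(gate(x)) * up(x) ), with no bias terms anywhere.
    gate = [silu(g) for g in matvec(w_gate, x)]
    up = matvec(w_up, x)
    hidden = [g * u for g, u in zip(gate, up)]
    return matvec(w_down, hidden)


def layer_norm(x, eps=1e-5):
    # Assumption: a plain normalization without learned scale or shift.
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def parallel_block(x, attention, ffn):
    # Parallel formulation: attention and FFN both read the same normalized
    # input, and their outputs are added to the residual in a single step.
    h = layer_norm(x)
    a = attention(h)
    f = ffn(h)
    return [xi + ai + fi for xi, ai, fi in zip(x, a, f)]


# Tiny demo with hypothetical weights and a stand-in for attention.
identity = [[1.0, 0.0], [0.0, 1.0]]
x = [0.5, -1.5]
out = parallel_block(
    x,
    attention=lambda h: [0.1 * v for v in h],  # placeholder, not real attention
    ffn=lambda h: swiglu_ffn(h, identity, identity, identity),
)
print(out)
```

In a sequential block the feed-forward layer would have to wait for the attention output. Here the two branches share one normalized input, so they can be computed side by side, which is where the efficiency gain comes from.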

The pre-trained models are then instruction fine-tuned on a mixture of multilingual data sources:

Multilingual templates from datasets such as xP3, the Data Provenance Collection, and the Aya Collection

Human annotations from native speakers (55k examples across 23 languages)

Translated versions of English instruction datasets like HotpotQA and Flan-CoT (1.1M examples)

Synthetically generated responses using Command R+ for prompts from ShareGPT and Dolly-15k (1.63M examples)

Two model sizes are released: 8B and 35B parameters. Fine-tuning uses a context length of 8192 tokens and runs for 13,200 update steps on TPUv4 chips.

Results

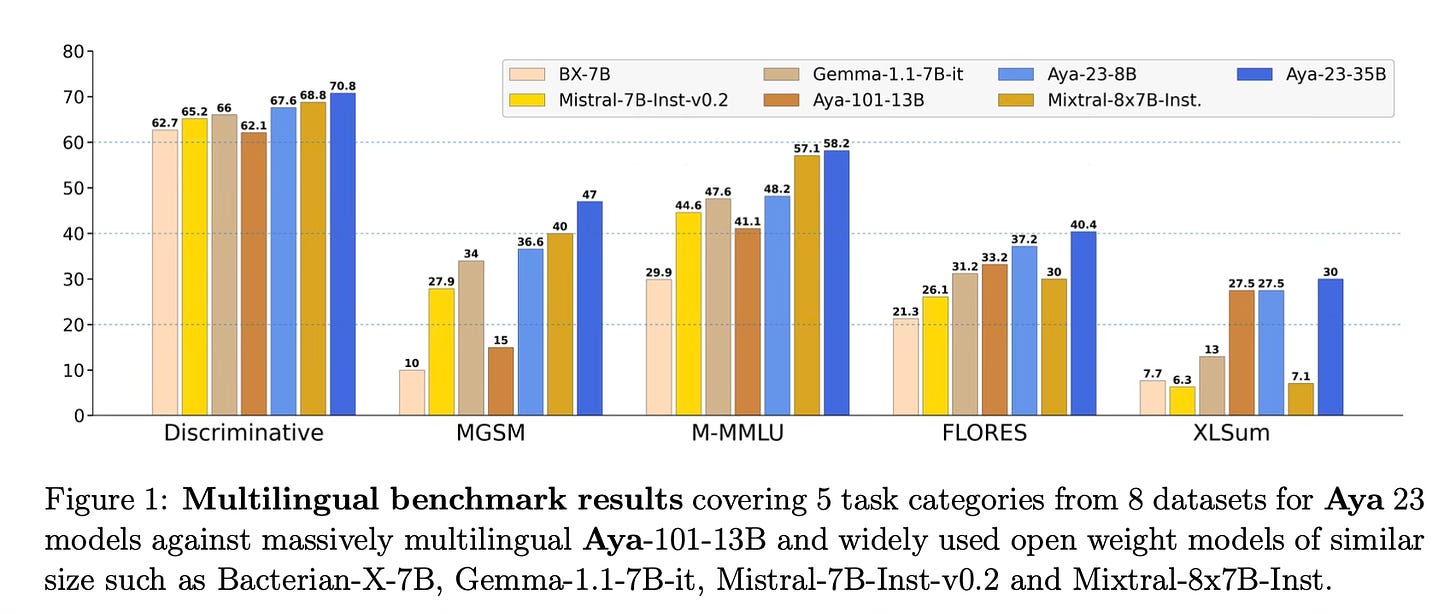

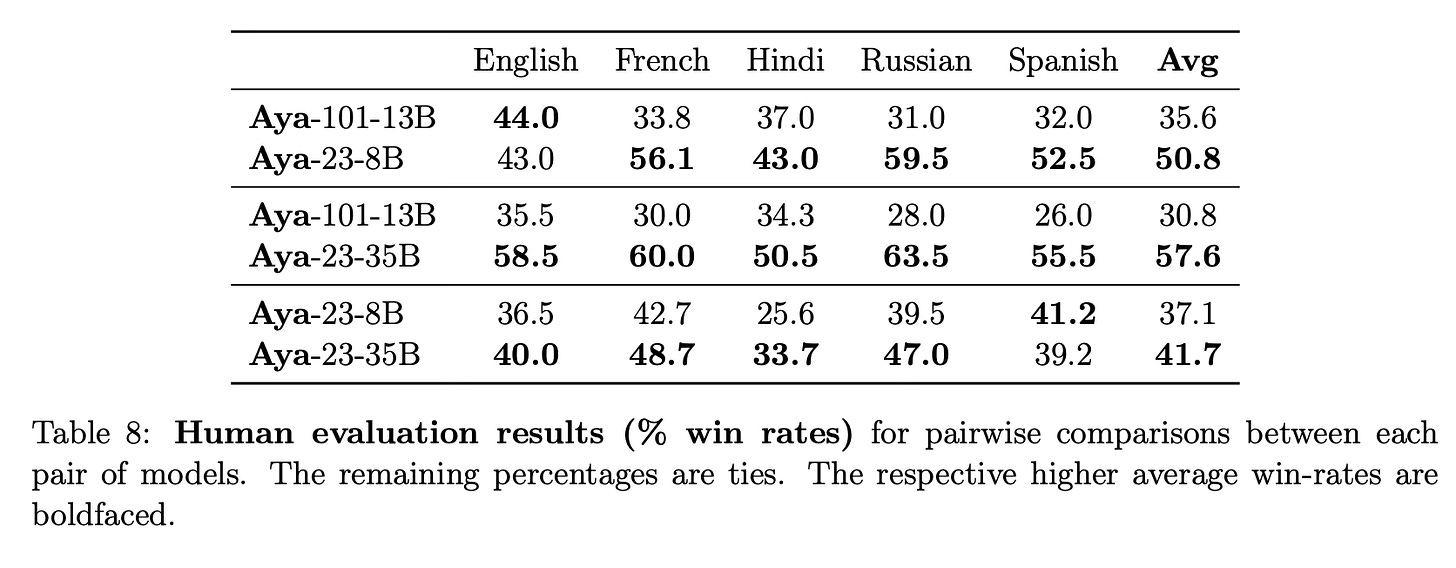

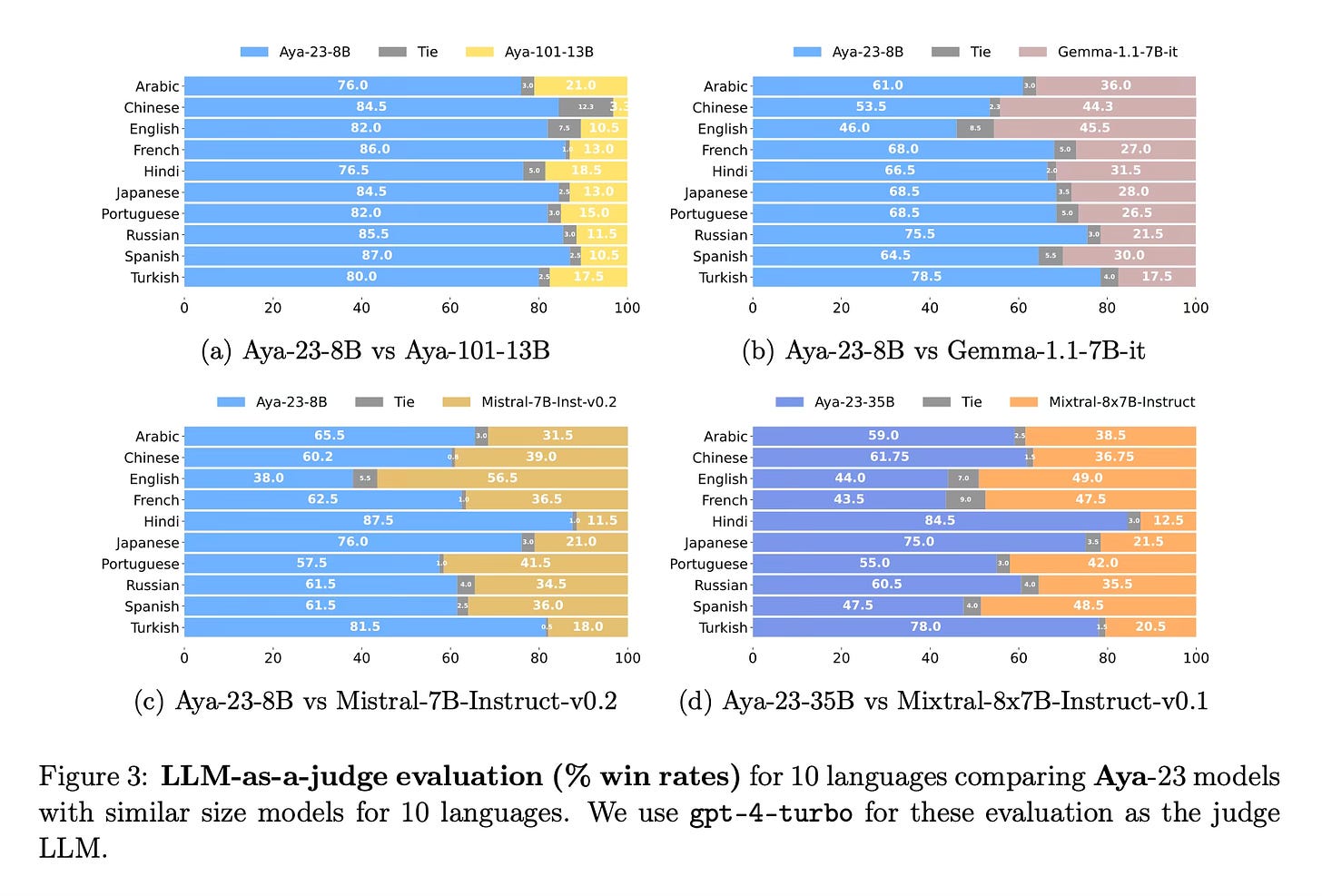

Aya 23 models outperform previous massively multilingual models like Aya 101, as well as widely used models like Gemma, Mistral, and Mixtral, across a comprehensive evaluation. The evaluation covers discriminative tasks (coreference resolution, sentence completion, and language understanding) and generative tasks (translation, summarization, and mathematical reasoning).

Compared to Aya 101, Aya 23 improves on discriminative tasks by up to 14%, on generative tasks by up to 20%, and on multilingual MMLU by up to 41.6%.

Conclusion

The Aya 23 model family demonstrates state-of-the-art multilingual performance across a wide range of tasks for the 23 languages it covers, achieved by allocating more capacity to fewer languages during pre-training. The 8B and 35B model weights are publicly released. For more information, please consult the full paper.

Models: https://huggingface.co/CohereForAI/
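For readers who want to try the released weights, the following is a minimal sketch using the Hugging Face `transformers` library. The repository name `CohereForAI/aya-23-8B` is an assumption based on the organization page linked above, so check the model card for the exact identifier, license, and any access terms. The helper names `build_messages` and `generate` are hypothetical.

```python
# Assumed repository name under the organization linked above.
MODEL_ID = "CohereForAI/aya-23-8B"


def build_messages(prompt):
    # Chat-style input: a single user turn.
    return [{"role": "user", "content": prompt}]


def generate(prompt, model_id=MODEL_ID, max_new_tokens=128):
    # Imported lazily so build_messages works without the heavy dependencies.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)

    # Format the conversation with the model's own chat template.
    inputs = tokenizer.apply_chat_template(
        build_messages(prompt),
        tokenize=True,
        add_generation_prompt=True,
        return_dict=True,
        return_tensors="pt",
    )
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Decode only the newly generated tokens, not the prompt.
    prompt_len = inputs["input_ids"].shape[-1]
    return tokenizer.decode(output_ids[0][prompt_len:], skip_special_tokens=True)


# Example call (downloads the weights, so it is left commented out):
# print(generate("Translate to Turkish: Good morning!"))
```

Loading the 8B model in full precision needs a substantial amount of memory. In practice one would likely pass a reduced-precision dtype or a device map to `from_pretrained`, which this sketch omits for brevity.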

Congrats to the authors for their work!

Citation: Aryabumi, Viraat, et al. "Aya 23: Open Weight Releases to Further Multilingual Progress." Technical Report, Cohere For AI, 2024.