General Agentic Memory Via Deep Research

Today’s paper introduces General Agentic Memory (GAM), a framework designed to enhance how AI agents manage and retrieve long-term context. It proposes a “just-in-time” approach that separates memory into a lightweight summary for quick reference and a complete database for deep research, addressing the information loss common in static memory systems.

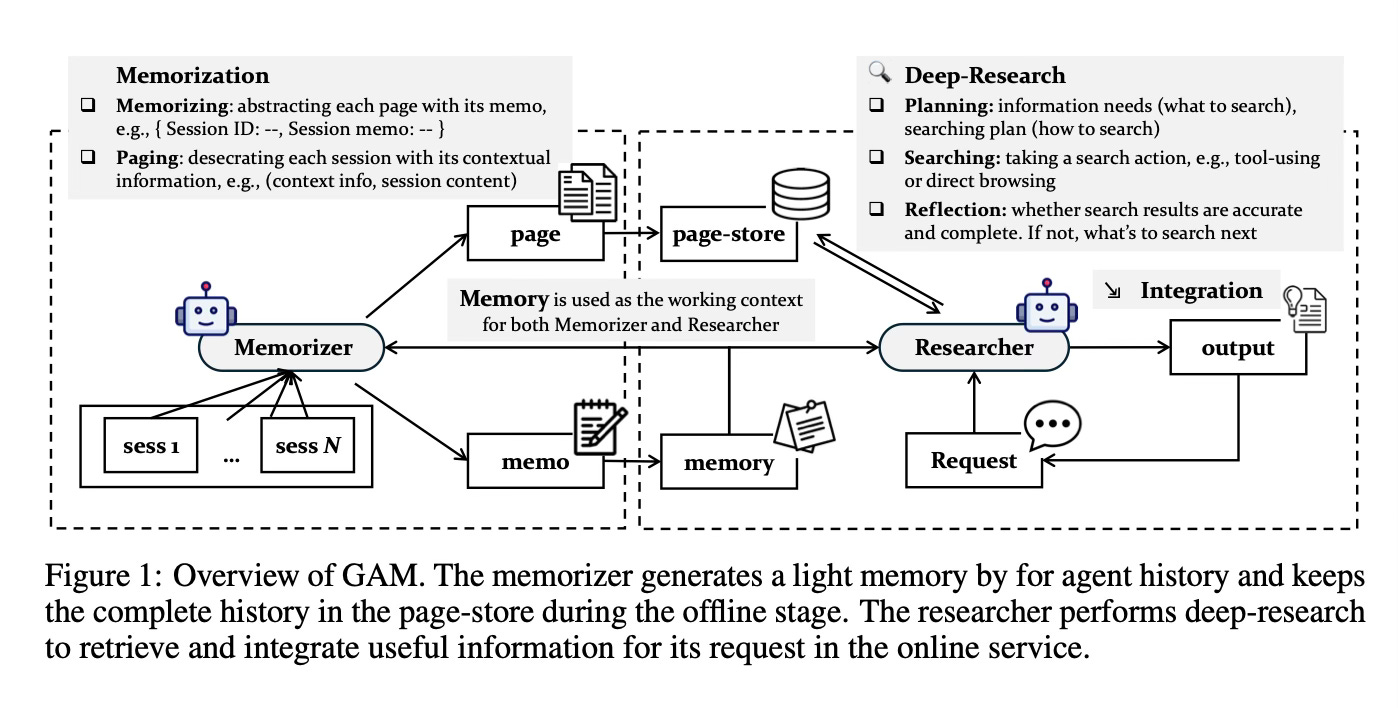

Method Overview

The method employs a dual-agent framework that mimics a library research process. Instead of trying to compress and memorize every single detail in advance, which often leads to data loss, the system maintains a “lightweight” index of events while storing the full, detailed records in a database. When a specific question arises, a specialized “researcher” agent uses the index to figure out exactly where to look in the full records, actively gathers the necessary information, and compiles an answer tailored to that specific moment.

The first component, the Memorizer, processes the agent’s history in the background as new interactions occur. It creates concise memos that highlight key historical points to serve as a high-level map of the context. Simultaneously, it preserves the complete, uncompressed information by organizing the history into “pages” and saving them into a universal page-store. This ensures that while the active memory remains small and efficient, the full fidelity of the history is preserved for future reference.
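To make the split between memos and pages concrete, here is a minimal sketch of the Memorizer's bookkeeping. It is an illustration under stated assumptions, not the paper's implementation: the names `Memory`, `summarize`, and `memorize` are hypothetical, and `summarize` simply truncates the text where the real system would have a language model write the memo.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    """Hypothetical container: short memos plus a lossless page-store."""
    memos: list[str] = field(default_factory=list)
    pages: dict[int, str] = field(default_factory=dict)


def summarize(text: str, max_words: int = 12) -> str:
    """Stand-in for an LLM-written memo: keep only the first few words."""
    words = text.split()
    head = " ".join(words[:max_words])
    return head + (" ..." if len(words) > max_words else "")


def memorize(memory: Memory, session: str) -> int:
    """Store the full session as a page and append a short memo pointing to it."""
    page_id = len(memory.pages)
    memory.pages[page_id] = session  # full, uncompressed record
    memory.memos.append(f"[page {page_id}] {summarize(session)}")  # lightweight index entry
    return page_id
```

The point of the design is that the memo list stays small enough to sit in the agent's working context, while nothing in `pages` is ever shortened or discarded.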

The second component, the Researcher, is responsible for addressing specific user requests. Using the pre-constructed memos, it plans a search strategy to identify what information is needed. It then retrieves relevant pages from the store using various search tools and integrates the findings. The Researcher uses a reflection mechanism to check if the gathered information is sufficient; if the data is incomplete, it iteratively refines its search plan and looks for more evidence until the request is fully satisfied.
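The plan, retrieve, and reflect cycle described above can be sketched as a short loop. This is a toy illustration, not the authors' code: `research`, `keyword_search`, `toy_plan`, and `toy_reflect` are hypothetical names, keyword matching stands in for the paper's search tools, and the two `toy_` functions stand in for steps that a language model would perform.

```python
from typing import Callable


def keyword_search(pages: dict[int, str], query: str) -> list[int]:
    """Stand-in search tool: return ids of pages sharing a word with the query."""
    terms = set(query.lower().split())
    return [pid for pid, text in pages.items() if terms & set(text.lower().split())]


def research(
    request: str,
    memos: list[str],
    pages: dict[int, str],
    plan: Callable[[str, list[str], dict[int, str]], list[str]],
    reflect: Callable[[str, dict[int, str]], bool],
    max_rounds: int = 3,
) -> dict[int, str]:
    """Iterate plan -> retrieve -> reflect until the evidence is judged sufficient."""
    evidence: dict[int, str] = {}
    for _ in range(max_rounds):
        for query in plan(request, memos, evidence):   # decide what to look for
            for pid in keyword_search(pages, query):   # pull matching pages
                evidence.setdefault(pid, pages[pid])   # integrate new findings
        if reflect(request, evidence):                 # enough to answer?
            break
    return evidence


# Toy stand-ins for the LLM-driven steps (assumptions for illustration only).
def toy_plan(request: str, memos: list[str], evidence: dict[int, str]) -> list[str]:
    # A real planner would read the memos; this one hard-codes two hops.
    return ["alice"] if not evidence else ["paris"]


def toy_reflect(request: str, evidence: dict[int, str]) -> bool:
    return any("managed" in text.lower() for text in evidence.values())


pages = {
    0: "Alice moved to Paris in 2021",
    1: "Paris office is managed by Bob",
    2: "Carol likes tea",
}
found = research("Who manages the office in Alice's city?", [], pages, toy_plan, toy_reflect)
```

In this toy run the first round retrieves only the page about Alice, reflection judges it insufficient, and the second round follows the "Paris" lead to the page that names the manager. Raising `max_rounds` is a crude analogue of giving the researcher more reflection depth.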

Results

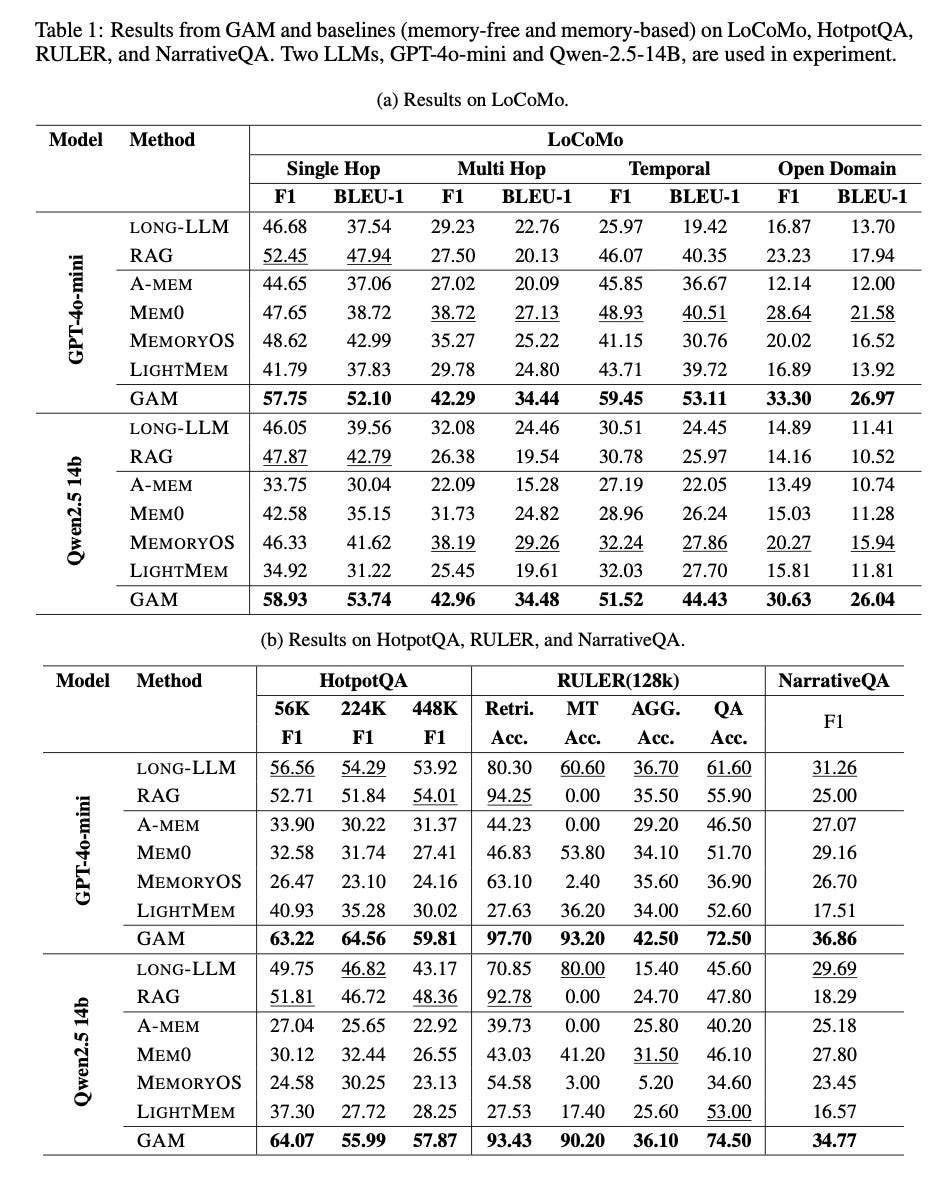

The paper reports that GAM consistently outperforms existing memory systems and retrieval-augmented generation (RAG) methods across multiple benchmarks, including LoCoMo, HotpotQA, and RULER. The system proves particularly effective in multi-hop reasoning tasks, where the agent must track and connect information dispersed throughout a long history. Furthermore, the experiments indicate that the method benefits significantly from test-time scaling: accuracy improves when the Researcher is allowed to perform deeper reflection and retrieve more pages.

Conclusion

In summary, this paper presents a dynamic memory framework that shifts from static, ahead-of-time data compression to an active, just-in-time retrieval process. By combining a lightweight memorizer with a capable researcher agent, the system effectively balances the need for efficient storage with the ability to recall precise details, enabling AI agents to handle long-context tasks with greater accuracy.

For more information, please consult the full paper.

Congrats to the authors for their work!

Yan, B.Y., et al. “General Agentic Memory Via Deep Research.” arXiv preprint arXiv:2511.18423, 2025.