Magpie: Alignment Data Synthesis from Scratch by Prompting Aligned LLMs with Nothing

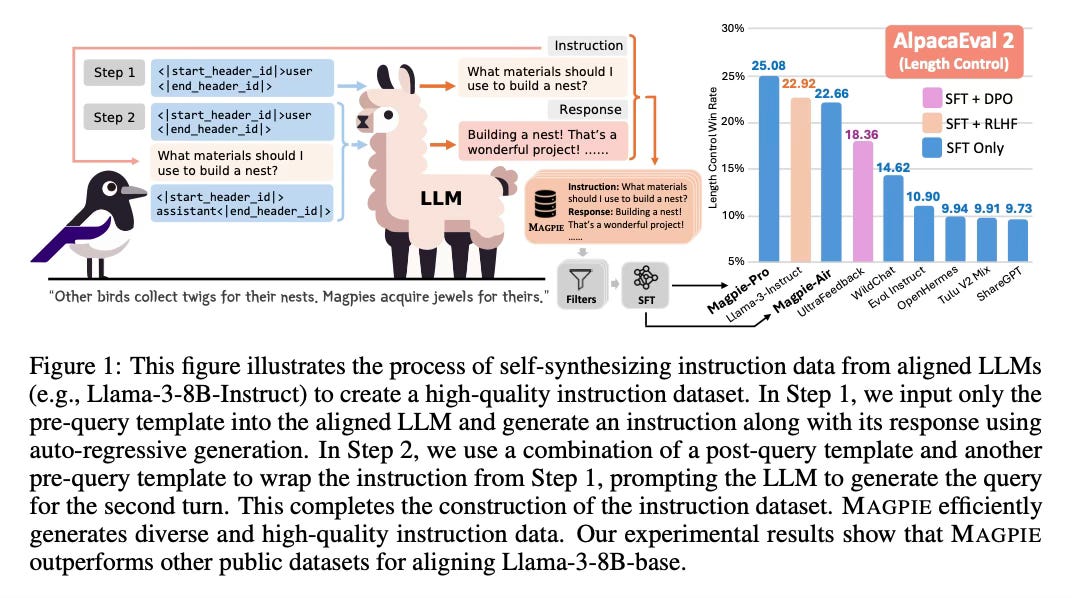

Today's paper presents MAGPIE, a scalable method for synthesizing high-quality instruction data for fine-tuning large language models (LLMs). The key idea is to exploit the auto-regressive nature of aligned LLMs such as Llama-3-Instruct: when prompted with only the opening part of the predefined instruction template, up to the point where the user's message would begin, the model generates a plausible instruction on its own and can then be asked to answer it.

Method Overview

The MAGPIE method consists of two main steps:

Instruction Generation: Given an open-weight aligned LLM like Llama-3-Instruct, MAGPIE builds an input that follows the model's predefined instruction template but stops right after the role marker of the instruction provider (e.g. "user:"), leaving the instruction itself empty. Because the model is auto-regressive, it continues this prompt by writing an instruction. Sampling repeatedly yields a diverse set of instructions without any seed questions or complex prompt engineering.

Response Generation: The instructions generated in the previous step are then provided as prompts to the LLM, which generates corresponding responses. The instructions and responses together form the synthesized instruction dataset.
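The two steps above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the special tokens are the Llama-3 chat-format markers as I understand them (they do not appear in this summary), and `generate` is a hypothetical callable standing in for whatever inference backend is used. A fake generator is included so the sketch runs without a model.

```python
from typing import Callable, Dict, List

# Assumed Llama-3 chat-format markers (not taken from the summary above).
BOS = "<|begin_of_text|>"
EOT = "<|eot_id|>"


def header(role: str) -> str:
    """Role header that opens a turn in the assumed chat format."""
    return f"<|start_header_id|>{role}<|end_header_id|>\n\n"


def pre_query_prompt() -> str:
    """Step 1 prompt: the template up to the user turn, with no instruction text."""
    return BOS + header("user")


def response_prompt(instruction: str) -> str:
    """Step 2 prompt: the completed user turn followed by an open assistant header."""
    return BOS + header("user") + instruction + EOT + header("assistant")


def synthesize(generate: Callable[[str], str], n: int) -> List[Dict[str, str]]:
    """Run both steps n times.

    `generate` is a hypothetical completion function that returns the text
    the model produces up to its end-of-turn token.
    """
    pairs = []
    for _ in range(n):
        instruction = generate(pre_query_prompt()).strip()
        if not instruction:
            continue  # skip empty generations
        response = generate(response_prompt(instruction)).strip()
        pairs.append({"instruction": instruction, "response": response})
    return pairs


def fake_generate(prompt: str) -> str:
    """Stand-in for a real model so the sketch runs offline."""
    if prompt.endswith(header("assistant")):
        return "Paris is the capital of France."
    return "What is the capital of France?"


if __name__ == "__main__":
    for pair in synthesize(fake_generate, 2):
        print(pair)
```

With a real model, `generate` would use sampling (for example, a non-zero temperature) in step 1 so that repeated calls with the same empty prompt produce different instructions.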

MAGPIE can be fully automated without any human intervention. It can also be extended to generate multi-turn conversations and preference data.
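One plausible way to realize the multi-turn extension is to append another empty user header after the assistant's reply and let the model write the follow-up question, then repeat the response step. The sketch below assumes this approach and the same Llama-3 chat-format markers as before; `generate`, `render`, and `extend_conversation` are hypothetical names introduced for illustration, not the authors' code.

```python
from typing import Callable, List, Tuple

# Assumed Llama-3 chat-format markers (not taken from the summary above).
BOS = "<|begin_of_text|>"
EOT = "<|eot_id|>"

Turn = Tuple[str, str]  # (role, text)


def header(role: str) -> str:
    """Role header that opens a turn in the assumed chat format."""
    return f"<|start_header_id|>{role}<|end_header_id|>\n\n"


def render(turns: List[Turn], next_role: str) -> str:
    """Serialize the completed turns, then open a header for `next_role`."""
    body = "".join(header(role) + text + EOT for role, text in turns)
    return BOS + body + header(next_role)


def extend_conversation(
    generate: Callable[[str], str], turns: List[Turn], extra_rounds: int
) -> List[Turn]:
    """Add `extra_rounds` user/assistant exchanges to an existing conversation."""
    turns = list(turns)  # do not mutate the caller's list
    for _ in range(extra_rounds):
        # The model writes the next user message from the open user header.
        follow_up = generate(render(turns, "user")).strip()
        turns.append(("user", follow_up))
        # The model then answers it from the open assistant header.
        reply = generate(render(turns, "assistant")).strip()
        turns.append(("assistant", reply))
    return turns


if __name__ == "__main__":
    def fake_generate(prompt: str) -> str:
        # Stand-in for a real model so the sketch runs offline.
        if prompt.endswith(header("assistant")):
            return "Here is more detail."
        return "Can you elaborate?"

    seed = [("user", "What is MAGPIE?"), ("assistant", "A data synthesis method.")]
    for role, text in extend_conversation(fake_generate, seed, 1):
        print(f"{role}: {text}")
```

Note that `render([], "user")` reduces to the empty-instruction prompt used for single-turn generation, so the same helper covers both cases.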

Results

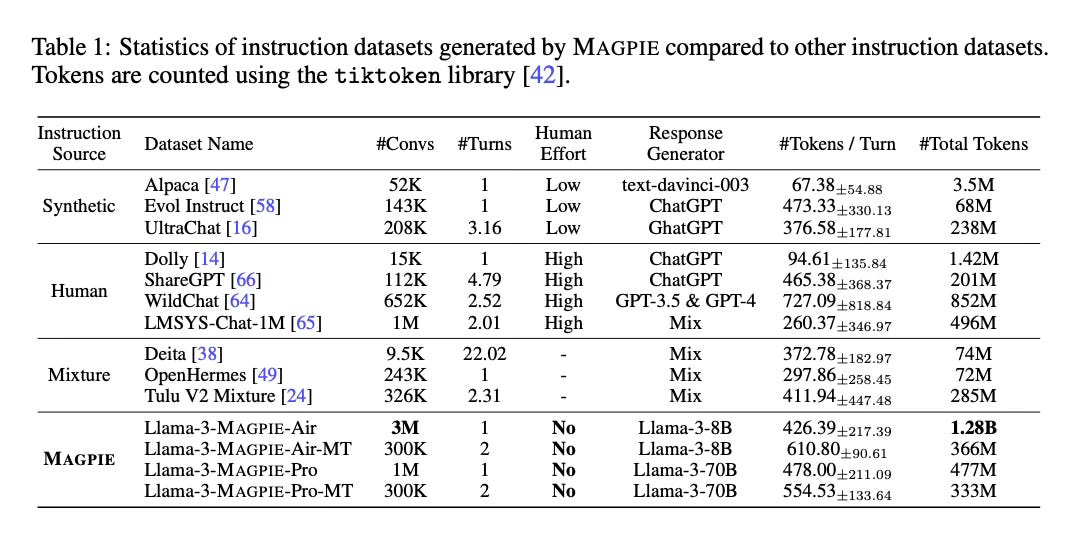

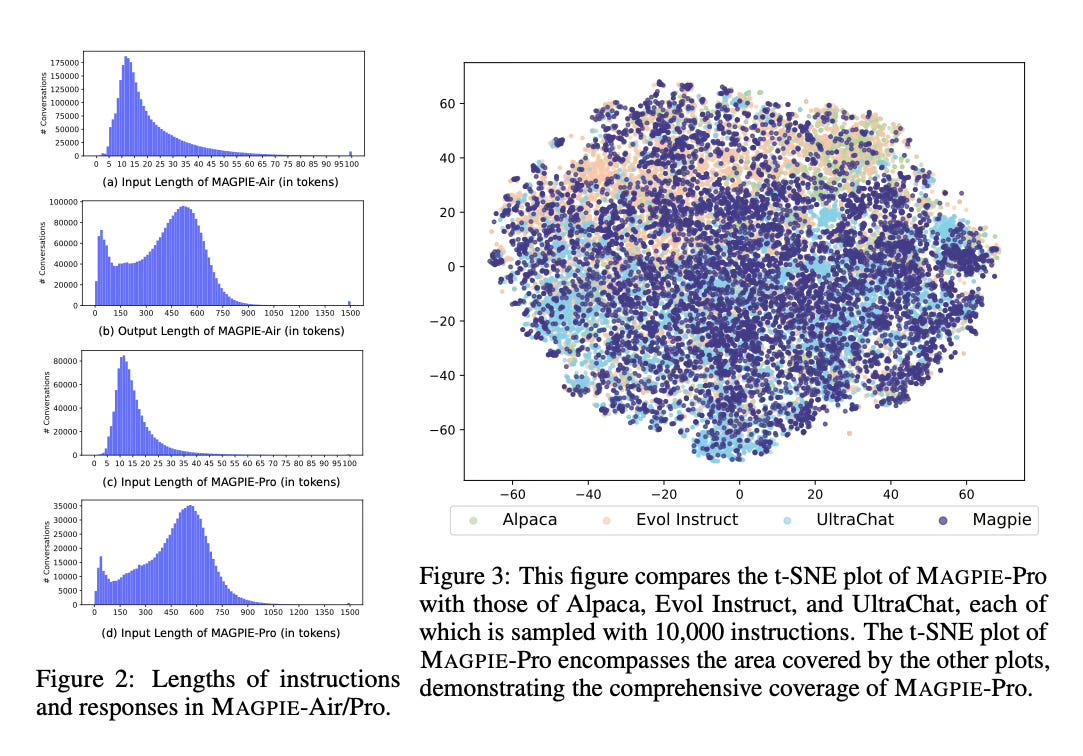

The authors applied MAGPIE to Llama-3-8B-Instruct and Llama-3-70B-Instruct to create the MAGPIE-Air and MAGPIE-Pro datasets, respectively. Their analysis indicates that these datasets compare favorably with existing instruction datasets in quality, diversity, and topic coverage.

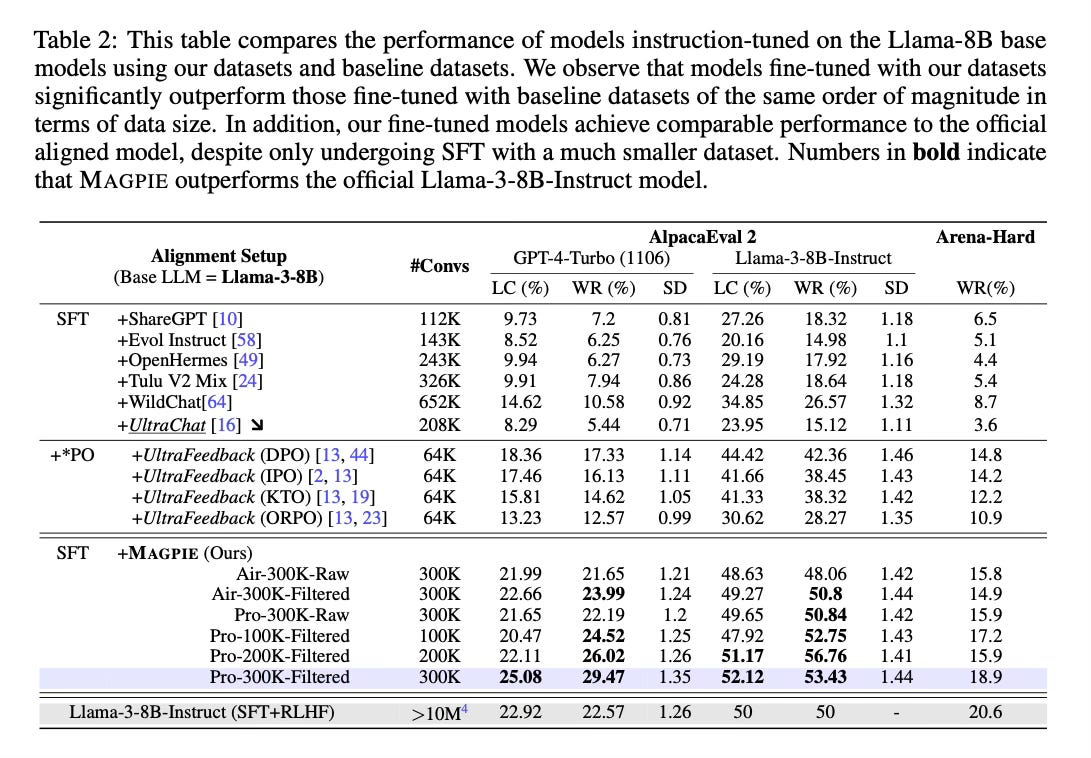

The authors then fine-tuned Llama models on these datasets. Models trained on MAGPIE data outperform those trained on prior instruction datasets such as ShareGPT and WildChat, and on some benchmarks they match or even surpass the official Llama-3-Instruct model, which was tuned on over 10M examples.

Conclusion

MAGPIE provides a scalable way to generate high-quality, diverse instruction data for aligning LLMs without any human effort. Models fine-tuned on MAGPIE data show strong instruction-following performance on the benchmarks the authors report. For more information, please consult the full paper.

Congrats to the authors for their work!

Xu, Zhangchen, et al. "MAGPIE: Alignment Data Synthesis from Scratch by Prompting Aligned LLMs with Nothing." ArXiv, 12 June 2024, https://arxiv.org/abs/2406.08464.