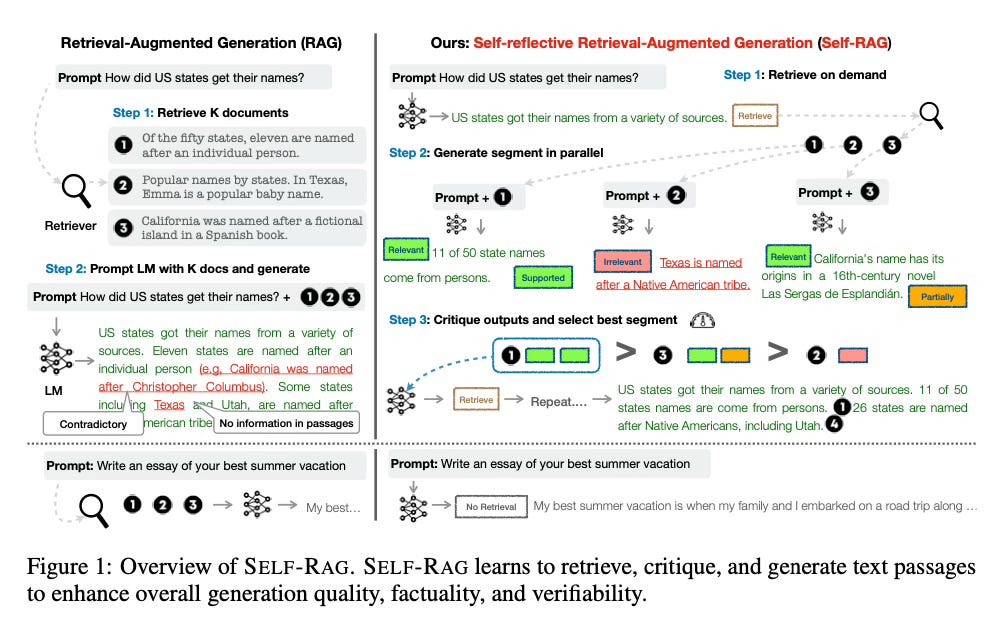

Today’s paper introduces Self-Reflective Retrieval-Augmented Generation (SELF-RAG), a framework that improves the quality and factuality of large language models (LLMs) through retrieval and self-reflection. SELF-RAG trains a single language model to retrieve passages on demand, generate text, and critique both the retrieved passages and its own generations using special reflection tokens.

Method Overview

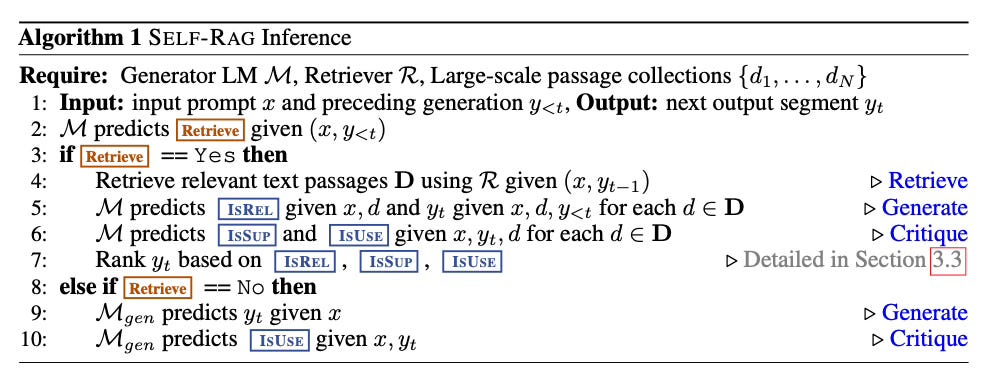

SELF-RAG works in the following way at inference:

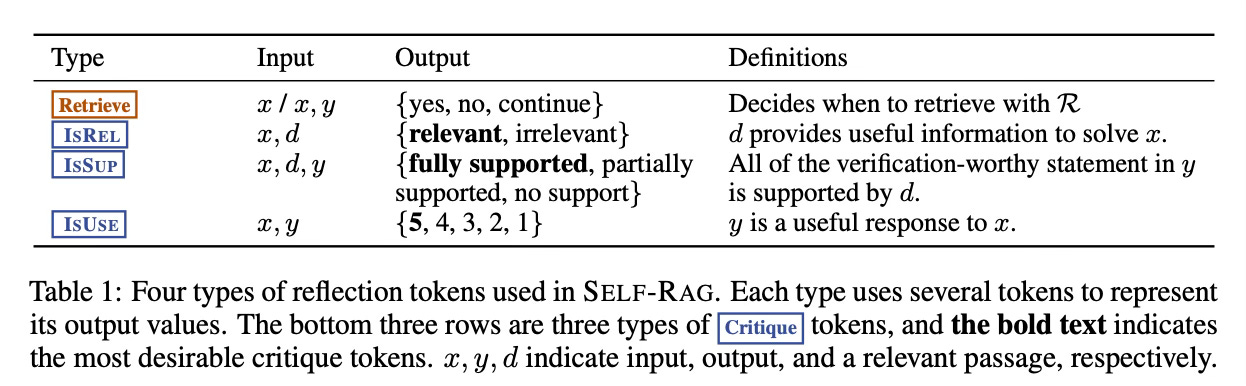

1. Given an input prompt and the preceding generations, SELF-RAG first decides whether augmenting the continued generation with retrieved passages would help. If so, it outputs a retrieval token that calls a retriever model on demand to fetch relevant passages. If not, it predicts the next token as a standard LLM would.

2. SELF-RAG then concurrently processes the multiple retrieved passages, evaluating their relevance. It generates corresponding task outputs for each passage.

3. After generating outputs, SELF-RAG generates critique tokens that assess its own outputs in terms of factuality and overall quality. It uses these assessments to select the best generated output segment.
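The three inference steps above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the `Candidate` fields, the `critique_score` weights, and the `wants_retrieval`, `retrieve`, and `generate` callables are all hypothetical stand-ins for the model and retriever, and the sketch scores a single segment rather than running a full decoding procedure.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Candidate:
    """One generated segment plus scores read off its critique tokens."""
    passage: Optional[str]  # None when no retrieval was used
    text: str
    relevance: float  # is the passage relevant to the prompt?
    support: float    # is the text supported by the passage (factuality)?
    utility: float    # overall quality of the text


def critique_score(c: Candidate, w_rel: float = 1.0, w_sup: float = 1.0,
                   w_use: float = 0.5) -> float:
    """Weighted sum of critique scores; the weights are assumptions."""
    return w_rel * c.relevance + w_sup * c.support + w_use * c.utility


def self_rag_step(
    prompt: str,
    wants_retrieval: Callable[[str], bool],
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str, Optional[str]], Candidate],
) -> Candidate:
    # Step 1: decide whether retrieval would help; if not, generate directly.
    if not wants_retrieval(prompt):
        return generate(prompt, None)
    # Step 2: produce one candidate output per retrieved passage.
    candidates = [generate(prompt, p) for p in retrieve(prompt)]
    if not candidates:
        return generate(prompt, None)
    # Step 3: keep the candidate with the best critique score.
    return max(candidates, key=critique_score)


# Toy stand-ins so the sketch runs end to end (purely illustrative).
def toy_generate(prompt: str, passage: Optional[str]) -> Candidate:
    if passage is None:
        return Candidate(None, "ungrounded answer", 0.0, 0.0, 0.5)
    on_topic = "SELF-RAG" in passage
    return Candidate(
        passage,
        f"answer based on: {passage}",
        relevance=1.0 if on_topic else 0.0,
        support=0.9 if on_topic else 0.1,
        utility=0.8,
    )


best = self_rag_step(
    "What is SELF-RAG?",
    wants_retrieval=lambda p: p.endswith("?"),
    retrieve=lambda p: ["SELF-RAG uses reflection tokens.",
                        "Unrelated text about cooking."],
    generate=toy_generate,
)
```

In this toy run the on-topic passage wins because its relevance and support scores dominate the weighted sum. In the real system those scores would come from the model's own reflection tokens rather than from a keyword check.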

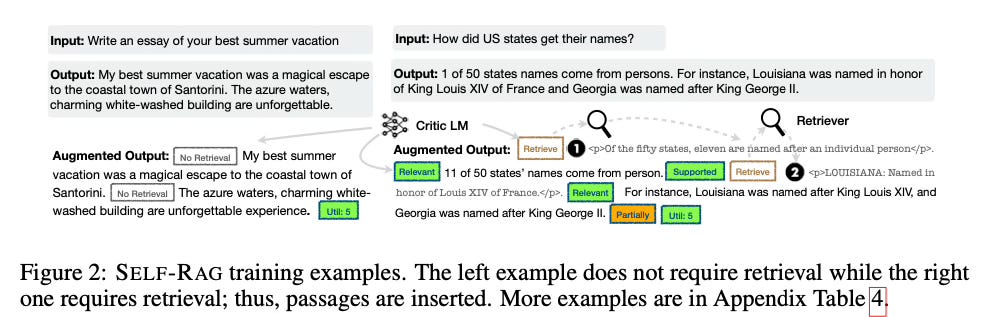

During training, the generator LLM learns from a diverse collection of text interleaved with reflection tokens and retrieved passages. A separate critic model inserts the reflection tokens into the training data offline, so the generator learns to produce text and reflection tokens together in a unified way.
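A rough sketch of how such an offline annotation pass might look is given below. It assumes a critic that returns critique tokens for each segment; the token spellings (`[Retrieve]`, `[Supported]`, and so on), the `<p>` passage wrapper, and the `annotate` and `toy_critic` functions are hypothetical and chosen only for illustration. As a simplification, the retrieval token here is derived from whether a passage is attached rather than predicted by the critic.

```python
from typing import Callable, List, Optional, Tuple

# One training segment: (segment text, retrieved passage or None).
Segment = Tuple[str, Optional[str]]


def annotate(
    segments: List[Segment],
    critic: Callable[[str, Optional[str]], List[str]],
) -> str:
    """Interleave text, passages, and reflection tokens into one training string."""
    parts: List[str] = []
    for text, passage in segments:
        if passage is not None:
            # Mark that retrieval happened and include the passage itself.
            parts.append("[Retrieve]")
            parts.append(f"<p>{passage}</p>")
        else:
            parts.append("[No Retrieve]")
        parts.append(text)
        # Critique tokens from the critic follow the segment they judge.
        parts.extend(critic(text, passage))
    return " ".join(parts)


def toy_critic(text: str, passage: Optional[str]) -> List[str]:
    """Stand-in critic: a substring check instead of a trained model."""
    if passage is None:
        return ["[Useful]"]
    supported = "[Supported]" if text in passage else "[Not Supported]"
    return ["[Relevant]", supported, "[Useful]"]


example = annotate(
    [
        ("Paris is the capital of France.",
         "Paris is the capital of France. It lies on the Seine."),
        ("Hope that helps!", None),
    ],
    toy_critic,
)
```

The generator would then be trained on strings like `example` with an ordinary next-token objective, which is what lets it emit reflection tokens on its own at inference time.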

Results

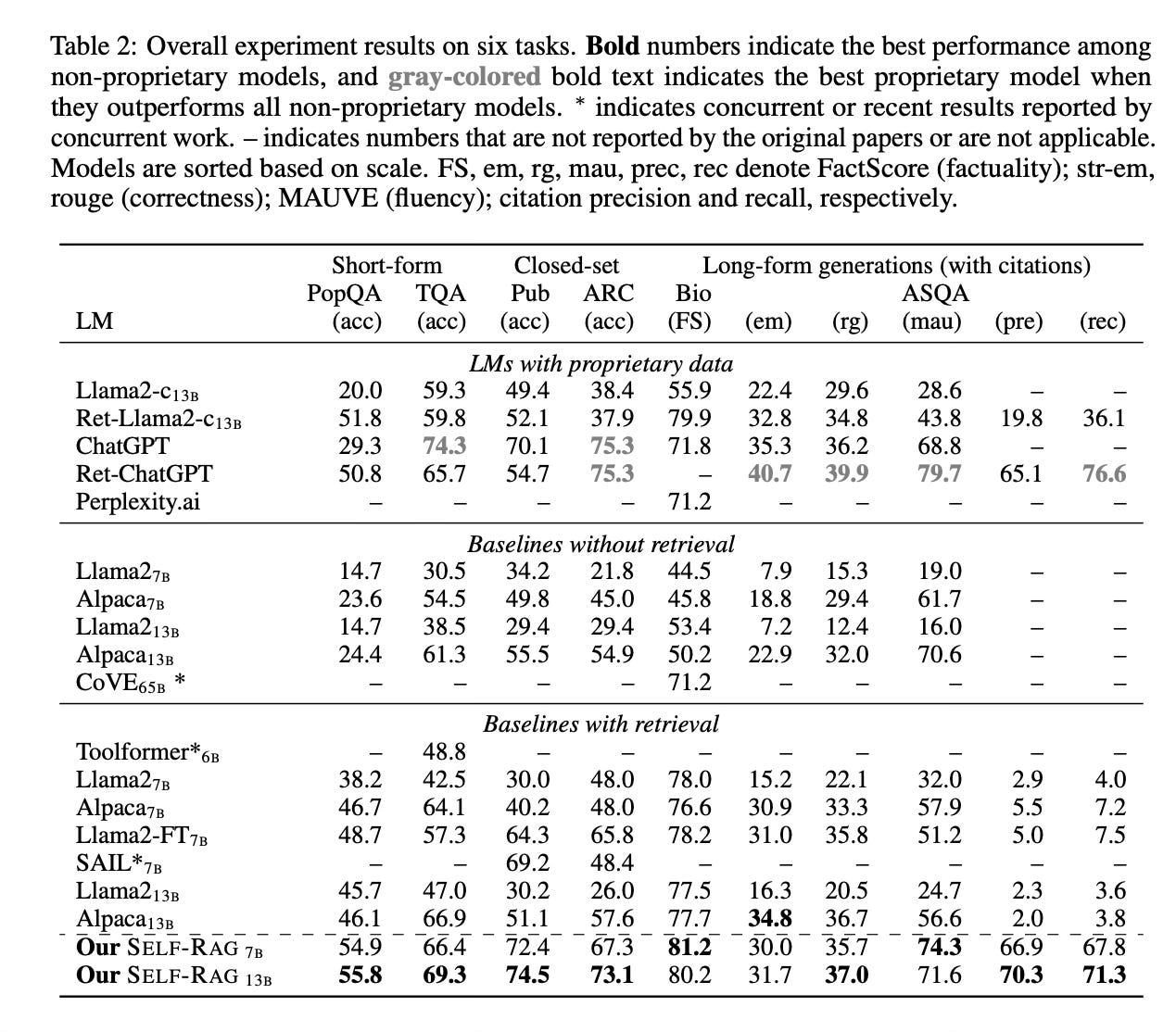

Experiments on six diverse tasks show that SELF-RAG (using a Llama-2 base architecture) significantly outperforms pre-trained and instruction-tuned LLMs with more parameters, as well as widely used retrieval-augmented generation approaches. Notably, SELF-RAG outperforms the retrieval-augmented ChatGPT on four tasks, and surpasses Llama2-chat and Alpaca on all evaluated tasks. SELF-RAG also demonstrates higher citation accuracy in its generated outputs.

Conclusion

SELF-RAG improves LLM generation quality and factuality through on-demand retrieval and self-reflection with special reflection tokens. For more information, please consult the full paper.

Congrats to the authors for their work!

Asai, Akari, et al. "SELF-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection." arXiv preprint arXiv:2310.11511 (2023).